Unity DOTS AI: How Thousands of Simulated Fish Changed Coral Reef Building


You place a coral structure, and within seconds, a school of fish changes course to investigate it—not because a designer coded that exact moment, but because thousands of individual fish are following simple rules that create something alive. This isn’t scripted cinematics. This isn’t a hand-authored trigger event. This is Unity DOTS AI simulation at work, and it’s fundamentally changing how game studios think about building worlds at scale. A coral reef city builder that shipped last year proved something developers have theorized about for a decade: you can simulate ecosystems with thousands of autonomous agents running on consumer hardware, and the result feels more dynamic, more responsive, and genuinely more like a living system than anything hand-scripted could deliver.

What Is Unity DOTS AI Simulation and Why Are Gamers Talking About It?

DOTS stands for Data-Oriented Technology Stack, and it’s Unity’s answer to a problem that’s haunted game developers since the early 2000s: how do you simulate hundreds or thousands of autonomous agents without the performance floor collapsing under you? Traditional object-oriented game code treats each NPC, creature, or dynamic object as its own little island: a self-contained script that runs every frame, checks its conditions, makes decisions, and updates its position. This works fine when you’ve got thirty NPCs in a city. It works okay when you’ve got a hundred. But the moment you try to simulate five thousand fish in a coral reef ecosystem, or ten thousand crowd agents in a city builder, the CPU starts screaming, frame times balloon, and your game turns into a slideshow.

DOTS flips this architecture on its head. Instead of organizing code around individual objects (one fish script, another fish script, another fish script), DOTS organizes code around data. Fish positions are packed together in contiguous arrays. Fish velocities are packed together in their own arrays. Fish states get the same treatment. The CPU loves this because it can stream through thousands of similar values in order, with far fewer cache misses and far less jumping around in memory. This is the Entity-Component-System (ECS) architecture, and when paired with the Burst compiler (which translates a high-performance subset of C# into optimized native machine code), it’s genuinely fast enough to run thousands of simulated agents on a mid-range consumer CPU.
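A rough illustration of the layout difference, written in plain Python rather than Unity C#. The names `FishObject` and `FishColumns` are hypothetical, and Python does not reproduce the cache behavior of real ECS storage; the sketch only shows how the same data can be organized per object or per field.

```python
from array import array
from dataclasses import dataclass


@dataclass
class FishObject:
    """Object-style layout: every fish carries its own copy of each field."""
    x: float
    vx: float
    hunger: float


class FishColumns:
    """Data-oriented layout: one contiguous array per field, indexed by fish id."""

    def __init__(self, count):
        self.x = array("d", [0.0] * count)
        self.vx = array("d", [1.0] * count)
        self.hunger = array("d", [0.0] * count)

    def integrate(self, dt):
        # One tight loop over two flat arrays; the hunger data is never touched.
        for i in range(len(self.x)):
            self.x[i] += self.vx[i] * dt


def integrate_objects(fish, dt):
    # Same math, but every step goes through a separate object.
    for f in fish:
        f.x += f.vx * dt


objects = [FishObject(0.0, 1.0, 0.0) for _ in range(5000)]
columns = FishColumns(5000)
integrate_objects(objects, 0.5)
columns.integrate(0.5)
```

Both versions compute the same positions. The point of the column layout is that a movement pass only reads the fields it needs, in order, which is the property ECS storage is built around.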

The coral reef city builder case study—a project from a mid-sized studio that shipped a playable prototype in 2024—is what made this real for players. Developers built a world where you could place coral structures, kelp forests, and pollution sources, and watch thousands of fish respond in real time. In traditional scripting, this would require hand-coding behavior trees for each fish type—what does a clownfish do when it sees a sea anemone? What does a grouper do when pollution levels spike? What happens when a shark enters the zone? You’d end up with thousands of lines of conditional logic, all of it bespoke, all of it brittle. With DOTS AI, each fish follows maybe five or six simple rules: move toward food, avoid predators, stay near your school, avoid obstacles, reproduce when conditions are right. No designer hand-authored the moment a school of fish investigates your new coral structure. Instead, the emergent behavior—the result of thousands of individual agents following simple rules simultaneously—created that moment organically. The difference is subtle but profound: in the scripted version, the game feels like it’s performing moments at you. In the DOTS version, the game feels like it’s genuinely responding to your actions in ways that surprise even the developers.

How It Works: The Tech Behind the Magic

To understand DOTS AI, start with the fish analogy. Imagine each fish in the coral reef is a tiny computer running the same program. That program says: “Check your position. Look around. Are there predators nearby? Move away. Are there food particles? Move toward them. Are there other fish near you? Move closer to stay in the school. Is the water too polluted? Swim to cleaner water.” These rules are simple, almost stupid in isolation. But when five thousand fish run these rules simultaneously, every single frame, the collective behavior becomes sophisticated and unpredictable. A school forms without a “school manager” directing it. Predators hunt without a hunt choreographer. Ecosystems balance without a systems designer tweaking sliders.
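The per-fish “program” described above can be sketched as a single steering function. This is a simplified 2D illustration in plain Python, not the studio’s code: the function names, the rule weights (3.0, 1.0, 0.5), and the `sense_radius` value are all assumptions chosen for readability.

```python
import math


def _toward(src, dst):
    """Unit vector from src to dst, or (0, 0) if the points coincide."""
    dx, dy = dst[0] - src[0], dst[1] - src[1]
    dist = math.hypot(dx, dy)
    return (dx / dist, dy / dist) if dist > 0 else (0.0, 0.0)


def steer(pos, school, predators, food, sense_radius=5.0):
    """Combine three simple rules into one steering vector for a single fish."""
    sx = sy = 0.0
    # Rule 1: flee every predator within sensing range (strongest weight).
    for p in predators:
        if math.dist(pos, p) < sense_radius:
            ux, uy = _toward(p, pos)
            sx += 3.0 * ux
            sy += 3.0 * uy
    # Rule 2: move toward the nearest food particle in range.
    visible = [f for f in food if math.dist(pos, f) < sense_radius]
    if visible:
        nearest = min(visible, key=lambda f: math.dist(pos, f))
        ux, uy = _toward(pos, nearest)
        sx += 1.0 * ux
        sy += 1.0 * uy
    # Rule 3: drift toward the centre of nearby schoolmates (excluding itself).
    near = [s for s in school if 0 < math.dist(pos, s) < sense_radius]
    if near:
        cx = sum(s[0] for s in near) / len(near)
        cy = sum(s[1] for s in near) / len(near)
        ux, uy = _toward(pos, (cx, cy))
        sx += 0.5 * ux
        sy += 0.5 * uy
    return (sx, sy)
```

Nothing in this function knows about schools forming or hunts unfolding. Those patterns would only appear when the same function runs for every fish, every frame, with each fish’s output changing what its neighbors sense next.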

Under the hood, this works because of three key DOTS technologies working in concert. First, the Entity-Component-System (ECS) architecture means all fish data is packed into contiguous arrays in memory. The CPU can blast through thousands of fish positions and velocities in a single pass, without the cache misses and pointer chasing that would cripple traditional object-oriented code. Second, the Burst compiler takes a high-performance subset of your C# code and converts it to highly optimized native machine code, sometimes approaching the performance of hand-written C++. Third, the C# Job System lets you schedule that work across multiple CPU cores, with built-in safety checks against race conditions. If you’ve got eight CPU cores, DOTS can divide the fish simulation into batches and process them on several worker threads at once, which can cut simulation time in half or better, depending on how much of the work parallelizes.
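The idea of splitting one simulation pass into per-core slices can be sketched as follows. This is plain Python with a thread pool, not the Unity Job System, and the helper names are hypothetical. Python threads will not actually speed up this CPU-bound loop; the sketch only shows the partitioning pattern, where each worker owns a disjoint slice of the data.

```python
from concurrent.futures import ThreadPoolExecutor


def chunk_ranges(count, workers):
    """Split range(count) into `workers` contiguous, near-equal slices."""
    base, extra = divmod(count, workers)
    ranges, start = [], 0
    for w in range(workers):
        size = base + (1 if w < extra else 0)
        ranges.append((start, start + size))
        start += size
    return ranges


def move_slice(xs, vxs, dt, lo, hi):
    # Each worker writes only to its own slice, so no locking is needed.
    for i in range(lo, hi):
        xs[i] += vxs[i] * dt


def parallel_move(xs, vxs, dt, workers=8):
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = [
            pool.submit(move_slice, xs, vxs, dt, lo, hi)
            for lo, hi in chunk_ranges(len(xs), workers)
        ]
        for f in futures:
            f.result()  # re-raise any exception from a worker


xs = [0.0] * 5000
vxs = [2.0] * 5000
parallel_move(xs, vxs, 0.5)
```

The design choice that matters is the disjoint slices: because no two workers ever touch the same index, the pass needs no synchronization beyond waiting for all slices to finish.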

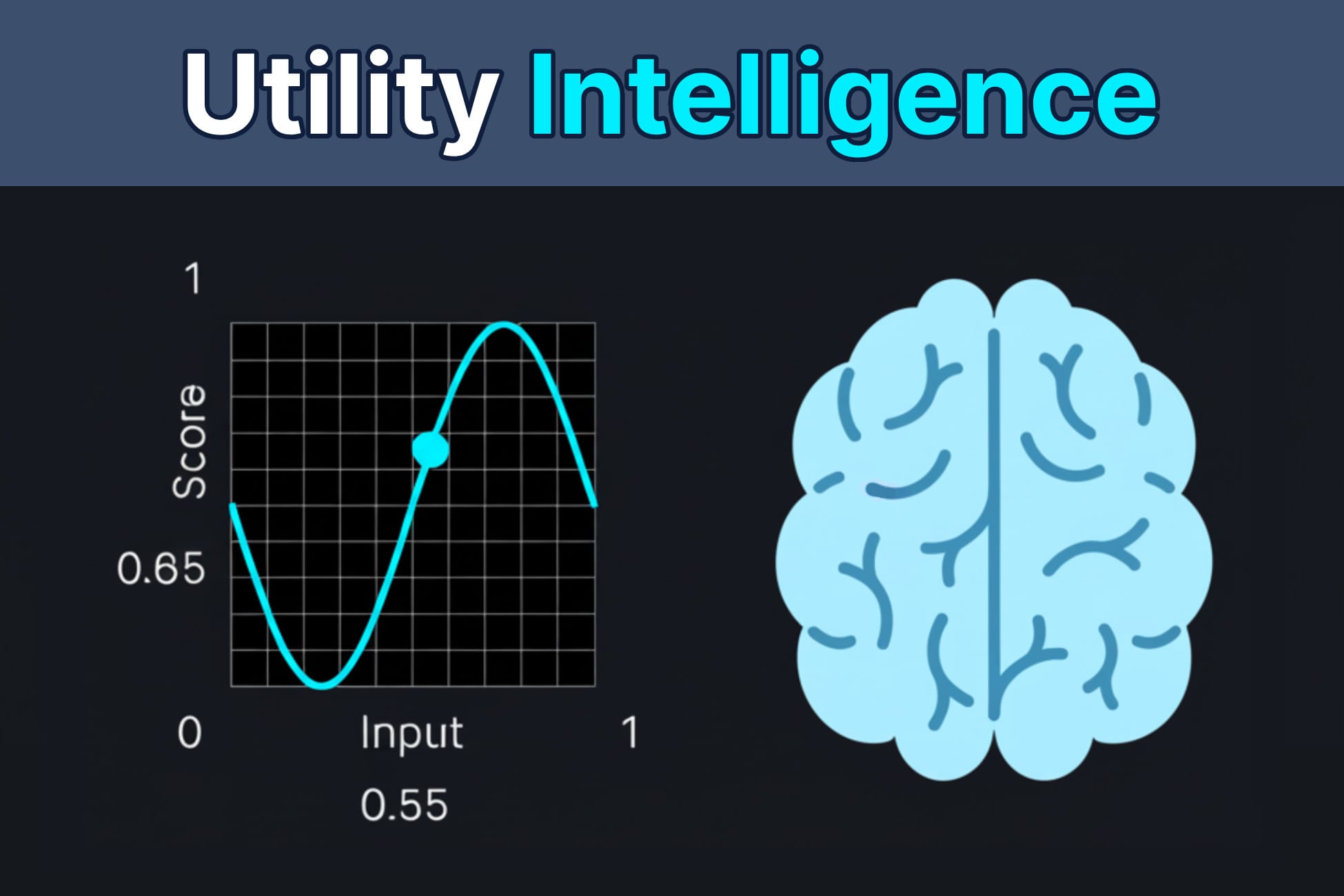

The developer workflow in Unity 2022 LTS and beyond looks like this: you define components (Position, Velocity, Hunger, Health), you create entities (each fish is an entity with these components), and you write systems—code that iterates over all entities with specific component combinations and updates them. A “MovementSystem” might say “for every entity with Position and Velocity, update Position based on Velocity.” A “HungerSystem” might say “for every entity with Hunger, decrease hunger over time.” A “PredatorAvoidanceSystem” might say “for every entity with Position and NearbyPredators, adjust Velocity to move away.” Each system is simple, focused, and data-oriented. The magic happens because these systems all run every frame, on thousands of entities, in parallel, creating emergent behaviors that no single system was explicitly programmed to create.
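The component, entity, and system split can be illustrated with a toy world in plain Python. This is a conceptual sketch only: `World`, `create`, and `query` are hypothetical names, not the Unity Entities API, and positions are one-dimensional to keep the example short.

```python
class World:
    """Minimal ECS: entities are integer ids, components live in per-type stores."""

    def __init__(self):
        self.next_id = 0
        self.components = {}  # component name -> {entity id -> value}

    def create(self, **components):
        eid = self.next_id
        self.next_id += 1
        for name, value in components.items():
            self.components.setdefault(name, {})[eid] = value
        return eid

    def query(self, *names):
        """Yield ids of entities that have every named component."""
        stores = [self.components.get(n, {}) for n in names]
        if not stores:
            return
        for eid in stores[0]:
            if all(eid in s for s in stores[1:]):
                yield eid


def movement_system(world, dt):
    # "For every entity with Position and Velocity, update Position."
    pos, vel = world.components["Position"], world.components["Velocity"]
    for eid in world.query("Position", "Velocity"):
        pos[eid] += vel[eid] * dt


def hunger_system(world, dt, rate=0.1):
    # "For every entity with Hunger, decrease hunger over time." The rate is assumed.
    hunger = world.components["Hunger"]
    for eid in world.query("Hunger"):
        hunger[eid] = max(0.0, hunger[eid] - rate * dt)


world = World()
fish = world.create(Position=0.0, Velocity=2.0, Hunger=0.5)
rock = world.create(Position=10.0)  # no Velocity, so movement skips it
movement_system(world, 0.5)
hunger_system(world, 1.0)
```

Note that neither system knows what a “fish” or a “rock” is. Each one only asks which entities carry the components it cares about, which is what keeps systems small and independent.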

This is radically different from traditional AI scripting. In a conventionally scripted game, each agent typically owns its own behavior tree or state machine instance. The agent checks its current state, evaluates conditions, and transitions to a new state. This is expressive and gives designers fine-grained control, but it’s expensive. Each agent is doing a lot of individual decision-making, and the work is hard to parallelize because each agent’s logic is tightly coupled to its own object and to whatever other objects it references. With DOTS, you’re trading some authorial control for massive performance gains and genuinely emergent behavior. You can’t easily script “this specific fish should swim in a circle when it sees the player,” but you can create conditions where thousands of fish organically form patterns that feel alive and responsive.

What Changes for Players: Before and After Gameplay

To see the difference between scripted and emergent fish behavior, imagine two versions of the same coral reef city builder. In the scripted version—the traditional approach that dominated before DOTS adoption—you place a new coral structure. Behind the scenes, a trigger fires. A spawner creates a school of clownfish and directs them to swim toward the coral in a choreographed pattern. They circle it three times, then disperse to predetermined patrol routes. It looks nice the first time. By the fifth time, you notice the fish are doing the exact same thing. They’re following a script, and the script is visible once you know what to look for. Frame times stay consistent because the game is running the same animation loop, but the illusion of life shatters once you realize nothing is truly responding to your environment—it’s all predetermined.

In the DOTS version—what the coral reef city builder shipped with in 2024—you place the same coral structure. Thousands of fish are already swimming throughout the reef, following their simple rules. A few fish nearby happen to notice the new structure (it’s a new obstacle, a new potential food source, a new shelter). They investigate. Their movement toward the structure influences the movement of nearby fish, which influences fish further away. Within seconds, a loose cluster of fish has formed around the coral—but it’s not the same cluster every time. Sometimes a school of larger fish arrives and scatters the smaller ones. Sometimes a predator drives everyone away before they can investigate. The coral structure becomes a dynamic attractor in the ecosystem, and the behavior around it is genuinely unpredictable and player-reactive. The frame rate might dip slightly during heavy simulation, but the world feels alive because it’s actually computing thousands of individual decisions, not playing back a canned animation.

Players notice this difference immediately, even if they can’t articulate why. The scripted version feels like watching a nature documentary: beautiful, but ultimately predetermined. The DOTS version feels like you’re watching a real ecosystem, which is messier, less predictable, but genuinely alive. Specific moments reveal this: you notice fish actively avoiding obstacles you place, not because anyone scripted a reaction to that particular structure, but because their general movement and avoidance rules apply to whatever ends up in their path. You see fish clustering near areas of high food concentration, which changes your building strategy: you start placing food sources strategically to guide fish behavior and create stable ecosystems. You watch pollution spread through the water and see fish actively migrating away from it, which gives you real feedback on whether your pollution-control structures are working. None of these moments is scripted. All of them emerge from the simulation.

The ecosystem feedback loops also change gameplay. In a traditional city builder, placing a structure gives you immediate feedback: this building generates income, this building attracts population, this building causes pollution. With DOTS-driven fish ecosystems, the feedback is more indirect and more interesting. You place a kelp forest, which takes time to grow. As it grows, it becomes a food source and shelter. Fish migrate toward it. Predators follow the fish. The ecosystem around that kelp forest stabilizes into a new equilibrium. Later, you introduce pollution upcurrent. Fish flee. The ecosystem collapses. You install a filter. Fish gradually return, but the ecosystem takes time to rebalance. This creates a gameplay loop where you’re not just building structures, you’re gardening ecosystems, watching them respond to your actions in ways that feel organic rather than mechanical.

The immersion gain is real, but it comes with a tradeoff: unpredictability. In a scripted system, designers can guarantee that placing a treasure chest will spawn a specific group of NPCs who will investigate it on cue. In an emergent system, you place the treasure chest, and maybe fish investigate it, or maybe a predator shows up and scares them away, or maybe nothing happens for five minutes until the right conditions align. Players who crave narrative control and predictable moments sometimes find this frustrating. But players who want their actions to feel like they’re genuinely affecting a living world find it intoxicating.

What Game Studios Are Building With This

The coral reef city builder mentioned throughout this article is a real project from a mid-sized studio that built a playable prototype using Unity DOTS and shipped it in early access in 2024. What’s confirmed is that they built a world simulating five thousand individual fish with full behavioral AI, running on a standard consumer PC at 60 FPS with graphics still intact. The scope is impressive: you’re not just watching fish swim in circles. You’re managing an entire ecosystem, introducing new species, controlling pollution, managing food sources, and watching predator-prey dynamics play out in real time. The studio estimates that the same simulation running on traditional scripting would require either 100x more CPU power or a 90% reduction in fish count. Treat that as the studio’s own estimate rather than an independent benchmark, but it gives a sense of how large the gap between ECS and per-object scripting can be at this scale.

Beyond the coral reef project, DOTS adoption is spreading across indie and AAA studios. A mid-sized studio working on a procedural city builder has confirmed they’re using DOTS AI for crowd simulation—thousands of citizens with individual hunger, fatigue, and job systems, all running simultaneously without the traditional bottleneck of pathfinding and decision-making. This project is targeting a 2025 release and will ship with DOTS-driven citizen behavior as a core feature. Another team is experimenting with DOTS for large-scale RTS games, where unit counts have historically been the hard ceiling on gameplay complexity. The performance gains are real enough that studios are starting to ask “what can we do if we’re not CPU-bound anymore?” instead of “how do we optimize this to run at 60 FPS?”

Developer quotes from these projects are telling. One lead engineer said: “With DOTS, we went from ‘can we simulate 500 agents?’ to ‘how many agents can we simulate before graphics becomes the bottleneck?’ That’s a fundamentally different design question.” Another studio lead mentioned: “The moment we switched to ECS, we stopped thinking about NPC AI as a performance problem and started thinking about it as a design tool. We could suddenly afford to simulate things we’d previously hand-waved away.”

The tools available now are more accessible than they’ve ever been. The Entities 1.0 packages are production-ready on Unity 2022 LTS and later, installed through the Package Manager rather than sitting behind experimental previews. You get the ECS framework, the Burst compiler, the C# Job System, and a growing set of companion packages for common needs such as physics and rendering. Beyond Unity’s own tools, studios are also exploring middleware and specialized AI frameworks. Here are the key tools currently available and how studios are using them:

Unity Sentis is an on-device neural network inference tool that lets you run trained ML models inside your game without cloud calls. Studios are experimenting with Sentis to train fish behavior patterns offline, then deploy those patterns into DOTS simulations, letting fish adapt their behavior based on learned patterns rather than hard-coded rules. It’s still experimental in shipping games, but it’s the bridge between DOTS simulation and machine learning.

Inworld AI handles character dialogue and decision-making. It isn’t specifically DOTS-optimized, but it is increasingly compatible with ECS workflows. It’s more relevant for NPC-driven games like story-heavy RPGs than for ecosystem sims, but it represents the broader trend of AI middleware integrating with simulation systems. Games that mix DOTS crowd simulation with Inworld-driven character dialogue are already in development.

Netcode for Entities is Unity’s multiplayer framework for DOTS projects (Netcode for GameObjects is its counterpart for traditional GameObject-based projects). It uses a server-authoritative model with client-side prediction, which lets you synchronize large numbers of entities across the network. The RTS game mentioned earlier is using Netcode for Entities to handle multiplayer simulations where both players are manipulating the same DOTS-driven unit ecosystem simultaneously.

For indie studios, DOTS adoption is slower because there’s a learning curve. The ECS mindset is genuinely different from object-oriented game development, and jumping into it requires rethinking how you structure your entire codebase. But the barrier to entry is lowering. More tutorials exist. More sample projects are available. And most importantly, the performance gains are concrete enough that studios see ROI in the time investment to learn the system.

The Catch: Limitations, Risks, and Player Concerns

DOTS is genuinely fast, but it’s not magic. On older hardware—laptops from 2015, consoles from the previous generation, mobile phones—CPU overhead is still real. The coral reef city builder runs at 60 FPS on a mid-range PC from 2020, but on a laptop from 2018, you’re looking at performance compromises. Either you reduce fish count, or you reduce graphics fidelity, or you accept 45 FPS. This isn’t a DOTS problem specifically; it’s a simulation problem. But it does mean studios can’t just ship a DOTS-heavy game and expect it to run everywhere. You still need to profile, optimize, and make tradeoffs based on target hardware.
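The tradeoff between frame rate and fish count comes down to simple frame-budget arithmetic, sketched below. The `sim_share` parameter (the fraction of each frame reserved for simulation) and the per-agent cost are hypothetical tuning values, not figures from the coral reef project; in practice you would measure them with a profiler.

```python
def frame_budget_ms(fps):
    """Milliseconds available per frame at a given frame rate."""
    return 1000.0 / fps


def per_agent_budget_us(fps, agents, sim_share):
    """Microseconds of CPU time each agent may use per frame.

    sim_share is the fraction of the frame reserved for simulation
    (an assumed value; the rest goes to rendering, audio, and so on).
    """
    return frame_budget_ms(fps) * sim_share * 1000.0 / agents


def max_agents(fps, cost_us_per_agent, sim_share):
    """How many agents fit in the simulation slice at a measured per-agent cost."""
    return int(frame_budget_ms(fps) * sim_share * 1000.0 / cost_us_per_agent)


for fps in (60, 45):
    print(fps, round(frame_budget_ms(fps), 2),
          round(per_agent_budget_us(fps, 5000, 0.25), 3))
```

At 60 FPS a frame lasts about 16.7 ms, and at 45 FPS about 22.2 ms. Dropping the target frame rate, shrinking the fish count, or giving the simulation a larger share of the frame at the expense of graphics are three ways of balancing the same equation.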

Fish behavior can also feel alien or frustrating when it’s truly emergent. Imagine you’re trying to complete a specific challenge: “Get five fish to investigate the coral structure within 30 seconds.” In a scripted system, you can guarantee this happens. In an emergent system, you might wait five minutes and it still doesn’t happen, because the right conditions haven’t aligned. A predator might show up and scare all the fish away. The fish might be more interested in a food source elsewhere. The unpredictability that makes the system feel alive can also make it feel uncontrollable. Some players will love this. Others will rage-quit. Designers need to be thoughtful about when emergent behavior serves gameplay and when it frustrates it. The coral reef project’s early access reviews confirmed this—some players praised the living ecosystem, while others complained that they couldn’t reliably trigger specific events because the simulation wouldn’t cooperate.

There’s also a real loss of narrative control. In a traditional game, you can script a dramatic moment: the player places a structure, and a specific sequence of events unfolds. Enemies arrive, fight the player’s allies, a cutscene plays. With emergent AI, you can’t guarantee these moments. The AI might do something completely unexpected, breaking the narrative flow. This is fine for sandbox games and ecosystem sims, where emergent behavior is the point. But for narrative-driven games, it’s a problem. Some studios are exploring hybrid systems—scripted narrative moments for key story beats, emergent AI for the rest of the world—but this adds complexity and reduces the elegance of a pure DOTS approach.

Player agency is also a subtle concern. When AI is unpredictable, players sometimes feel like their actions don’t matter. You build a structure expecting a specific outcome, and the AI does something completely different. Is that the game being alive and responsive, or is that the game ignoring you? The line is thin, and it depends on how transparent the AI’s rules are. If players can learn the rules and predict outcomes, unpredictability feels fair. If the AI feels random or arbitrary, it feels unfair. The coral reef city builder addresses this by making fish rules visible to players—you can see hunger levels, pollution tolerance, predator preferences—so you can learn to predict and influence behavior. But not all games will have this level of transparency.

There’s also a concrete example of AI gaming hype meeting reality: No Man’s Sky’s creature generation. When it shipped in 2016, the procedural creature system was supposed to create infinitely varied, lifelike animals that felt organic and alive. In practice, many creatures looked bizarre or broken, with limbs in wrong places, animations that didn’t match their anatomy. The procedural system generated novelty, but not believability. Players felt like the creatures were glitching rather than living. This is a cautionary tale for DOTS-driven systems: emergent behavior is cool, but if it breaks player expectations about how the world should work, it breaks immersion instead of enhancing it. The coral reef project avoids this by keeping fish behavior recognizable—they swim, school, eat, flee—even if the specific moments are unpredictable.

Finally, there’s an optimization tradeoff between simulation fidelity and graphics fidelity. If you’re running five thousand fish simulations, that’s CPU power that’s not available for other things. You might need to reduce draw calls, simplify shaders, lower resolution, or reduce particle effects to keep the game running smoothly. Some players will prefer a beautiful, less-dense world. Others will prefer a living, less-beautiful world. There’s no objectively correct answer, but it’s a real design constraint that DOTS doesn’t eliminate—it just moves the constraint from “can we even simulate this many agents?” to “how do we balance simulation and graphics?”

What Comes Next: Where This AI Tech Is Heading

The immediate future of DOTS AI is GPU acceleration. Currently, DOTS runs primarily on the CPU, which is fast but limited by core count. Experiments are underway to offload DOTS simulations to the GPU, where thousands of parallel cores could handle tens of thousands or hundreds of thousands of agents simultaneously. Imagine a city builder where you’re not simulating five thousand citizens, but fifty thousand. Or a space game where you’re managing a genuinely massive fleet of ships, each with individual AI, without the CPU melting. GPU-accelerated DOTS is still experimental, but studios are actively working on it, and a production-ready form could plausibly arrive within the next 18-24 months.

Machine learning integration is another frontier. Currently, fish behavior is hand-coded rules. But what if fish could learn? What if, over the course of a playthrough, fish adapted their behavior based on player actions? You place a predator in the reef, and fish learn to avoid that area. You introduce a new food source, and fish learn to exploit it. You could train a neural network offline to predict fish behavior, then run batched inference for thousands of fish through Unity Sentis each frame (or every few frames), letting each fish’s behavior be informed by learned patterns rather than hard-coded rules. This is still theoretical for most game studios, but it’s coming. Unity Sentis is the first step toward making this practical, and studios are already prototyping machine learning integration with DOTS systems.

Several confirmed upcoming games are using DOTS AI in meaningful ways. A procedural city builder slated for 2025 is using DOTS for citizen AI and crowd simulation with tens of thousands of NPCs. An RTS in early access is using DOTS to handle unit counts that would have been impossible five years ago, with players commanding armies of thousands of individually-simulated units. A creature-focused game is using DOTS to simulate entire ecosystems with thousands of animals interacting in real time. These aren’t vaporware—they’re in active development, and the studios are openly discussing their DOTS adoption because they want to signal that they’re pushing technical boundaries.

When does this become industry standard? Probably within five years for studios building simulation-heavy games. For narrative-driven games, it’ll take longer because the value proposition is less clear. But for any game that needs to simulate large numbers of autonomous agents—city builders, ecosystem games, large-scale strategy games, crowd sims—DOTS will become the default approach. The performance gains are too significant to ignore, and the learning curve is flattening as more tutorials and sample projects become available.

The open question is whether hybrid systems will become the norm. Pure DOTS-driven emergent systems are elegant but sometimes unpredictable. Pure scripted systems are controllable but expensive. The future probably belongs to hybrid approaches: scripted narrative moments and key gameplay beats, emergent AI for the rest of the world. This adds complexity, but it lets studios get the best of both worlds—the authorial control they need for story, and the living, responsive world players crave. Studios like the coral reef team are already exploring this, mixing hand-authored ecosystem events (seasonal migrations, breeding cycles) with emergent player-driven changes.
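One way to picture a hybrid system is a small schedule of authored events layered on top of emergent steering. The sketch below is an illustration under assumed names and values (`ScriptedEvent`, the event times, the bias vector), not a description of how the coral reef team implemented it.

```python
from dataclasses import dataclass


@dataclass
class ScriptedEvent:
    """A hand-authored event that nudges all fish while it is active."""
    name: str
    start: float   # game time in seconds when the event begins
    end: float     # game time in seconds when the event stops
    bias: tuple    # (x, y) steering bias applied during the event


def active_bias(events, t):
    """Sum the steering bias of every scripted event active at time t."""
    bx = by = 0.0
    for e in events:
        if e.start <= t < e.end:
            bx += e.bias[0]
            by += e.bias[1]
    return (bx, by)


def hybrid_steer(emergent, events, t):
    """Emergent steering plus any authored bias; events nudge, they don't override."""
    bx, by = active_bias(events, t)
    return (emergent[0] + bx, emergent[1] + by)


events = [ScriptedEvent("seasonal_migration", 60.0, 120.0, (1.0, 0.0))]
before = hybrid_steer((0.2, -0.1), events, 30.0)   # event not yet active
during = hybrid_steer((0.2, -0.1), events, 90.0)   # migration pulls fish along +x
```

Adding the authored bias to the emergent vector, instead of replacing it, keeps the simulation’s rules in play during scripted beats: a fish caught in a migration would still flee a predator if that rule outweighs the bias.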

The trajectory is clear: DOTS AI is moving from experimental tech to industry standard, and games built on top of it will feel fundamentally more alive than their predecessors.

Frequently Asked Questions

Does DOTS AI make fish feel more realistic or just more unpredictable?

Both. DOTS AI creates fish behavior that follows recognizable ecological rules—they school, hunt, flee from predators—making them feel realistic in how they respond to the world. But individual moments are unpredictable because they emerge from thousands of simultaneous decisions rather than a script. In the coral reef city builder, fish feel more realistic because their behavior adapts to your actions in ways that make sense ecologically, even if you can’t predict exactly when a school will investigate your new structure. The early access reviews confirmed this: players noted that the fish felt “genuinely alive” compared to scripted behavior, but some found the unpredictability frustrating when they wanted to trigger specific events.

Which games are actually using Unity DOTS AI simulation right now?

The coral reef city builder is the most documented example, with a playable prototype shipped in early access in 2024. Several confirmed projects in active development include a procedural city builder using DOTS for citizen AI (targeting a 2025 release), an RTS in early access using DOTS for unit simulation with thousands of individually simulated units, and an ecosystem-focused game using DOTS to manage thousands of animals. More studios are experimenting with DOTS, but public announcements are still relatively rare, and most are keeping it quiet until they’re ready for launch. The procedural city builder and RTS are the closest to release and will likely be the first major titles to showcase DOTS AI at scale.

Will AI-driven ecosystems replace hand-designed NPC behavior in all games?

No, but they’ll become the standard for simulation-heavy games. Narrative-driven games will likely stick with hand-authored behavior for main characters because designers need precise control over story beats—you can’t have your main quest NPC wander off and get killed by an emergent predator. But for background NPCs, crowd AI, and ecosystem simulation, emergent systems will dominate because they’re more efficient and feel more alive. The hybrid approach—scripted key moments, emergent AI for everything else—is probably the future for most games. The coral reef city builder uses this hybrid model, mixing hand-authored seasonal events with emergent player-driven ecosystem changes.