Fallout’s Creator Is Optimistic About AI: Hype vs. Reality


Brian Daley, the legendary designer behind the original Fallout series, recently went on record expressing optimism about generative AI’s potential to revolutionize game development. His comments sparked a wave of industry enthusiasm, the kind that tech evangelists love to amplify on quarterly earnings calls. But optimism and reality are often two different games entirely. Daley’s vision of AI-accelerated creativity is seductive, yet the current state of generative AI in gaming is much messier than the hype suggests. Between Take-Two’s quiet AI division layoffs, Capcom’s rejection of generative AI in shipped games, and a growing chorus of artists and developers sounding alarm bells, the industry is at an inflection point that demands skepticism as much as hope.

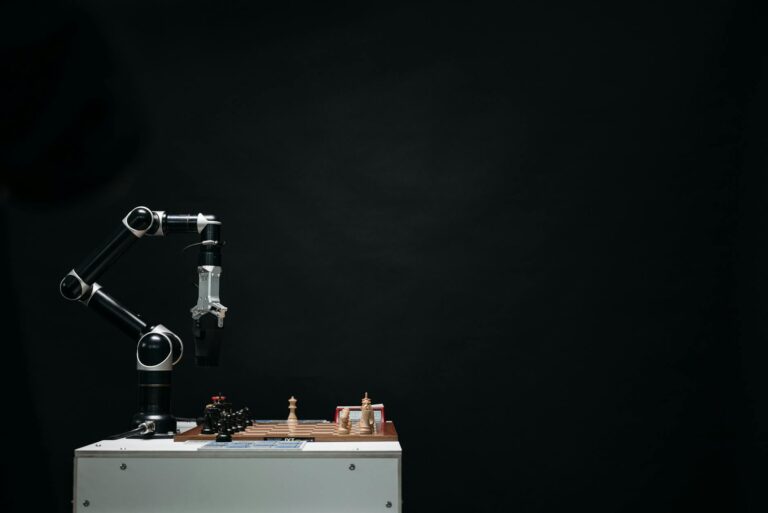

The gaming industry’s relationship with AI has always been complicated. We’ve had procedural generation, NPC behavior trees, and adaptive difficulty systems for decades. But generative AI, the kind powered by large language models and diffusion models trained on massive datasets, represents something different. Where those older systems follow rules a designer wrote by hand, generative models learn patterns from data and produce new content from a prompt, whether that’s dialogue, textures, code, or entire game mechanics. And that’s exactly what has everyone both excited and terrified.

Under the Hood: How Generative AI Actually Works in Games

Let’s cut through the marketing speak and talk about what’s actually happening when developers deploy generative AI tools. At the core, there are three main technologies at play:

Large Language Models (LLMs) like GPT-4 and specialized variants are being used to generate dialogue, narrative content, and even code for game development pipelines. These models are trained on enormous text corpora, learning statistical patterns about how language works. When you prompt an LLM to write an NPC’s response in a game, it repeatedly assigns a probability to every possible next token (a word or word fragment) given the text so far, picks one, and appends it. It’s not thinking in any human sense; it’s pattern-matching at scale. The result can be impressive and contextually relevant, but it can also be generic, repetitive, or wildly off-tone if the prompt isn’t carefully engineered.
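
To make the token-prediction step concrete, here is a minimal sketch in Python. It is not how any particular model is implemented: the candidate tokens and their scores (`toy_logits`) are invented for illustration, and a real LLM would compute scores over tens of thousands of tokens with a neural network. The sketch only shows how raw scores become probabilities, how the most likely token is picked, and how a temperature setting flattens the distribution.

```python
import math

def softmax(logits, temperature=1.0):
    """Turn raw scores into a probability distribution over tokens."""
    scaled = [score / temperature for score in logits.values()]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return {tok: e / total for tok, e in zip(logits, exps)}

# Hypothetical scores a model might assign to candidate next tokens
# after the prompt "Welcome to the wasteland,". The values are made up.
toy_logits = {" stranger": 2.0, " traveler": 1.5, " friend": 0.5, " banana": -3.0}

probs = softmax(toy_logits)
greedy_token = max(probs, key=probs.get)         # the single most likely token
flatter = softmax(toy_logits, temperature=2.0)   # higher temperature spreads probability out
```

Always taking `greedy_token` is one reason generated dialogue can feel repetitive. Raising the temperature adds variety, but it also gives unlikely tokens such as the hypothetical " banana" a better chance of appearing, which is one way output drifts off-tone.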

Diffusion Models are the technology behind tools like Midjourney and Stable Diffusion, which are being explored for asset generation: textures, concept art, and, through related techniques, 3D models. During training, noise is gradually added to images and the model learns to reverse that corruption. At generation time it starts from random noise and denoises it step by step into coherent visual content, guided by a text description. It sounds elegant, but in practice the output tends to gravitate toward the statistical center of the training data, blending sources in ways that can feel uncanny or legally problematic (given the copyright debates surrounding training data).
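
The denoising loop can be sketched in a few lines of Python, with heavy simplifications that should be read as assumptions. There is no trained network here: `ideal_noise_estimate` is a hypothetical stand-in that returns the exact noise for a "dataset" containing a single three-pixel image, and the noise schedule `alpha_bar` is an arbitrary cosine curve. The update itself follows the deterministic DDIM-style form, so the sketch shows the mechanics of walking from noise to an image rather than anything about output quality.

```python
import math
import random

def alpha_bar(t, steps):
    """Fraction of signal remaining at step t (1.0 = clean, near 0 = mostly noise)."""
    return math.cos(0.5 * math.pi * t / (steps + 1)) ** 2

def ideal_noise_estimate(x_t, a_bar, target):
    """Stand-in for the trained network: the exact noise when all data equals `target`."""
    return (x_t - math.sqrt(a_bar) * target) / math.sqrt(1.0 - a_bar)

def denoise(target, steps=50, seed=0):
    """Start from random noise and apply deterministic DDIM-style updates."""
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in target]  # pure noise, one value per pixel
    for t in range(steps, 0, -1):
        a_t, a_prev = alpha_bar(t, steps), alpha_bar(t - 1, steps)
        new_x = []
        for x_i, target_i in zip(x, target):
            eps = ideal_noise_estimate(x_i, a_t, target_i)
            # Estimate the clean pixel, then re-noise it to the next, lower noise level.
            x0_hat = (x_i - math.sqrt(1.0 - a_t) * eps) / math.sqrt(a_t)
            new_x.append(math.sqrt(a_prev) * x0_hat + math.sqrt(1.0 - a_prev) * eps)
        x = new_x
    return x

result = denoise([0.2, 0.8, 0.5])  # hypothetical 3-pixel grayscale "image"
```

With a perfect noise estimate and one training image, the loop simply recovers that image. A real model only approximates the noise, and does so across millions of images at once, which is where both the blending and the copyright questions come from.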

Procedural Generation with Machine Learning combines traditional algorithmic generation with learned patterns. Rather than hand-coded rules for dungeon layout or NPC behavior, AI models can learn from examples and generate novel variations. This is where tools like NVIDIA ACE (Avatar Cloud Engine) come in—enabling dynamic NPC interactions powered by neural networks.
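
As a toy illustration of learning from examples instead of hand-coding rules, the Python sketch below records which room types follow which in a few example dungeon paths, then samples a new path from those learned transitions. The room names, the example paths, and the helper functions are all hypothetical. A simple transition table like this is far cruder than the neural approaches the paragraph refers to, but the division of labor is the same: examples in, variations out.

```python
import random
from collections import defaultdict

# Hypothetical hand-authored dungeon paths used as training examples.
EXAMPLE_PATHS = [
    ["entrance", "corridor", "guard_room", "corridor", "treasury", "exit"],
    ["entrance", "corridor", "library", "corridor", "shrine", "exit"],
    ["entrance", "guard_room", "corridor", "shrine", "treasury", "exit"],
]

def learn_transitions(paths):
    """Record which room types follow which in the examples."""
    followers = defaultdict(list)
    for path in paths:
        for current, nxt in zip(path, path[1:]):
            followers[current].append(nxt)
    return followers

def generate_path(followers, rng, max_rooms=12):
    """Walk the learned transitions from the entrance until an exit or the room cap."""
    path = ["entrance"]
    while path[-1] != "exit" and len(path) < max_rooms:
        path.append(rng.choice(followers[path[-1]]))
    return path

followers = learn_transitions(EXAMPLE_PATHS)
new_path = generate_path(followers, random.Random(7))  # seeded for repeatability
```

Every step in `new_path` is a transition that appeared somewhere in the examples, so the generator can recombine but never invent. It also has no notion of pacing or purpose, which is why a designer still has to review what comes out.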

The promise is clear: developers can generate assets, dialogue, and systems faster, cheaper, and at greater scale. The reality is messier. These tools require careful prompt engineering, extensive iteration, and human refinement. A generated asset isn’t done when the AI outputs it—that’s just the starting point.

The Development Workflow Revolution (That Isn’t Really Happening Yet)

Here’s where the industry optimism gets interesting. Proponents like Daley argue that generative AI will democratize game development, allowing smaller teams to compete with AAA studios by automating grunt work like asset creation, dialogue writing, and even basic programming tasks.

In theory, this is compelling. An indie developer could describe a dungeon environment in text, and AI generates the layout and assets. An artist could sketch a character concept, and AI generates variations. A programmer could describe game logic, and AI writes the boilerplate code. This would theoretically free up human talent for higher-level creative decisions.

But the industry data tells a different story.

Roblox announced deployment of agentic AI to automate game development tasks, which sounds revolutionary until you realize that automation without quality control is just expensive chaos. Take-Two, the parent company of Rockstar Games and 2K, quietly laid off the head of its AI division along with an unspecified number of staff—a signal that the grand vision of AI-powered development pipelines hasn’t delivered ROI. Meanwhile, Capcom, one of the industry’s most technically sophisticated studios, explicitly rejected using generative AI in actual games while still exploring it for internal development efficiency. That’s a telling distinction: AI for workflow acceleration is acceptable; AI as a consumer-facing feature is viewed with suspicion.

The real story emerging is that generative AI is most useful not as a replacement for human creativity, but as an acceleration layer for tedious, repetitive tasks. Concept art iteration, placeholder asset generation, dialogue variation—these are areas where AI can genuinely reduce time-to-market. But it requires human oversight at every stage. You still need artists who understand composition and storytelling. You still need writers who can ensure narrative coherence. You still need programmers who understand systems design. What you don’t need—or so the theory goes—are people grinding away on the 50th iteration of a texture variation or writing generic NPC barks.

The Reality Check: Is This Just Hype? Will AI Replace Developers?

The pessimistic take has become increasingly common, and for good reason. Here’s what we’re actually seeing in the wild:

Quality Control Issues: Generative AI outputs gravitate toward the statistical center of their training data. The models are trained to produce what’s most likely, which means that without strong direction they tend toward the generic. This works fine for procedural filler content, but it’s antithetical to the distinctive visual and narrative styles that define memorable games. A game that looks like it was generated by averaging every game ever made is, by definition, not distinctive.

Copyright and Legal Chaos: The training data for most generative models includes copyrighted material, leading to lawsuits and regulatory uncertainty. If an AI model is trained on millions of copyrighted artworks and game assets, and then produces something that’s a statistical blend of that data, who owns the output? This remains unsettled legally, and it’s creating risk for studios that rely on generative AI.

The Efficiency Paradox: While AI can generate content quickly, integrating that content into a cohesive game requires significant human work. You’re not saving development time if you’re spending the same amount of time iterating, refining, and fixing AI-generated assets. Some studios are finding that the overhead of prompt engineering, quality control, and integration actually increases project timelines rather than reducing them.
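
The arithmetic behind this paradox is easy to sketch. The Python function below compares hand-making a batch of assets with generating them and then reviewing and reworking the output. Every number is hypothetical and chosen only to show that the same tool can land on either side of break-even; none of it is data from a real studio.

```python
def net_hours_saved(assets, manual_hours, generate_hours,
                    review_hours, rework_rate, rework_hours):
    """Hours saved (positive) or lost (negative) by generating assets instead of hand-making them."""
    manual_total = assets * manual_hours
    ai_total = assets * (generate_hours + review_hours + rework_rate * rework_hours)
    return manual_total - ai_total

# All figures are hypothetical, chosen only to show both outcomes.
light_cleanup = net_hours_saved(100, manual_hours=4.0, generate_hours=0.25,
                                review_hours=0.5, rework_rate=0.3, rework_hours=2.0)
heavy_cleanup = net_hours_saved(100, manual_hours=4.0, generate_hours=0.25,
                                review_hours=1.0, rework_rate=0.9, rework_hours=3.5)
```

Under the first set of assumptions the team comes out roughly 265 hours ahead; under the second it loses about 40. The deciding variables are the review time and the share of assets that need rework, not the generation speed that tends to headline the pitch.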

Market Saturation: If every indie developer has access to the same generative AI tools, the market becomes flooded with superficially similar content. The democratization of tools doesn’t democratize success—it just increases competition from quantity rather than quality.

The Asia Angle: Interestingly, Asia’s gaming market has shown more openness to AI-generated content than the West. Seoul’s city government is exploring AI-generated landmarks in video games; regional studios are more experimental with the technology. This suggests a cultural and regulatory divide that could reshape the global gaming landscape. Western players and developers remain more skeptical, viewing AI as a potential threat to authenticity and human creativity.

Will AI replace developers and artists? Almost certainly not—but it will reshape roles. The demand for artists who can prompt-engineer diffusion models or refine AI-generated assets may rise, while demand for rote asset production falls. The programmer who can design intelligent systems using AI tools will be more valuable than the one grinding out boilerplate code. This is a shift, not an apocalypse, but it’s a shift that creates real disruption in the industry.

The Uncomfortable Truth About Optimization and Creativity

Here’s where I diverge sharply from Daley’s optimism: games are fundamentally about human expression. The most memorable games—from Outer Wilds to Disco Elysium to the original Fallout series—succeed because they reflect the specific vision and constraints of their creators. Constraints breed creativity. A small team making a game on a budget makes different, often more interesting decisions than a well-funded team with unlimited resources.

Generative AI is an optimization tool. It reduces constraints. It makes it easier to generate more content faster. But optimization isn’t the same as innovation. A game that uses AI to generate hundreds of hours of procedural content might be technically impressive but emotionally hollow. There’s a reason many acclaimed roguelikes assemble their levels from handcrafted rooms rather than generating everything from scratch: human intention creates meaning.

This doesn’t mean AI has no place in game development. It absolutely does. AI-driven NPC behavior that responds dynamically to player actions can create emergent storytelling moments. AI-assisted tools that accelerate the creative process without replacing creative judgment can be valuable. But there’s a massive gulf between “AI as a tool that amplifies human creativity” and “AI as a replacement for creative decision-making.”

The industry is slowly learning this distinction. Capcom’s explicit rejection of generative AI in consumer-facing games while exploring it for development efficiency is the smart middle ground. You use AI where it genuinely adds value without compromising the human vision at the core of the game.

Looking Forward: The Actual Future of AI in Gaming

Here’s what I think will actually happen, stripped of hype:

In the next 3-5 years, we’ll see increased adoption of AI-assisted development tools—not as magic bullets, but as productivity multipliers. Studios will use AI for texture variation, dialogue generation, code scaffolding, and playtesting simulation. The studios that do this best will be those that treat AI as augmentation, not replacement. Those that try to fully automate development will produce forgettable content and either pivot or fail.

We’ll also see regulatory pressure and copyright litigation reshape how AI training works in the gaming industry. The current Wild West of training on unlicensed data will tighten. This will make generative AI slightly less capable but more legally defensible.

The market will likely bifurcate: AAA studios will use AI for efficiency gains, reducing overall headcount but maintaining creative control. Indie developers will face pressure to adopt AI tools or get left behind competitively, but the most successful indie games will still be those where the human vision is unmistakably present. And smaller studios that can’t afford the infrastructure for AI-driven development might actually find their niche as “human-made” becomes a selling point.

Companies that cut back their AI teams, like Take-Two, aren’t necessarily rejecting AI. A more plausible reading is that they’re rejecting the idea of AI as a standalone department with an autonomous mandate. The future looks more like AI as a distributed toolkit integrated into existing development workflows, not a separate innovation factory.

The Bottom Line

Brian Daley’s optimism about generative AI isn’t unfounded, but it’s incomplete. AI will absolutely change game development. It will accelerate production, enable new types of procedural content, and create opportunities for smaller teams. But it won’t replace human creativity, and the studios that treat it like a silver bullet will regret it.

The real question isn’t whether AI will transform gaming—it will. The question is whether the industry will be smart enough to use it as a tool rather than a substitute for vision. Early signs suggest they’re learning, but slowly.

FAQ: Generative AI in Game Development

How does generative AI actually work in game development?

Generative AI uses machine learning models trained on massive datasets to produce new content based on text prompts or other input. In games, this includes dialogue generation via LLMs, asset creation via diffusion models, procedural content generation via learned patterns, and code generation via specialized models. The output is always probabilistic and requires human refinement—it’s not magic, it’s statistical prediction at scale.

Will AI replace game developers and artists?

Not replace, but reshape. Demand for traditional asset production will decrease, while demand for people who can effectively use AI tools, manage quality control, and maintain creative vision will increase. It’s a shift, not an extinction.

Is generative AI available for indie developers?

Yes. Tools like ChatGPT, Midjourney, Stable Diffusion, and various AI-assisted game development platforms are accessible to indie teams. The barrier is more about knowledge and integration than access. However, relying on AI without clear creative direction often produces mediocre results.

Does using AI-generated content require internet connectivity?

Depends on the tool. Cloud-based services like ChatGPT and Midjourney require internet. Open-weight models like Stable Diffusion can run offline on local hardware, though they need a capable GPU. Teams can mix both approaches.

Why did Take-Two lay off its AI division if AI is the future?

The most plausible reading is that standalone AI divisions with autonomous mandates struggle to show ROI. The future of AI in gaming isn’t a separate department; it’s distributed AI tools integrated into existing development workflows. On that reading, Take-Two didn’t reject AI; it rejected the organizational structure.

Are there games that have successfully used generative AI?

Not many yet that are purely AI-driven and critically successful. Most successful implementations use AI as an acceleration layer for specific tasks, not as the core creative engine. This is still an emerging area, and the best practices are still being established.

What’s the copyright situation with AI-generated game content?

Legally unsettled. If an AI model was trained on copyrighted material and produces output, who owns that output? Current litigation is establishing precedent, but expect tighter regulations around training data in the coming years. This will likely make generative AI slightly less capable but more defensible.