AI Gaming & Data Drift: Why On-Device Inference is a CISO Risk

Disclosure: As an Amazon Associate, Bytee earns from qualifying purchases.

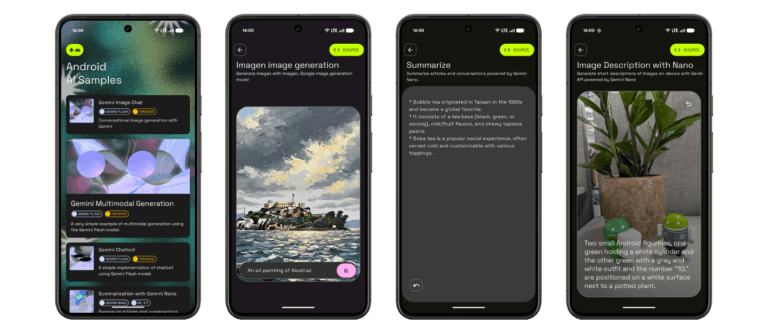

When Rocksteady Studios shipped Batman: Arkham Knight, the AI systems that powered enemy behavior ran on-device: no cloud dependency, no network latency, pure client-side computation. Fast forward to today, and that same approach is standard practice across the industry. But here’s the problem: those locally running models are degrading, not because their code changes, but because the world around them does.

Data drift is silently undermining the accuracy and security of your AI implementations, and most teams won’t discover it until something breaks in production.

What Is Data Drift and Why Should You Care?

Data drift happens when the real-world data your AI model encounters diverges from the training data it learned on. In gaming, this manifests in unexpected ways:

- An NPC decision-making model trained on typical player behavior starts failing against exploit-heavy playstyles

- A procedural generation system trained on balanced level layouts produces increasingly broken geometry as player stats evolve

- An adaptive difficulty algorithm trained on specific hardware configurations struggles on edge-case devices

- Security classifiers meant to detect cheating miss new exploit patterns because they’ve never seen them before

- Player behavior prediction models become unreliable as the meta shifts and players discover new strategies
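A common way to put a number on drift is the Population Stability Index (PSI), which compares how a feature’s values fall into bins at training time versus in live play. The Python below is a minimal sketch, not a production detector: the bin edges, the small floor for empty bins, and the “seconds in cover” feature are hypothetical. Rules of thumb for PSI vary (0.1 and 0.25 are common cutoffs), so treat any alert threshold as something to tune per model.

```python
import math
from bisect import bisect_right

def histogram(values, edges):
    """Fraction of values in each bin defined by sorted interior bin edges."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [c / len(values) for c in counts]

def psi(train_values, live_values, edges, eps=1e-4):
    """Population Stability Index between training and live samples.

    eps floors empty bins so the log term stays finite (an assumption;
    other smoothing schemes are also used).
    """
    expected = histogram(train_values, edges)
    actual = histogram(live_values, edges)
    score = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)
        score += (a - e) * math.log(a / e)
    return score

# Hypothetical feature: seconds a player spends in cover per engagement.
edges = [1.0, 2.0, 4.0, 8.0]
train = [0.5, 1.5, 1.8, 2.5, 3.0, 3.5, 5.0, 6.0]
live_same = [0.6, 1.4, 1.9, 2.6, 3.1, 3.4, 5.5, 6.5]
live_shifted = [9.0, 10.0, 12.0, 8.5, 9.5, 11.0, 7.5, 13.0]
print(psi(train, live_same, edges))     # 0.0: identical bin proportions
print(psi(train, live_shifted, edges))  # large positive value: players changed behavior
```

Binning keeps the client-side cost small: only per-bin counts need to leave the device, not raw feature values.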

The issue intensifies when these models live on-device. Cloud-based systems can be monitored and retrained continuously, then updated without touching the client. A local model is frozen at the version that shipped until the next client update replaces it. In between, it can’t learn and it can’t adapt. It can only fall further behind.

The Five Signs Data Drift Is Already Undermining Your Security Models

1. Your NPC Behavior Becomes Predictable or Exploitable

When The Last of Us Part II shipped, Naughty Dog’s enemy AI was praised for its unpredictability: enemies reacted to player tactics in real time. But imagine a learned behavior model that never updated. Over weeks of play, players would map out its response patterns and find exploits: angles where it always makes the same decision, situations its training data never covered.

That’s data drift in action, and security models fail the same way: a classifier stops recognizing new exploit patterns because they don’t match the distribution of threats it was trained on.

2. Your Adaptive Difficulty System Stops Adapting

Some games skip explicit difficulty settings and instead rely on dynamic difficulty adjustment: systems that read player behavior and tune the challenge accordingly. A model trained on an “average skill distribution” works well until players discover sequence breaks, use equipment in unintended ways, or optimize strategies the training data never anticipated.

When data drift occurs, your difficulty model stops recognizing novel player behavior. It maps the unfamiliar playstyle onto the nearest pattern it knows and may conclude the player is performing normally. The system can’t adapt because it fundamentally doesn’t understand what it’s seeing.
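A cheap guard against this failure mode is to check, before trusting a prediction, how far the current input sits from the training data. This hypothetical Python sketch stores per-feature means and standard deviations from training and flags inputs whose largest absolute z-score exceeds a threshold. The feature names and the threshold of 4 are assumptions, and a per-feature z-score ignores correlations between features that a fuller method such as Mahalanobis distance would capture.

```python
from dataclasses import dataclass
from statistics import mean, pstdev

@dataclass
class FeatureStats:
    means: list
    stds: list

def fit_stats(training_rows):
    """Per-feature mean and population std dev from training feature vectors."""
    columns = list(zip(*training_rows))
    means = [mean(col) for col in columns]
    # Floor the std dev so a constant feature doesn't cause division by zero.
    stds = [max(pstdev(col), 1e-6) for col in columns]
    return FeatureStats(means, stds)

def max_abs_z(stats, row):
    return max(abs(x - m) / s for x, m, s in zip(row, stats.means, stats.stds))

def is_out_of_distribution(stats, row, threshold=4.0):
    """True if any feature is more than `threshold` std devs from its training mean."""
    return max_abs_z(stats, row) > threshold

# Hypothetical features: [deaths per hour, avg area clear time (min), dodge rate]
training = [
    [4.0, 12.0, 0.30],
    [5.0, 10.0, 0.35],
    [3.0, 14.0, 0.25],
    [6.0, 11.0, 0.40],
]
stats = fit_stats(training)
print(is_out_of_distribution(stats, [4.5, 11.5, 0.33]))  # False: resembles training data
print(is_out_of_distribution(stats, [0.2, 2.0, 0.95]))   # True: e.g. a sequence-breaking run
```

When the check fires, the difficulty system can hold its current setting and log the event instead of acting on a prediction it has no basis for.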

3. Your Procedural Generation Creates Broken Geometry or Unplayable Spaces

Procedural generation at the scale of No Man’s Sky is built on assumptions about what valid terrain, resource placement, and layouts look like, and generators that use learned models are trained on examples of “valid level configurations.” As players progress, they hit edge cases: unexpected terrain interactions, resource placement that violates those assumptions, geometry that clips through itself. These aren’t random bugs; they’re signs that your generative model is encountering input distributions it was never trained to handle.

Data drift means the system confidently generates broken content because it has no basis for recognizing that its current inputs (seed values, player stats, progression state) are outside the range it learned from.
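One mitigation is to record the range of every input the generator saw during training and refuse to trust it outside that envelope. In this hypothetical Python sketch, `generate` stands in for a learned generator and `fallback_layout` for hand-authored content; both functions and the input names are assumptions for illustration.

```python
from typing import Callable, Dict, Tuple

Range = Tuple[float, float]

def training_envelope(samples):
    """Min/max of each named input across training samples (a list of dicts)."""
    keys = samples[0].keys()
    return {k: (min(s[k] for s in samples), max(s[k] for s in samples)) for k in keys}

def out_of_range(envelope: Dict[str, Range], inputs: Dict[str, float]):
    """Names of inputs that fall outside what the generator was trained on."""
    return [k for k, (lo, hi) in envelope.items() if not lo <= inputs[k] <= hi]

def guarded_generate(envelope, inputs, generate: Callable, fallback_layout: Callable):
    """Use the learned generator only inside its training envelope."""
    bad = out_of_range(envelope, inputs)
    if bad:
        # Outside the training distribution: don't trust the model's output.
        return fallback_layout(inputs), bad
    return generate(inputs), []

# Hypothetical inputs: player level and number of resource nodes requested.
envelope = training_envelope([
    {"player_level": 1, "resource_nodes": 10},
    {"player_level": 40, "resource_nodes": 60},
    {"player_level": 20, "resource_nodes": 35},
])

def gen(inputs):
    return "learned-layout"        # placeholder for the learned generator

def fallback(inputs):
    return "hand-authored-layout"  # placeholder for safe, hand-made content

print(guarded_generate(envelope, {"player_level": 25, "resource_nodes": 30}, gen, fallback))
# ('learned-layout', [])
print(guarded_generate(envelope, {"player_level": 95, "resource_nodes": 30}, gen, fallback))
# ('hand-authored-layout', ['player_level'])
```

Returning the offending input names alongside the layout gives you something concrete to log, which turns silent drift into a measurable signal.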

4. Your Anti-Cheat System Misses New Exploit Patterns

Multiplayer games like Valorant and Counter-Strike 2 rely on a mix of client-side and server-side detection to catch cheaters. Any learned detector that runs client-side, on the player’s device, is particularly vulnerable to data drift. It’s trained on known exploit signatures, so the moment a cheat developer invents a new attack vector, it can fail to recognize it.

Why? Because the new exploit isn’t in the training data. No model covers “all possible threats,” and as cheat tooling evolves away from what the model saw, its coverage turns into a false sense of security. You think you’re protected; you’re actually blind.
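A classifier trained only on known cheat signatures still forces every input into one of its known classes, so a novel exploit can come out labeled “clean.” One common mitigation, sketched here in Python with a hypothetical label set and an assumed confidence threshold, is to treat a low top-class probability as “unknown, escalate for review” instead of trusting the label. This is a heuristic, not a guarantee: softmax scores can be confidently wrong on truly novel inputs.

```python
import math

CLASSES = ["clean", "aimbot", "wallhack"]  # hypothetical label set

def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def classify_with_reject(logits, min_confidence=0.7):
    """Return (label, confidence); label is 'unknown' when the model isn't sure."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    if probs[best] < min_confidence:
        return "unknown", probs[best]
    return CLASSES[best], probs[best]

print(classify_with_reject([4.0, 0.5, 0.2]))  # confidently 'clean'
print(classify_with_reject([1.0, 0.9, 0.8]))  # ambiguous, so 'unknown'
```

Routing “unknown” results to server-side review rather than silently passing them is what keeps the reject option from becoming another blind spot.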

5. Your Player Behavior Predictions Become Wildly Inaccurate

Games that use AI to predict what players will do next—whether for matchmaking, content recommendation, or monetization—rely on behavior models trained on historical data. But players evolve. Metas shift. New content changes what’s possible.

When Fortnite releases a new weapon, that weapon isn’t in the training data, so your behavior prediction model can’t account for it. Players who adopt it will appear as outliers, and your system won’t know whether they’re skilled players adapting, compromised accounts, or something entirely unexpected. Data drift means your model is always one patch behind reality.
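Prediction models have one advantage over classifiers like anti-cheat: the future eventually arrives, so you can compare what the model predicted with what players actually did. The hypothetical Python sketch below keeps a rolling window of absolute errors and flags drift when the recent mean error exceeds the error measured at launch by some margin. The window size, the 1.5x tolerance, the baseline value, and the example numbers (think predicted versus observed win rate) are all assumptions.

```python
from collections import deque

class ErrorDriftMonitor:
    """Flags drift when recent prediction error rises well above a launch baseline."""

    def __init__(self, baseline_mae, window=500, tolerance=1.5):
        self.baseline_mae = baseline_mae  # mean absolute error measured at ship time
        self.tolerance = tolerance        # alert when recent MAE > tolerance * baseline
        self.errors = deque(maxlen=window)

    def record(self, predicted, actual):
        self.errors.append(abs(predicted - actual))

    def recent_mae(self):
        return sum(self.errors) / len(self.errors) if self.errors else 0.0

    def drifting(self):
        # Require a full window so a handful of outliers can't trigger an alert.
        full = len(self.errors) == self.errors.maxlen
        return full and self.recent_mae() > self.tolerance * self.baseline_mae

monitor = ErrorDriftMonitor(baseline_mae=0.1, window=4)
for predicted, actual in [(0.6, 0.55), (0.4, 0.5), (0.7, 0.65), (0.5, 0.45)]:
    monitor.record(predicted, actual)
print(monitor.drifting())  # False: errors near baseline
for predicted, actual in [(0.6, 0.1), (0.4, 0.9), (0.7, 0.2), (0.5, 0.95)]:
    monitor.record(predicted, actual)  # e.g. a new weapon changes outcomes
print(monitor.drifting())  # True
```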

Why On-Device Inference Makes Data Drift Invisible

The real danger of local AI isn’t the technology itself—it’s the monitoring blind spot. When inference happens on-device, you lose observability.

Cloud-based AI systems generate telemetry. You can see:

- What inputs the model receives

- What predictions it makes

- How often predictions diverge from expected outcomes

- When model confidence drops below safe thresholds

Local models? They run in silence. Your developers ship an on-device NPC decision-maker, and it executes millions of times without ever reporting back. If data drift is occurring—if the model is gradually becoming less accurate—you won’t know until players report weird behavior, exploits go viral, or your security audit flags unexpected patterns.

By then, the damage is done.
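Closing that blind spot does not require streaming every inference. A hypothetical Python sketch of the idea: the client records a compact event (model version, prediction, confidence, and a coarse input summary) for a small random sample of inferences, always keeps low-confidence ones, and batches them for upload. The field names, the 1% sample rate, and the confidence floor are assumptions for illustration, not any particular telemetry SDK.

```python
import json
import random
import time
from dataclasses import dataclass, asdict, field

@dataclass
class InferenceEvent:
    model_version: str
    prediction: str
    confidence: float
    input_summary: dict  # coarse, pre-aggregated features only
    timestamp: float = field(default_factory=time.time)

class TelemetryBuffer:
    """Samples inference events on-device and batches them for upload."""

    def __init__(self, sample_rate=0.01, confidence_floor=0.5, rng=None):
        self.sample_rate = sample_rate
        self.confidence_floor = confidence_floor
        self.rng = rng or random.Random()
        self.pending = []

    def observe(self, event: InferenceEvent):
        # Always keep low-confidence events; sample the rest to limit bandwidth.
        if event.confidence < self.confidence_floor or self.rng.random() < self.sample_rate:
            self.pending.append(event)

    def flush(self):
        """Serialize pending events for upload (transport is out of scope here)."""
        payload = json.dumps([asdict(e) for e in self.pending])
        self.pending.clear()
        return payload

buf = TelemetryBuffer(sample_rate=0.0)  # sampling off to make the demo deterministic
buf.observe(InferenceEvent("npc-v3", "flank", 0.92, {"enemies_visible": 2}))
buf.observe(InferenceEvent("npc-v3", "hold", 0.31, {"enemies_visible": 7}))
print(buf.flush())  # only the low-confidence "hold" event is included
```

Tagging every event with the model version is what later lets you tell drift in one release apart from a bug introduced by another.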

The Architecture Problem: Credentials and Untrusted Code

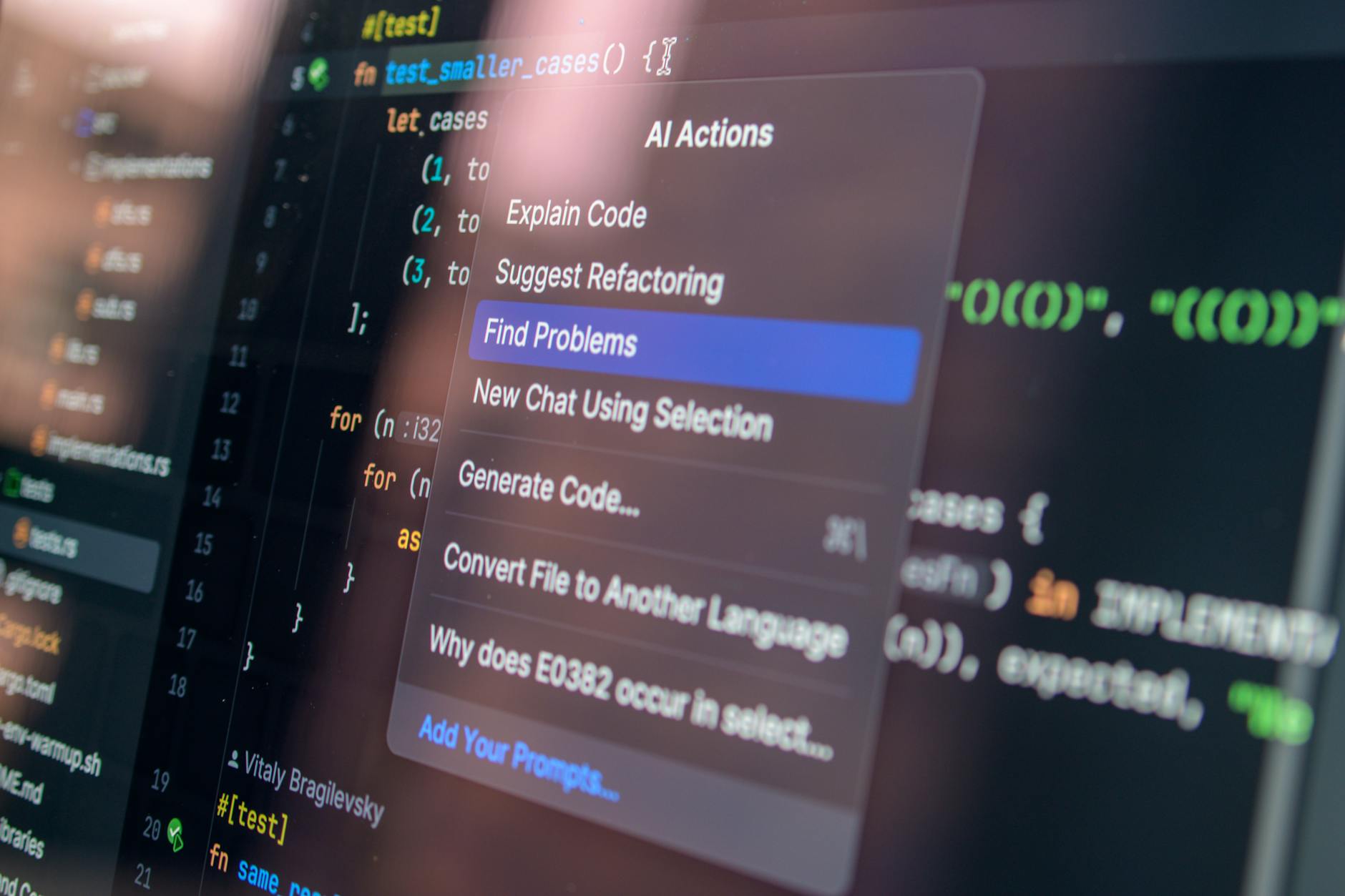

Here’s where it gets worse: those on-device AI models often need credentials. They need API keys to verify licenses, query backend systems, or authenticate updates. And those credentials live in the same memory space as untrusted code.

A compromised NPC behavior model could leak authentication tokens. A poisoned procedural generation system could be used as a vector to exfiltrate player data. Your AI isn’t just degrading—it’s becoming a security liability.

New architectural approaches are emerging to contain the blast radius:

- Sandboxed inference: Run local models in isolated memory spaces, preventing compromised AI from accessing credentials or system resources

- Attestation-based validation: Have the device periodically prove to your servers that the on-device model hasn’t been tampered with, and pair that proof with telemetry showing the model is still performing within expected accuracy bounds

- Federated monitoring: Collect anonymized telemetry about model performance without exposing raw predictions or player behavior, giving you visibility into data drift without privacy violations
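Full attestation depends on platform support (hardware-backed keys, signed measurements, a verifier service), but its core step is a measurement: hash the model the device is actually running and compare it with the hash of the version you shipped. The Python sketch below shows only that measurement and comparison; the manifest and version names are hypothetical, and a check that runs entirely on an untrusted client can itself be tampered with, which is why real attestation has the measurement signed by trusted hardware.

```python
import hashlib
import hmac

def model_digest(model_bytes: bytes) -> str:
    """SHA-256 digest of a serialized model file."""
    return hashlib.sha256(model_bytes).hexdigest()

# Hypothetical release manifest built at ship time: model version -> expected digest.
# The placeholder bytes stand in for a real serialized model file.
SHIPPED = {"npc-v3": model_digest(b"npc-v3 weights (placeholder)")}

def verify_model(version: str, model_bytes: bytes) -> bool:
    expected = SHIPPED.get(version)
    if expected is None:
        return False  # unknown version: treat as untrusted
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected, model_digest(model_bytes))

print(verify_model("npc-v3", b"npc-v3 weights (placeholder)"))  # True
print(verify_model("npc-v3", b"patched weights"))               # False
```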

Real-World Example: How Data Drift Could Break Matchmaking

Imagine a competitive game that uses an on-device skill prediction model to estimate player ability for matchmaking. The model was trained on ranked play from six months ago. It works great at first—fair matches, good engagement.

But then the meta shifts. A new character is released and becomes dominant. Players who learn the new character appear as skill outliers. The model, trained on the old meta, doesn’t understand what it’s seeing and makes wild predictions: some new-character players are rated too high (they’re riding the character’s strength, not their own skill), others too low (they’re genuinely skilled but outside the training distribution).

Matches become unfair. Players complain. Your matchmaking system seems broken.

The model didn’t break—it drifted. And because it ran locally with no monitoring, you had no warning signs. You only discovered the problem when player satisfaction metrics tanked.
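Aggregate accuracy can look acceptable while one segment, here players on the new character, absorbs most of the error. A hypothetical Python sketch of the diagnosis: group skill-prediction errors by a segment key and compare each segment’s mean error with the overall mean. The record fields, the 2x ratio, and the match values are invented for illustration.

```python
from collections import defaultdict

def error_by_segment(records, key):
    """Mean absolute prediction error per segment (e.g. per character played)."""
    sums, counts = defaultdict(float), defaultdict(int)
    for r in records:
        seg = r[key]
        sums[seg] += abs(r["predicted_skill"] - r["observed_skill"])
        counts[seg] += 1
    return {seg: sums[seg] / counts[seg] for seg in sums}

def worst_segments(records, key, ratio=2.0):
    """Segments whose mean error is at least `ratio` times the overall mean error."""
    overall = sum(abs(r["predicted_skill"] - r["observed_skill"]) for r in records) / len(records)
    per_seg = error_by_segment(records, key)
    return sorted(seg for seg, err in per_seg.items() if err >= ratio * overall)

matches = [  # hypothetical post-match records
    {"character": "veteran_a", "predicted_skill": 1500, "observed_skill": 1520},
    {"character": "veteran_b", "predicted_skill": 1400, "observed_skill": 1390},
    {"character": "veteran_a", "predicted_skill": 1600, "observed_skill": 1585},
    {"character": "new_hero", "predicted_skill": 1300, "observed_skill": 1650},
    {"character": "new_hero", "predicted_skill": 1700, "observed_skill": 1450},
]
print(error_by_segment(matches, "character"))
print(worst_segments(matches, "character"))  # ['new_hero']
```

Errors in both directions (over- and under-rating) count toward the segment’s mean, which matches the mixed failure described above.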

What You Should Do Right Now

For Developers:

- Implement telemetry logging for on-device AI models, even if it means shipping extra code for observability

- Track model confidence scores and flag when they drop below expected ranges

- Version your models explicitly and implement rollback mechanisms so a bad model update can be reverted quickly

- Run A/B tests comparing new model versions against old ones to detect performance degradation before shipping to all players

- Design your models with retraining in mind—even if you can’t update on-device, you should be able to ship new model versions regularly
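Confidence tracking and rollback fit together: if the rolling mean confidence of a newly shipped model falls well below what it showed in testing, revert to the previous version. This hypothetical Python sketch keeps an ordered list of shipped versions; the version names, window size, and 0.1 allowed drop are assumptions, and in practice the rollback decision would more likely be made server-side from aggregated telemetry than by local logic alone.

```python
from collections import deque
from statistics import mean

class ModelRegistry:
    """Tracks shipped model versions and rolls back when confidence sags."""

    def __init__(self, window=200, max_drop=0.1):
        self.versions = []  # (version, baseline_confidence), oldest first
        self.window = window
        self.max_drop = max_drop
        self.recent = deque(maxlen=window)

    def ship(self, version, baseline_confidence):
        self.versions.append((version, baseline_confidence))
        self.recent.clear()

    @property
    def active(self):
        return self.versions[-1][0]

    def record_confidence(self, confidence):
        self.recent.append(confidence)
        if self._should_roll_back():
            self.roll_back()

    def _should_roll_back(self):
        if len(self.versions) < 2 or len(self.recent) < self.window:
            return False  # nothing to roll back to, or not enough evidence yet
        baseline = self.versions[-1][1]
        return mean(self.recent) < baseline - self.max_drop

    def roll_back(self):
        self.versions.pop()
        self.recent.clear()

registry = ModelRegistry(window=3, max_drop=0.1)
registry.ship("skill-v1", baseline_confidence=0.80)
registry.ship("skill-v2", baseline_confidence=0.85)
for c in [0.60, 0.55, 0.62]:  # v2 is much less sure of itself in the field
    registry.record_confidence(c)
print(registry.active)  # skill-v1
```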

For Security Teams:

- Audit all on-device AI implementations and catalog where they run and what data they access

- Establish performance baselines for each model and set alerts for drift detection

- Implement sandboxing or process isolation for sensitive AI systems

- Require attestation mechanisms so you can verify on-device models haven’t been tampered with

- Work with game teams to implement federated monitoring—you need visibility without sacrificing privacy
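One way to get that visibility without collecting raw predictions is for each client to report only bucketed counts, such as how many inferences fell into each confidence band, and for the server to sum them. The Python sketch below is a hypothetical illustration: the band edges and the minimum-count suppression rule are assumptions, and suppressing small counts is a simple privacy heuristic, not a formal guarantee such as differential privacy.

```python
from collections import Counter

BUCKETS = [0.25, 0.5, 0.75]  # hypothetical confidence band edges

def bucket_of(confidence):
    """Band index: 0 for < 0.25, 1 for [0.25, 0.5), 2 for [0.5, 0.75), 3 for >= 0.75."""
    return sum(confidence >= edge for edge in BUCKETS)

def client_report(confidences, min_count=5):
    """On-device: counts per band, dropping bands too small to share safely."""
    counts = Counter(bucket_of(c) for c in confidences)
    return {b: n for b, n in counts.items() if n >= min_count}

def aggregate(reports):
    """Server-side: merge per-client band counts into a fleet-wide histogram."""
    total = Counter()
    for report in reports:
        total.update(report)
    return [total[b] for b in range(len(BUCKETS) + 1)]

device_a = [0.9] * 40 + [0.6] * 8 + [0.1] * 2  # the 2 low-confidence values are suppressed
device_b = [0.8] * 30 + [0.3] * 12
print(aggregate([client_report(device_a), client_report(device_b)]))  # [0, 12, 8, 70]
```

A fleet-wide histogram whose mass shifts toward the low-confidence bands over successive patches is a drift signal you can alert on without ever seeing an individual player’s data.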

For Leadership:

- Budget for ongoing AI model maintenance, not just initial development

- Treat on-device AI as infrastructure that requires monitoring and updates, not as a fire-and-forget implementation

- Establish SLOs (Service Level Objectives) for AI model accuracy and set up alerting when they’re violated

- Plan for model retraining cycles—quarterly at minimum for competitive games, more frequently for security-critical systems
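An accuracy SLO can be tracked the same way as an availability SLO: decide what fraction of predictions must be “good” over a window and alert when the remaining error budget runs low. This Python sketch assumes you can label predictions good or bad after the fact (for example, a match whose outcome fell within the predicted range); the 95% target and the 25% alert threshold are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class AccuracySLO:
    target: float  # e.g. 0.95 means 95% of predictions in the window must be good

    def budget_remaining(self, good: int, total: int) -> float:
        """Fraction of the error budget left (1.0 = untouched, < 0 = SLO violated)."""
        if total == 0:
            return 1.0
        allowed_bad = (1 - self.target) * total
        actual_bad = total - good
        if allowed_bad == 0:
            # A 100% target has no budget: any miss is an immediate violation.
            return 1.0 if actual_bad == 0 else float("-inf")
        return 1 - actual_bad / allowed_bad

    def should_alert(self, good: int, total: int, threshold: float = 0.25) -> bool:
        return self.budget_remaining(good, total) < threshold

slo = AccuracySLO(target=0.95)
print(slo.budget_remaining(good=980, total=1000))  # about 0.6: 20 of 50 allowed misses used
print(slo.should_alert(good=940, total=1000))      # True: 60 misses exceeds the 50 allowed
```

Alerting on budget burn rather than on a single accuracy reading gives teams a warning while there is still room to retrain before the SLO is actually violated.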

The Bottom Line

On-device AI inference is here to stay. It’s the right architectural choice for responsive, low-latency gaming experiences. But it creates a monitoring blind spot that most teams haven’t addressed.

Data drift is silent. Your models are degrading right now, and you probably can’t see it. Start building visibility into your on-device AI systems before that blind spot becomes a security incident.

FAQ: On-Device AI and Data Drift in Gaming

Q: Do all game AI systems suffer from data drift?

A: Any AI model that encounters real-world data different from its training data can experience drift. In gaming, this includes NPC behavior systems, procedural generation, difficulty adaptation, anti-cheat detection, and matchmaking algorithms. The key difference is whether you have monitoring to detect it.

Q: How quickly does data drift typically occur in games?

A: It depends on how quickly the game environment changes. In competitive games with frequent balance patches and meta shifts, drift can become noticeable within weeks. In single-player games, drift might take months or longer to surface. Without monitoring, you won’t know until players report issues.

Q: Can I fix data drift without updating my game?

A: Not really. Data drift means your model is encountering situations it wasn’t trained for. The only true fix is retraining on new data and shipping a model update. You can mitigate by adding fallback logic or safety constraints, but that’s treating the symptom, not the disease.

Q: Are cloud-based AI systems immune to data drift?

A: No, but they’re easier to monitor and fix. Cloud systems can generate telemetry showing you when drift is occurring, and you can retrain and redeploy without requiring a game update. On-device systems make the opposite trade-off: no dependency on servers, but no visibility unless you build it in.

Q: Should we move all AI to the cloud to avoid data drift?

A: Not necessarily. Cloud AI has its own problems: latency, server dependency, privacy concerns. The real answer is hybrid: use cloud-based monitoring and retraining for your models, but keep inference on-device for performance. Implement attestation and telemetry so you get the benefits of both approaches.

Q: How do I know if my game’s AI is experiencing data drift right now?

A: Look for:

- NPC behavior that seems increasingly predictable or exploitable

- Difficulty systems that stop adapting to player skill

- Matchmaking that produces increasingly unbalanced games

- Procedural generation that creates more broken or unplayable content over time

- Anti-cheat systems that miss obvious exploits

If you’re seeing these patterns, implement monitoring to confirm. If you don’t have monitoring, that’s your first sign something’s wrong.