Claude, OpenClaw and the New Reality: AI Gaming Chaos

Disclosure: As an Amazon Associate, Bytee earns from qualifying purchases.

The gaming industry is standing at the edge of a tectonic shift. For years, “AI” in gaming was little more than a glorified set of scripted “if-this-then-that” behaviors. The enemies in Halo and the shopkeepers in Skyrim were essentially puppets following predetermined rules. Today, the conversation has moved from scripted logic to autonomous agents. When we talk about Claude, OpenClaw and the new reality, we aren’t just talking about chatbots; we are talking about a fundamental shift in how digital worlds are built, played, and populated.

The Rise of Autonomous Agents: Beyond Scripted NPCs

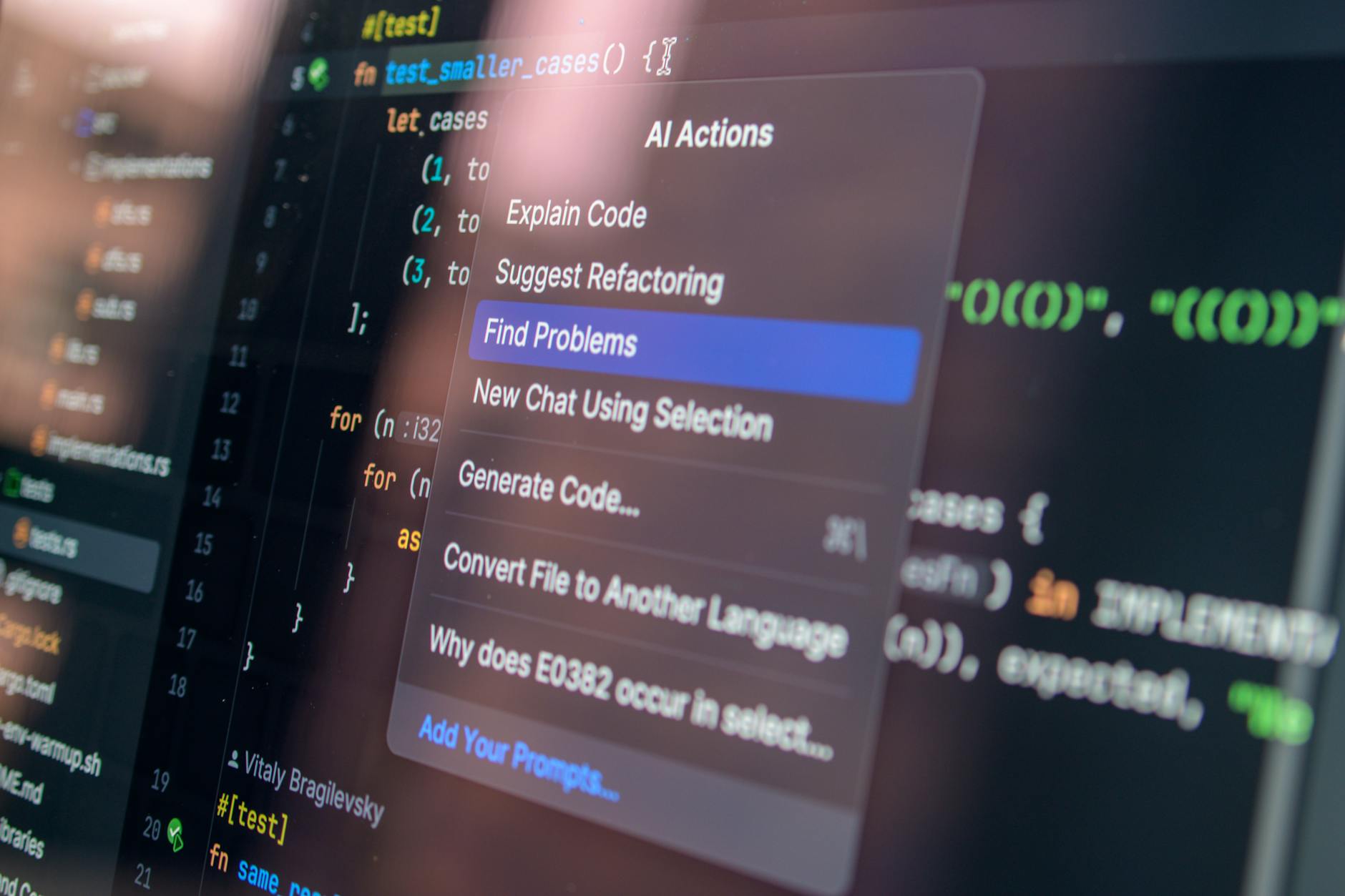

The gaming landscape is being redefined by agents that can “think” on their feet. Recent breakthroughs, such as AI agents that can rewrite their own skill sets without resource-heavy retraining, mean that NPCs could become far less predictable: an agent that can change its own skills is no longer limited to the behaviors its designers tested. Imagine a tactical RPG like Baldur’s Gate 3 in which an enemy doesn’t just consult a table of moves but adapts its combat strategy during the fight based on your party’s builds, effectively “learning” how to counter your playstyle mid-encounter.
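To make the idea of an enemy that counters your playstyle more concrete, here is a deliberately simple Python sketch. It does not use an LLM or self-rewriting skills; it swaps in a plain frequency count of the player’s tactics, and the tactic names, the `COUNTERS` table, and the `AdaptiveEnemy` class are all hypothetical.

```python
from collections import Counter

# Hypothetical mapping from a player tactic to the enemy response that counters it.
COUNTERS = {
    "fire_spell": "cast_fire_resistance",
    "melee_rush": "retreat_and_use_ranged",
    "stealth_attack": "reveal_area",
}


class AdaptiveEnemy:
    """Toy enemy that picks its next move from the player's observed tactics."""

    def __init__(self, default_action: str = "basic_attack") -> None:
        self.default_action = default_action
        self.observed = Counter()  # how often each player tactic has been seen

    def observe(self, player_tactic: str) -> None:
        # Record every tactic the player uses during this encounter.
        self.observed[player_tactic] += 1

    def choose_action(self) -> str:
        # Counter the player's most frequent tactic, if a counter is known for it;
        # otherwise fall back to the default scripted move.
        for tactic, _count in self.observed.most_common():
            if tactic in COUNTERS:
                return COUNTERS[tactic]
        return self.default_action
```

If the player opens with two fire spells and one melee rush, `choose_action()` returns `"cast_fire_resistance"`; if they then switch to repeated melee rushes, the enemy shifts to `"retreat_and_use_ranged"`. A real agent would reason over far richer state, but the loop is the same: observe, update, respond.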

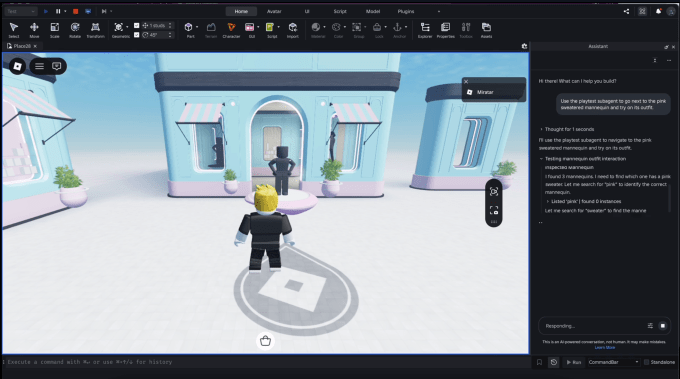

This is where frameworks like OpenClaw come into play. While gamers are familiar with the conversational capabilities of models like Claude, the integration of these models into game engines creates a new level of complexity. We are moving toward a future where developers can offload the “personality” of an NPC to an LLM, while the “logic” of the game world is handled by specialized agents that manage physics, loot drops, and environmental interaction.
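One way to picture this split is a minimal Python sketch in which the game’s deterministic logic decides outcomes first and the “personality” layer only describes them. Everything here is hypothetical: `DialogueModel` stands in for whatever LLM call a studio might use, `CannedDialogue` is an offline stub so the example runs without any API, and `LootTable` and `Npc` are illustrative names, not part of OpenClaw or any real engine.

```python
import random
from dataclasses import dataclass
from typing import Protocol


class DialogueModel(Protocol):
    """Hypothetical interface for the 'personality' layer (e.g. an LLM call)."""

    def reply(self, persona: str, event: str) -> str: ...


class CannedDialogue:
    """Offline stand-in for a language model so the sketch runs without an API."""

    def reply(self, persona: str, event: str) -> str:
        return f"[{persona}] {event}"


@dataclass
class LootTable:
    """Deterministic 'logic' layer: the game, not the model, decides drops."""

    items: dict[str, float]  # item name -> drop weight
    seed: int = 0

    def __post_init__(self) -> None:
        self.rng = random.Random(self.seed)  # seeded so runs are reproducible

    def roll(self) -> str:
        names = list(self.items)
        weights = list(self.items.values())
        return self.rng.choices(names, weights=weights, k=1)[0]


class Npc:
    def __init__(self, persona: str, loot: LootTable, dialogue: DialogueModel) -> None:
        self.persona = persona
        self.loot = loot
        self.dialogue = dialogue

    def on_defeated(self) -> tuple[str, str]:
        # 1. Game logic commits the outcome first.
        drop = self.loot.roll()
        # 2. The personality layer only describes what already happened.
        line = self.dialogue.reply(self.persona, f"You take the {drop}.")
        return drop, line
```

The design choice is that the model never decides anything the game economy depends on: swapping `CannedDialogue` for a real model client would change what the NPC says, but not what it drops.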

The Infrastructure Revolution: How LLMs and Agents Change Dev Pipelines

It isn’t just about what happens on your screen; it’s about what happens behind the scenes. Industry giants are doubling down on this infrastructure. Amazon’s recent integration of S3 Files for AI agents, which provides a native file system workspace, might sound dry to the average gamer, but it’s a game-changer. By removing the friction between data storage and agent logic, tools like this let developers create more reactive, persistent, and “alive” game worlds.

The same trend is visible across the broader tech ecosystem, for example in Block’s Managerbot, which acts as a proactive agent. Applied to game development, this approach enables “co-developer” systems. Studios like Landfall (the creators of Totally Accurate Battle Simulator) are even exploring financing models that leverage these new efficiencies. When AI handles grunt work such as asset placement or bug testing, indie developers can focus on the soul of the game, potentially ushering in a new golden age of experimental, highly polished indie titles.

The Double-Edged Sword: Chaos and Safety

Of course, the arrival of these agents brings significant risk. Anthropic’s decision to restrict access to its most powerful cybersecurity-focused AI models through Project Glasswing highlights a growing tension: as AI gets better at navigating digital systems, it also becomes a target for exploitation. If an agent can “rewrite its own skills,” what happens when that agent is exposed to malicious player input?

The industry is balancing this with caution. While Meta pushes forward with models like Muse Spark following the formation of its Superintelligence Labs, other companies are prioritizing child safety, as with Netflix’s new ‘Playground’ app. The chaos is real: game developers are essentially building living ecosystems that they don’t yet fully understand. The goal is to harness the creativity of these models while preventing the “hallucinations” that could break a game’s immersion or ruin its carefully crafted economy.

Industry Shifts and The Human Element

It is worth noting that while AI is the flavor of the month, the industry remains built on human talent. The recent reports of harassment at Halo Studios serve as a sobering reminder that no amount of machine learning can fix a broken corporate culture. Furthermore, the industry continues to mourn the loss of legends like Double Dragon creator Yoshihisa Kishimoto, whose work reminds us that games were, and always will be, a medium of human expression. AI is an instrument, but the composition still requires a human hand.

As we look forward, the synergy between human creativity and AI execution will define the next decade of gaming. Whether it’s the evolving visual fidelity of titles like Overwatch or Sony’s acquisition of specialized tech firms like Cinemersive Labs, the message is clear: the future is adaptive, intelligent, and inherently chaotic.

Frequently Asked Questions

What are AI agents in gaming?

Unlike traditional NPCs that follow a set of pre-written instructions, AI agents are autonomous entities capable of reasoning, planning, and adapting their behavior based on the player’s actions in real-time.

Will Claude or similar models replace game writers?

Unlikely. These models act as powerful assistants, capable of generating dialogue or procedural lore, but the vision, structure, and emotional resonance of a game’s narrative still require a human lead designer to guide the process.

What does “Claude, OpenClaw and the new reality” mean for the average player?

It means games will feel less like static environments and more like living, breathing systems. Expect smarter enemies, dynamic world-building, and quests that respond specifically to your choices in ways that feel personalized rather than scripted.

Is AI making games easier or harder to develop?

It’s a mix. AI makes the “heavy lifting” (like procedural generation or asset management) much faster, but it adds complexity to QA testing. When an AI can change its own behavior, it becomes much harder for developers to predict how the game might break, which calls for new, more robust testing frameworks.
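Rather than relying only on a fixed list of scripted test cases, one common approach to testing unpredictable behavior is to fuzz it: replay many seeded, randomized episodes and check that core rules (invariants) always hold. The Python sketch below is hypothetical throughout; `toy_agent_step` stands in for a real adaptive agent, and the gold and HP rules are example invariants, not taken from any particular game.

```python
import random
from dataclasses import dataclass


@dataclass
class WorldState:
    gold: int = 100
    hp: int = 50
    max_hp: int = 50


def toy_agent_step(state: WorldState, rng: random.Random) -> None:
    """Hypothetical stand-in for an adaptive agent: picks an unpredictable action."""
    action = rng.choice(["buy", "sell", "heal", "take_damage"])
    if action == "buy" and state.gold >= 10:
        state.gold -= 10
    elif action == "sell":
        state.gold += rng.randint(1, 20)
    elif action == "heal":
        state.hp = min(state.max_hp, state.hp + rng.randint(1, 15))
    elif action == "take_damage":
        state.hp = max(0, state.hp - rng.randint(1, 15))


def check_invariants(state: WorldState) -> list[str]:
    """Return a list of broken rules; an empty list means the world is sane."""
    problems = []
    if state.gold < 0:
        problems.append(f"negative gold: {state.gold}")
    if not 0 <= state.hp <= state.max_hp:
        problems.append(f"hp out of range: {state.hp}")
    return problems


def fuzz_agent(runs: int = 200, steps: int = 100) -> list[tuple[int, list[str]]]:
    """Replay many seeded random episodes and collect any invariant violations."""
    failures = []
    for seed in range(runs):
        rng = random.Random(seed)  # the seed makes each failure reproducible
        state = WorldState()
        for _ in range(steps):
            toy_agent_step(state, rng)
            problems = check_invariants(state)
            if problems:
                failures.append((seed, problems))
                break
    return failures
```

An empty result from `fuzz_agent()` means no episode broke the rules; any failure comes back with the seed that produced it, so a developer can replay exactly the run that went wrong.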