AI Agent Credentials Live: Gaming’s New Security Frontier

The Credential Problem in Modern Game Development

Game development has fundamentally changed. Studios no longer build everything in isolation. Modern games—especially those with AI-driven features—rely on:

- Cloud-based AI systems for NPC behavior and dialogue generation

- Procedural generation tools powered by machine learning

- Real-time adaptive difficulty systems

- Player behavior analysis and recommendation engines

- Anti-cheat systems using AI pattern recognition

Each of these systems requires credentials to authenticate and communicate. The problem emerges when you consider that game environments often run third-party code—from modders, asset creators, and middleware developers—that may not be fully vetted.

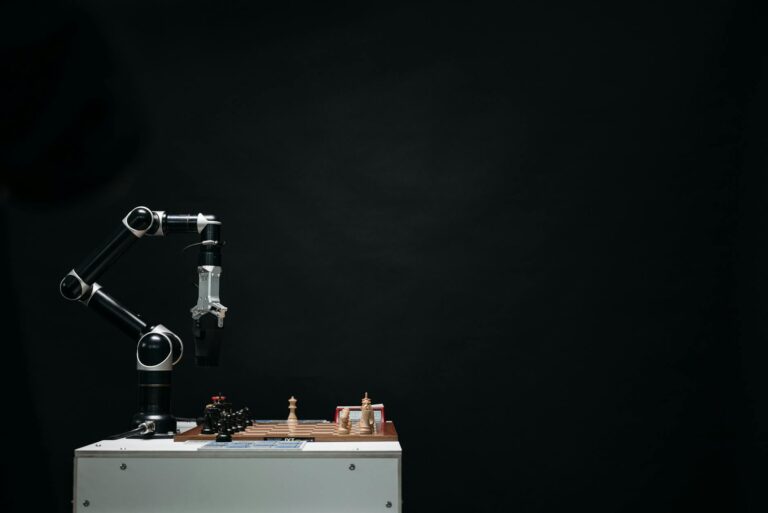

Think of it this way: In Skyrim, when players install mods, they’re running untrusted code within the game engine. Now imagine if that mod could accidentally (or maliciously) access the credentials that authenticate the game’s AI NPC behavior system. That’s the vulnerability we’re talking about.

Architecture One: The Isolated Sandbox Model

The first emerging architecture addresses the problem through aggressive isolation. This approach treats AI agent credentials like nuclear material—they live in a completely separate, locked-down container that untrusted code cannot access.

How the Sandbox Works

In this model, AI systems run in their own protected environment with restricted access points. The architecture breaks down like this:

- Credential Vault: All AI authentication tokens are stored in an encrypted vault, separate from the main game process

- Containerization: AI agents run in isolated containers with minimal dependencies

- API Gateway: Any request from untrusted code to AI systems must pass through a security gateway that validates permissions

- Audit Logging: Every credential access is logged and monitored for suspicious patterns

The benefit? Even if a mod or piece of untrusted code gets compromised, it can’t exfiltrate AI credentials because they’re literally in a different process space. The blast radius shrinks dramatically.
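To make the data flow concrete, here is a minimal Python sketch of the gateway idea. It is an illustration under stated assumptions, not how any particular engine does it: the names `CredentialVault`, `ApiGateway`, `weather_mod`, and `npc_dialogue` are all hypothetical, and everything runs in one process for brevity, whereas the model described above would keep the vault in a separate process or container.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


class CredentialVault:
    """Holds AI service tokens. Hypothetical; a real vault would live
    in a separate, locked-down process and store tokens encrypted."""

    def __init__(self, tokens: dict[str, str]) -> None:
        self._tokens = dict(tokens)

    def token_for(self, service: str) -> str:
        return self._tokens[service]


@dataclass
class ApiGateway:
    """Single entry point untrusted code must use to reach AI systems."""

    vault: CredentialVault
    allowed: dict[str, set[str]]  # caller id -> services it may call
    audit_log: list[dict] = field(default_factory=list)

    def call(self, caller: str, service: str, payload: str) -> str:
        permitted = service in self.allowed.get(caller, set())
        # Audit logging: record every attempt, permitted or not.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "caller": caller,
            "service": service,
            "permitted": permitted,
        })
        if not permitted:
            raise PermissionError(f"{caller} may not call {service}")
        token = self.vault.token_for(service)
        # The token is used here and never handed back to the caller.
        return self._forward(service, token, payload)

    @staticmethod
    def _forward(service: str, token: str, payload: str) -> str:
        # Stand-in for the real authenticated request to the AI backend.
        return f"{service} handled {payload!r}"


vault = CredentialVault({"npc_dialogue": "secret-token"})
gateway = ApiGateway(vault, allowed={"weather_mod": {"npc_dialogue"}})
print(gateway.call("weather_mod", "npc_dialogue", "greet player"))
```

The point of the design is that the mod only ever sees the result of the call. The token stays on the gateway side, and a denied request still leaves a trace in the audit log.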

Architecture Two: The Capability-Based Security Model

The second emerging architecture takes a different philosophical approach: instead of hiding credentials entirely, it implements granular permission systems called “capability-based security.”

Granular Permissions in Action

Rather than giving AI agents blanket access with a single credential, this model breaks permissions into specific capabilities. An NPC behavior system might have these capabilities:

- read:npc_state: can read NPC status and position

- write:npc_movement: can issue movement commands only

- read:player_location: can sense player position

- no:access_inventory: cannot access player inventory systems

This is similar to how OAuth scopes work in web applications, but applied to game architecture. When untrusted code requests access to AI systems, it receives only the specific capabilities it needs, not a master key.
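A rough Python sketch of the idea follows. The capability strings for NPC state, movement, and player location come from the example above; everything else (`CapabilityToken`, `require`, `move_npc`, `read_inventory`, and the `read:player_inventory` capability) is a hypothetical name invented for illustration. The sketch also assumes a deny-by-default design, so the inventory restriction is modeled by simply not granting that capability rather than by an explicit "no" entry.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CapabilityToken:
    """Lists exactly what the holder may do; anything not listed is denied."""

    holder: str
    capabilities: frozenset[str]

    def allows(self, capability: str) -> bool:
        return capability in self.capabilities


def require(token: CapabilityToken, capability: str) -> None:
    # Raise instead of returning False so a missed check cannot pass silently.
    if not token.allows(capability):
        raise PermissionError(f"{token.holder} lacks {capability}")


def move_npc(token: CapabilityToken, npc_id: str, x: float, y: float) -> str:
    require(token, "write:npc_movement")
    return f"{npc_id} moving to ({x}, {y})"


def read_inventory(token: CapabilityToken, player_id: str) -> list[str]:
    require(token, "read:player_inventory")  # hypothetical capability name
    return ["sword", "potion"]  # placeholder data


npc_ai = CapabilityToken(
    holder="npc_behavior",
    capabilities=frozenset(
        {"read:npc_state", "write:npc_movement", "read:player_location"}
    ),
)
print(move_npc(npc_ai, "guard_01", 3.0, 4.5))
```

With this token, `move_npc` succeeds, while calling `read_inventory(npc_ai, "player_1")` raises `PermissionError` because the inventory capability was never granted.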

Why This Matters for Game Security

Capability-based security creates a “principle of least privilege” environment. Even if untrusted code gains some level of access, it can only do specific things. The blast radius isn’t zero, but it’s dramatically limited. You’re not choosing between “full access” and “no access”—you’re choosing exactly what access is necessary.

The Real Impact on Gaming

You might be wondering: “Why should I care about this as a gamer?” The answer is that security vulnerabilities in AI systems affect the entire player experience.

Exploitation and Unfair Advantages

If AI agent credentials are exposed, cheaters could potentially manipulate game systems in ways that impact everyone. Imagine if Valorant’s anti-cheat AI system credentials were compromised: players could exploit vulnerabilities in real-time detection, ruining competitive integrity for legitimate players.

Data Privacy

Many modern games use AI to analyze player behavior for personalization and recommendations. If credentials controlling that AI system are exposed, sensitive player data becomes vulnerable. This includes playstyle patterns, skill levels, and behavioral analytics.

Service Disruption

AI systems running on cloud infrastructure often require continuous authentication. If credentials are compromised, hackers could disrupt these services, causing NPCs to malfunction, procedural generation to fail, or entire game modes to become unplayable.

Industry Context: Why This Matters Now

The urgency around AI credential security in gaming is increasing for several reasons:

AI is becoming standard infrastructure. It’s no longer a novelty feature. Games like Minecraft are integrating AI helpers, and procedural generation tools powered by machine learning are becoming industry standard. As AI becomes more central to core gameplay, protecting its infrastructure becomes essential.

Modding ecosystems are expanding. Games like Skyrim, Fallout 4, and Baldur’s Gate 3 have thriving modding communities. While this creates amazing player experiences, it also means untrusted code is running at scale within game engines.

Cloud gaming demands new approaches. Services like Xbox Game Pass and PlayStation Plus, and cloud-based gaming in general, require different security models than local-only games. AI systems that run server-side need more sophisticated credential management.

Hybrid Approaches: The Future

Smart game developers aren’t choosing between these two architectures—they’re combining them. A hybrid approach might work like this:

- Sensitive AI systems (like anti-cheat or player data analysis) run in isolated containers with strict credential vaults

- Less sensitive systems (like NPC dialogue or ambient behavior) use capability-based security with granular permissions

- All inter-system communication passes through monitored API gateways

- Regular security audits and penetration testing validate the entire system

This balanced approach gives developers flexibility while maintaining security. It’s what studios like Ubisoft and EA are implementing for their large-scale multiplayer games.
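One way to picture the hybrid split is as a small policy table that maps each AI system to a protection model. The sketch below is only an illustration of that idea: the system names, the `Protection` enum, and the `protection_for` helper are hypothetical, and falling back to the stricter model for unknown systems is an assumption of this sketch rather than something the approach requires.

```python
from enum import Enum


class Protection(Enum):
    ISOLATED_VAULT = "isolated container with credential vault"
    CAPABILITY = "capability-based permissions"


# Hypothetical classification of AI systems by sensitivity.
POLICY = {
    "anti_cheat": Protection.ISOLATED_VAULT,
    "player_analytics": Protection.ISOLATED_VAULT,
    "npc_dialogue": Protection.CAPABILITY,
    "ambient_behavior": Protection.CAPABILITY,
}


def protection_for(system: str) -> Protection:
    # Assumption: anything not explicitly classified gets the stricter model.
    return POLICY.get(system, Protection.ISOLATED_VAULT)


print(protection_for("npc_dialogue").value)
```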

What Gamers Should Know

As a player, here’s what matters:

- Choose reputable mods: Mods from trusted creators are less likely to exploit AI system vulnerabilities

- Keep games updated: Studios regularly patch credential-related vulnerabilities when discovered

- Use official channels: Games downloaded from official sources (Steam, Epic, console stores) have security vetting that unofficial sources don’t

- Report suspicious behavior: If AI systems start behaving strangely, report it to developers—it might indicate a security issue

FAQ: AI Agent Credentials and Game Security

What exactly are AI agent credentials in games?

AI agent credentials are digital authentication tokens that allow AI systems to access other game systems. Think of them like security passes in a building—they prove that an AI system has permission to read player data, control NPCs, or access procedural generation tools. If someone steals those passes, they can impersonate the AI system and access things they shouldn’t.

Can hackers really steal credentials from my game?

In poorly designed systems, yes. If credentials are stored in accessible locations or transmitted without encryption, they’re vulnerable. This is why the architecture improvements we discussed matter—they make credential theft much harder and less profitable for attackers.

How does this affect single-player games vs. multiplayer games?

Multiplayer games are at higher risk because credentials often control systems that affect many players simultaneously. A compromised credential in Valorant affects everyone in a match. Single-player games like Elden Ring have less exposure, but they’re still vulnerable to mods that might exploit local AI systems.

Why don’t game companies just encrypt everything?

Encryption is part of the solution, but it’s not complete. Credentials still need to be used—decrypted and transmitted—which creates windows of vulnerability. The architectures discussed in this article add layers of protection beyond just encryption.

Is this why some games have strict anti-cheat systems?

Partially, yes. Anti-cheat systems often run with elevated privileges to detect when credentials or AI systems are being tampered with. Games like Valorant use kernel-level anti-cheat partly to protect the credential systems that run their AI-driven security features.

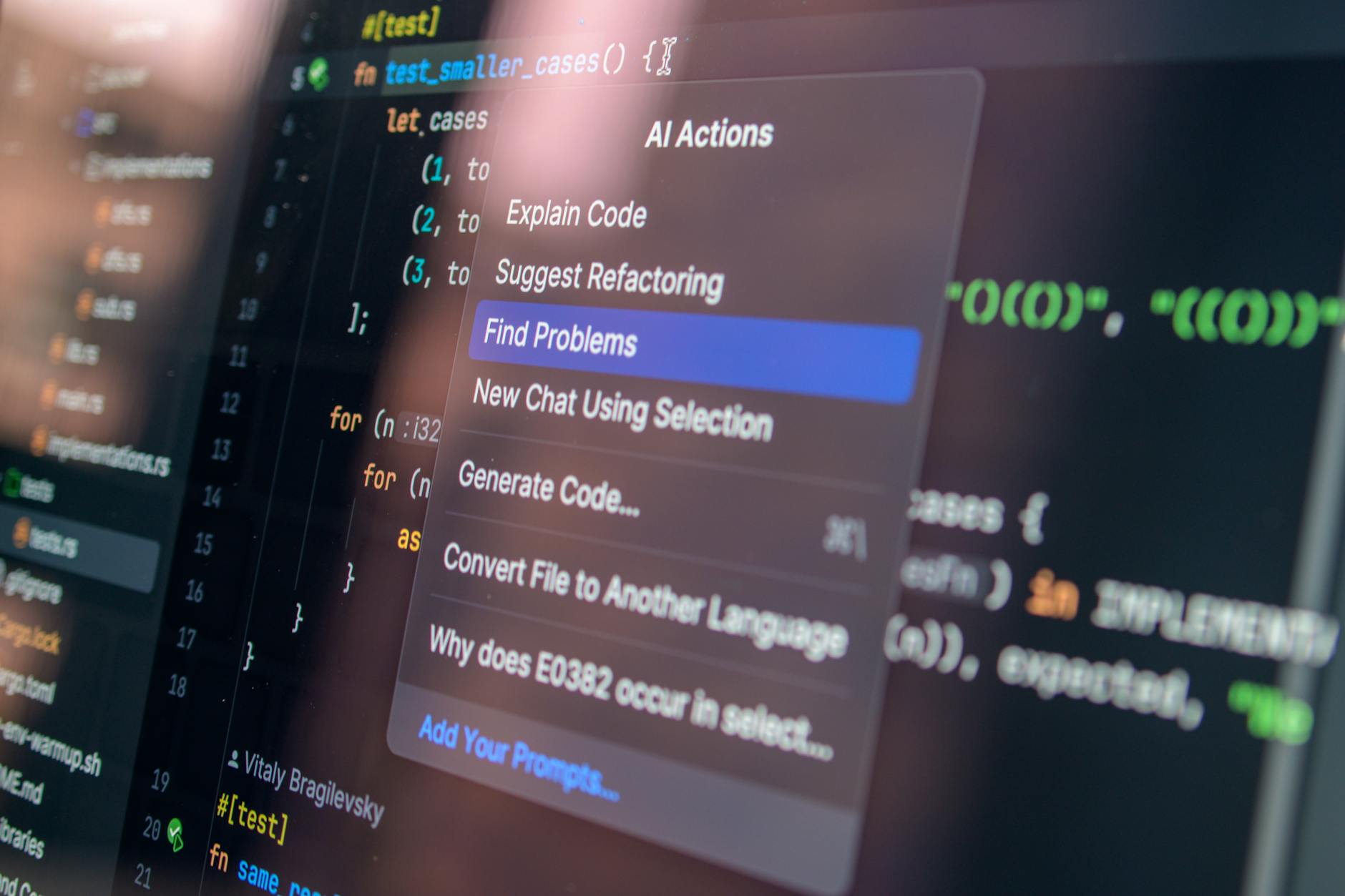

What should indie developers do about this?

Indie developers should use established game engines (Unreal, Unity, Godot) that implement security features out of the box. When integrating cloud-based AI services, use official SDKs that handle credential management securely rather than building custom solutions.

Are there gaming examples where this went wrong?

While specific breach details are rare in public disclosures, security researchers have found vulnerabilities in how game engines handle API credentials for cloud AI services. The industry is learning from these issues and implementing better practices.

How does this relate to AI agents becoming more autonomous?

As AI agents become more autonomous—making decisions, accessing more systems, and running more complex behaviors—their credentials become more valuable and more dangerous if compromised. The more powerful an AI agent is, the more carefully its credentials need to be protected.