PlayStation Naughty Dog AI: How Studios Are Reshaping Game Development

Disclosure: As an Amazon Associate, Bytee earns from qualifying purchases.

Picture the middle of a firefight in a game like The Last of Us Part II. Your AI companion doesn’t follow the script you’ve memorized. Instead, they read your movement, predict your next cover position, and flank the enemy before you even call for it. That wouldn’t be a designer’s hand-coded sequence. It would be machine learning running in real time. For years, game AI was a series of if-then statements: if player fires, then NPC ducks; if health is low, then retreat to waypoint three. It was predictable, often dumb, and after your third playthrough, you could recite every enemy patrol pattern like dialogue from a movie you’ve seen too many times. But PlayStation studios, particularly Naughty Dog and San Diego Studio, are reshaping how NPCs think, how worlds generate, and how games adapt to the way you actually play. This isn’t sci-fi talk anymore. It’s happening now, and it’s changing what “smart” means in game development.

Picture This: Your NPC Ally Reacts Before You Even Move

Traditional game AI is reactive. You do something; the game checks its decision tree and responds. In The Last of Us Part II, when you crouch behind a dumpster, the infected shamble in predictable search patterns. They’re dangerous, sure, but they follow rules. A designer wrote those rules. You learn them. You exploit them. You beat the game.

Now imagine an NPC companion trained on thousands of hours of player behavior data. Instead of following a flowchart, it’s learned what cover positions humans actually choose next. It’s internalized the rhythm of how players peek-and-shoot. It understands spacing, timing, and pressure. When you move, it doesn’t wait for a trigger—it predicts. That’s the shift happening inside PlayStation studios right now. Machine learning models are being trained on gameplay footage, player telemetry, and design intent so that NPCs don’t just react; they anticipate. They make mistakes sometimes, sure. But those mistakes feel human, not like bugs in a state machine. This is why players are suddenly talking about NPC companions feeling “smarter”—it’s not marketing. It’s measurable behavioral complexity that scales beyond what hand-coded scripting can deliver. Naughty Dog’s current development pipeline is integrating neural networks trained on millions of player interactions from shipped titles, allowing new companions to learn from real human decision-making patterns rather than designer assumptions.

Player expectations have shifted too. A decade ago, we celebrated NPCs that didn’t get stuck on geometry. Now, after years of exposure to procedural generation, adaptive difficulty, and systems-driven games, players expect the world to respond to them, not just present a static obstacle course. They want companions who feel like they’re thinking, not executing. They want enemies that challenge their tactics, not repeat the same flanking move every third encounter. PlayStation studios are chasing that expectation because the technology finally makes it possible.

What PlayStation Studios Mean by AI as a “Powerful Tool”

When Sony executives and Naughty Dog leadership talk about AI as a “powerful tool,” they’re not talking about replacing game designers with ChatGPT. That’s marketing confusion, and it’s worth cutting through. What they mean is concrete: AI accelerates asset creation, makes NPC behavior more scalable, and reduces the manual labor of level design iteration. It’s a force multiplier for human creativity, not a replacement for it. In developer speak, AI is solving pipeline bottlenecks that have plagued studios for years.

Here’s the reality: a human animator can hand-craft maybe 50-100 unique character animations per month. That animator is expensive, skilled, and finite. An AI system trained on thousands of existing animations can generate variations, blend between states, and create procedural movement that feels natural without requiring frame-by-frame human input. It doesn’t replace the animator—it means that animator spends less time on rote variations and more time on character-defining moments. In Uncharted, Nathan Drake’s climbing animations are hand-sculpted because they define his character. But the dozens of contextual idle poses, weight-shifts, and environmental interactions? Those can be procedurally generated and refined rather than manually keyed. That’s the adoption happening now.

Naughty Dog has publicly stated that AI tools are helping them iterate faster on NPC companion behavior—specifically, they’re using machine learning to analyze player telemetry and understand what makes a companion feel genuinely helpful versus annoying. San Diego Studio is using AI to procedurally generate enemy behavior patterns that adapt to player skill in real-time, which reduces the need for manual difficulty tuning across dozens of encounter scenarios. These aren’t hypothetical future benefits. These are workflow changes happening right now that let studios ship faster, iterate deeper, and scale content in ways that traditional pipelines couldn’t. The integration of middleware like Wwise for audio combined with custom neural network decision-making creates NPCs that don’t just move intelligently—they communicate intent through voice and action simultaneously.

How AI Actually Works Inside Modern Game Engines

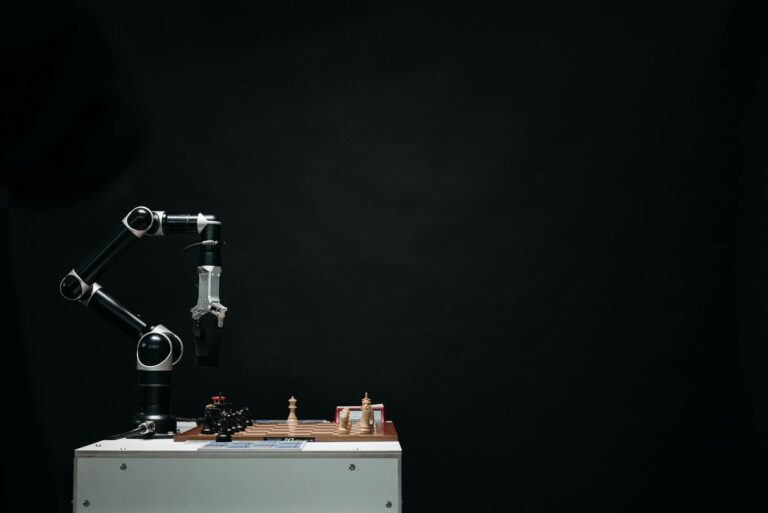

Let’s ground this in actual technology, because the difference between “AI” and “machine learning” matters, and most gaming discourse gets it wrong. Traditional game AI is algorithmic. It’s a decision tree: does the player see me? Yes → attack. No → patrol. Is my health below 30%? Yes → flee. These rules are written by humans, compiled into code, and executed millions of times per second. They’re fast, predictable, and completely deterministic. You know what’s coming because the game designer knows what’s coming.
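To make that concrete, here is a minimal Python sketch of the rule-based approach. The `NPCState` fields, the action names, and the decision to check health before visibility are illustrative assumptions, not code from any shipped game.

```python
from dataclasses import dataclass


@dataclass
class NPCState:
    player_visible: bool  # hypothetical sensor flag
    health_pct: float     # 0 to 100


def choose_action(state: NPCState) -> str:
    """Hand-written rules, checked in a fixed priority order."""
    # Assumed priority: survival first, then combat, then default behavior.
    if state.health_pct < 30:
        return "flee"
    if state.player_visible:
        return "attack"
    return "patrol"


print(choose_action(NPCState(player_visible=True, health_pct=80)))
print(choose_action(NPCState(player_visible=True, health_pct=20)))
print(choose_action(NPCState(player_visible=False, health_pct=80)))
```

The same input always produces the same output, which is exactly why players can memorize and exploit this style of AI.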

Machine learning is different. Instead of a human writing the rules, a neural network is trained on data (thousands of examples of “player behavior” or “NPC decision-making”) and learns patterns from that data. The network doesn’t have explicit rules; it has weights and parameters that have been adjusted to predict outcomes based on input. Feed it your current position, health, ammo count, and the enemy’s position, and the network outputs a probability distribution: 45% chance to advance, 30% chance to take cover, 15% chance to flank, 10% chance to retreat. The NPC either picks the highest-probability action or samples from the distribution, and it feels less mechanical because the decision-making is learned, not scripted.
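The difference between picking the top action and sampling is easy to show in a few lines of Python. This sketch uses the example probabilities quoted above. The action names and the `softmax` helper (the standard way to turn raw network scores into probabilities) are assumptions for illustration, since the article does not describe any studio’s actual model.

```python
import math
import random

ACTIONS = ["advance", "take_cover", "flank", "retreat"]


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def pick_greedy(probs):
    """Always choose the most likely action (deterministic)."""
    best = max(range(len(ACTIONS)), key=lambda i: probs[i])
    return ACTIONS[best]


def pick_sampled(probs, rng):
    """Choose an action at random, weighted by its probability."""
    return rng.choices(ACTIONS, weights=probs, k=1)[0]


probs = [0.45, 0.30, 0.15, 0.10]
# Raw scores that a network might emit; softmax recovers the same probabilities.
recovered = softmax([math.log(p) for p in probs])

rng = random.Random(0)  # seeded so runs are repeatable
greedy = pick_greedy(probs)
samples = [pick_sampled(probs, rng) for _ in range(1000)]
print(greedy, {a: samples.count(a) for a in ACTIONS})
```

Greedy selection would make the NPC advance every time in this state. Sampling lets it flank or retreat occasionally, which is where the less mechanical feel comes from.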

The training process is where the real work happens. Developers feed neural networks thousands of hours of gameplay footage, telemetry data from players, and design intent. An AI model learning NPC companion behavior might be trained on: (a) video clips of players in combat, (b) player input logs showing where they move and when they fire, (c) designer notes about what “good companion behavior” looks like in context. The network learns to predict the next action given the current game state. Over millions of training iterations, the network’s predictions get better. It learns that players who crouch tend to peek-and-fire rather than hold position. It learns that teammates get frustrated when companions block doorways. It learns the subtle choreography of cooperative play.
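The core idea of learning to predict the next action from logged behavior can be sketched without a neural network at all. The Python below uses simple frequency counts as a stand-in for a trained model. The telemetry rows, state fields, and action names are all invented for illustration, and a real system would use far richer state and a learned function rather than a lookup table.

```python
from collections import Counter, defaultdict

# Hypothetical telemetry: ((player_stance, under_fire), action_taken_next)
LOGS = [
    (("crouched", True), "peek_and_fire"),
    (("crouched", True), "peek_and_fire"),
    (("crouched", True), "hold_position"),
    (("standing", True), "take_cover"),
    (("standing", True), "take_cover"),
    (("standing", False), "advance"),
]


def train(logs):
    """Count how often each action followed each observed state."""
    counts = defaultdict(Counter)
    for state, action in logs:
        counts[state][action] += 1
    return counts


def predict(model, state):
    """Return {action: probability} for a seen state, or {} if unseen."""
    seen = model.get(state)
    if not seen:
        return {}
    total = sum(seen.values())
    return {action: n / total for action, n in seen.items()}


model = train(LOGS)
crouched = predict(model, ("crouched", True))
print(crouched)
```

Even this toy version picks up the pattern described above, that crouched players under fire mostly peek and fire. It also shows the weakness of any data-driven approach: for a state it has never seen, it has nothing to say.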

The catch: real-time performance. A single neural network inference (running the trained model once during gameplay) can take anywhere from a fraction of a millisecond to a few milliseconds, depending on model size and hardware. One NPC making smarter decisions isn’t a problem, and a modern GPU can batch many small inferences together. But a game running at 60 frames per second has roughly 16.7 milliseconds per frame for everything: rendering, physics, audio, and AI. If you have 20 NPCs, each making decisions 30 times per second, that’s 600 inferences per second, and at even a few milliseconds each, unbatched, the AI alone can eat the entire frame budget. This is why PlayStation studios are using hybrid approaches: machine learning for high-level decision-making (what should this NPC do next?), and traditional scripting for execution (play this animation, move to this waypoint). The AI handles strategy; the engine handles tactics. That’s how you keep frame rates stable while still getting the behavioral complexity you want.
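A quick back-of-the-envelope calculation shows why the numbers get uncomfortable. In this Python sketch, the 20 NPCs and 30 decisions per second come from the scenario above, while the 60 fps target, the 2 ms per inference, and the throttled rate of 2 decisions per second are assumptions picked for illustration. It also assumes inferences run one at a time with no batching.

```python
def inference_ms_per_frame(npcs, decisions_per_sec, ms_per_inference, fps):
    """Average unbatched NPC-inference time charged to each rendered frame."""
    inferences_per_frame = npcs * decisions_per_sec / fps
    return inferences_per_frame * ms_per_inference


FPS = 60                      # assumed target frame rate
frame_budget_ms = 1000 / FPS  # about 16.7 ms for rendering, physics, audio, AI

# Every NPC runs the model 30 times per second.
naive = inference_ms_per_frame(
    npcs=20, decisions_per_sec=30, ms_per_inference=2.0, fps=FPS
)

# Hybrid approach: the model picks a high-level goal twice per second,
# and cheap scripted code carries it out in between.
throttled = inference_ms_per_frame(
    npcs=20, decisions_per_sec=2, ms_per_inference=2.0, fps=FPS
)

print(f"budget {frame_budget_ms:.1f} ms, naive {naive:.1f} ms, throttled {throttled:.2f} ms")
```

Under these assumptions the naive setup needs more time than the whole frame allows, while the throttled version costs a small fraction of it. That gap is the practical argument for letting the model decide strategy only occasionally.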

What You See and Feel: Real Gameplay Changes

In the old system, NPC companions in games like The Last of Us Part I had scripted behavior. Ellie followed you through set sequences. She’d duck when you ducked, move when you moved, and provide cover fire on designer-intended cues. She was helpful, but she was on rails. You could predict every action three seconds in advance because they were baked into the level design. She never surprised you; she never made a genuinely independent tactical choice.

The AI version changes that fundamentally. An NPC trained on thousands of hours of cooperative gameplay learns to flank when you advance, provide suppressive fire when you’re exposed, and reposition when you’re pinned. Crucially, it learns when not to do those things. It understands ammo scarcity. It recognizes when you’re in a stealth section and shouldn’t fire. It reads your movement speed—slow crouch means you want quiet, sprint means you want aggressive support. The NPC doesn’t follow a flowchart; it adapts to your playstyle in real-time. You’re no longer managing an AI asset; you’re partnering with an entity that’s learning you.

Before and After: A Concrete Combat Encounter

Before (Scripted Behavior): You engage an enemy patrol in a warehouse. Your companion Ellie takes cover behind a metal crate on your left. Every time you encounter this patrol, she moves to the same crate. When the first enemy rounds the corner, nothing happens. When the second enemy appears, Ellie fires three shots and returns to cover. The patrol always flanks right toward the back exit. You’ve memorized this sequence. On your third playthrough, you pre-position grenades where the flank always happens. The encounter is conquered through rote learning, not tactical adaptation.

After (Machine Learning-Trained Behavior): You engage the same patrol. Ellie reads your movement: you’re advancing right, so she flanks left to create crossfire. The enemies respond to pressure in ways scripted routines wouldn’t allow. If you’re low on ammo (Ellie’s AI reads your inventory), she suppresses rather than eliminates. If you hold position (slow movement), she pushes forward to create space. The patrol doesn’t always flank right; sometimes they hold, sometimes they rush. Each encounter feels tactically distinct because Ellie’s decisions emerge from learned patterns, not predetermined sequences. You’re not exploiting a script; you’re fighting alongside a partner that adapts to you, against enemies that do the same.

The immersion gains are real, but so are the weirdness moments. Sometimes AI companions make inexplicable decisions: they flank into a dead end, waste ammo on a target you’ve already suppressed, or push forward when you clearly wanted to hold a defensive position. These moments break immersion in ways that scripted behavior never does, because scripted behavior is predictably competent. AI can be unpredictably dumb. The trade-off is worth it for most players, but it’s worth naming: smarter AI sometimes means weirder AI.

Inside PlayStation Studios: How Naughty Dog and San Diego Studio Are Using AI

The specifics matter, so let’s talk about what’s actually happening at two of PlayStation’s flagship studios right now.

Naughty Dog’s Approach: Character and Narrative AI

Naughty Dog’s bread and butter is cinematic single-player storytelling with strong NPC companions. The Last of Us series and Uncharted franchise live and die on how well those companions feel. Ellie, Sully, Lev—these characters need to feel like they’re thinking, not just executing designer intent. That’s where machine learning is making the biggest impact for Naughty Dog.

The studio is using AI to train NPC behavior models on gameplay telemetry from their own shipped games. When millions of players took cover at specific positions in The Last of Us Part II, Naughty Dog captured that data. They’re now using it to train new companion AI that understands what actual humans do in combat. This isn’t guesswork; it’s learned from real player behavior at scale. The result is NPCs that make decisions aligned with how real players think, which makes cooperation feel natural rather than scripted.

Beyond combat, Naughty Dog is experimenting with dialogue systems that respond to player history. Imagine an NPC companion that remembers your choices from earlier chapters and references them conversationally, not just in cutscenes but in ambient dialogue during gameplay. Traditional systems would require hand-writing hundreds of conditional dialogue lines. An AI system trained on narrative structure and character voice could generate contextually appropriate responses on the fly, with a human writer reviewing and refining. The writer isn’t replaced; they’re elevated from writing every line to writing the voice and intent, letting AI handle the combinatorial explosion of contextual variations.

San Diego Studio: World Generation and Combat AI

San Diego Studio is taking a different angle: procedural world generation and adaptive enemy behavior. In multiplayer games, balance is everything. A map that favors one team, a spawn point that’s too exposed, an enemy AI difficulty curve that’s too steep—these destroy player experience at scale. Hand-tuning is finite; you can only test so many scenarios before launch.

AI systems can generate thousands of map variations procedurally, then simulate millions of matches between different player skill levels and loadouts, identifying balance problems before a single human playtester touches them. Enemy AI can be trained on player behavior data to adapt its difficulty in real-time without the jarring “rubber-banding” of traditional dynamic difficulty. If you’re dominating, the enemy AI doesn’t suddenly become a literal wall; it makes smarter decisions, better positioning choices, and more effective use of resources. It learns your weakness and exploits it, rather than just cranking up damage numbers.
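The simulate-then-flag loop can be sketched in a few lines of Python. This is a deliberately simplified stand-in: the map names, the `spawn_bonus` values, and the 3% tolerance are invented, and the map’s bias is fed in directly as a number. In a real pipeline that bias would be unknown and would only show up by running bots on the generated map.

```python
import random


def simulate_win_rate(spawn_bonus, matches, rng):
    """Fraction of simulated matches won by team A.

    Teams are evenly skilled; spawn_bonus is a hypothetical map-level edge
    for team A, expressed as extra win probability.
    """
    p_team_a = 0.5 + spawn_bonus
    wins = sum(1 for _ in range(matches) if rng.random() < p_team_a)
    return wins / matches


def flag_unbalanced(maps, matches=20000, tolerance=0.03, seed=0):
    """Return the names of maps whose win rate strays too far from 50%."""
    rng = random.Random(seed)  # seeded so the report is repeatable
    flagged = []
    for name, bonus in maps.items():
        rate = simulate_win_rate(bonus, matches, rng)
        if abs(rate - 0.5) > tolerance:
            flagged.append(name)
    return flagged


# Hypothetical generated maps and their hidden spawn advantage for team A.
MAPS = {"warehouse": 0.0, "rooftops": 0.01, "canyon": 0.08}
flagged = flag_unbalanced(MAPS)
print(flagged)
```

The value here is throughput rather than cleverness. Tens of thousands of simulated matches per map is cheap for a machine and out of reach for a human playtest team, so lopsided maps can be caught and discarded before anyone plays them.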

For a studio managing multiple game modes, seasonal content, and constant balance patches, this is a massive efficiency gain. San Diego Studio can iterate faster, catch balance issues earlier, and scale content production because they’re not manually designing and testing every scenario.

The Tradeoffs: What AI Takes Away (And Why Players Should Care)

Here’s where we need to be skeptical, because the hype is real and the downsides are too. AI in games isn’t a pure win. It brings friction alongside the benefits, and players should understand what they’re trading away.

First: unpredictability can break immersion just as easily as it can enhance it. In Star Wars Outlaws, the enemy AI learned to exploit player patterns, which was intended as a feature. But players reported moments where AI made decisions that felt broken—enemies ignoring obvious tactical advantages, or making moves so suboptimal they seemed like bugs rather than learned behavior. The line between “intelligently unpredictable” and “incomprehensibly dumb” is thinner than it sounds. When an NPC does something you didn’t expect, you want to feel outmaneuvered, not confused. This is a documented limitation of current neural network approaches: the training data quality directly determines output quality, and if your training set includes player mistakes or edge cases, the AI will learn and replicate those errors.

Second: performance cost. Running neural network inference on thousands of NPCs simultaneously isn’t free. Older PlayStation 4 hardware, already stretched thin, will feel the impact. This creates a generational divide where PS5 games can afford richer AI, but PS4 ports have to dial back NPC complexity or run at lower frame rates. That’s not a marketing problem; that’s a real technical constraint that affects how games ship.

Third: data collection and privacy. Training AI models requires data. Tons of it. Player telemetry, gameplay footage, behavioral patterns—all of it feeds the models. Sony and other studios are collecting this data with player consent (buried in terms of service), but the scale is worth understanding. You’re not just playing a game; you’re training the AI that will power the next game. Whether that’s ethically acceptable depends on your comfort level with data harvesting, but it’s worth being explicit about.

Fourth: job displacement in specific roles. QA testers who specialize in balance testing are already seeing reduced demand because AI can simulate millions of matches faster than humans can play thousands. Level designers whose job was primarily layout optimization are facing pressure because procedural generation can handle much of that work. These aren’t hypothetical future concerns; they’re happening now at studios using these tools. The industry isn’t collapsing—new roles in AI training and refinement are emerging—but the transition is real and it’s not painless for workers.

Fifth: quality variance. AI-generated content can be inconsistent. A procedurally generated level might have bottlenecks that make it unplayable, or enemy behavior might exploit an unintended loophole in game systems. This requires human review, iteration, and refinement. You can’t just flip on AI content generation and ship it. You need humans in the loop, which means the efficiency gains are real but not infinite.

What’s Coming: The Next Wave of AI in PlayStation Games

Sony’s roadmap is becoming clearer. The company is investing heavily in AI research through its PlayStation Studios division, and several upcoming titles are confirmed to use machine learning systems for NPC behavior, world generation, and content creation. While specific game announcements are sparse (studios are still figuring out how to talk about this without overpromising), the direction is obvious: AI will become a standard tool in AAA game development, not a novelty feature.

The technical challenges remaining are substantial. Real-time performance on console hardware is improving but still limited. Training data bias is a problem—if your AI is trained on millions of hours of player behavior, and that player base skews toward one playstyle or demographic, the AI will learn and reinforce those biases. Explainability is another frontier: when an AI makes a decision, developers need to understand why, so they can trust it and refine it. Right now, neural networks are often black boxes—they make good decisions, but the reasoning is opaque.

Industry-wide, it will probably take three to five years before AI-powered NPC behavior and procedural generation are standard in AAA games rather than cutting-edge. Indie studios will lag because the tools and training data are expensive to access. Mobile games will adopt it faster because performance requirements are lower. PC gaming will push the boundaries hardest because hardware is less constrained.

The signal that this technology has truly arrived: when a game ships with AI-driven NPC behavior and nobody notices because it feels natural. When players stop saying “wow, the AI is smart” and just say “the companion feels like a real person.” That’s when the technology has matured from novelty to invisible infrastructure. We’re not there yet, but we’re close.

PlayStation’s AI bet isn’t about replacing human creativity—it’s about scaling it, accelerating it, and making games that respond to how players actually behave rather than how designers predicted they would.

Frequently Asked Questions

Does AI in games make NPCs feel smarter or just more unpredictable?

Both. Machine learning-trained NPCs make decisions based on patterns learned from thousands of hours of player behavior, so they often feel smarter: they anticipate your moves and adapt to your playstyle. But they also introduce unpredictability that hand-coded scripting can’t match, which sometimes reads as genuinely intelligent and sometimes reads as baffling. The best implementations balance both: NPCs that surprise you because they learned something about how you play, not because their code is broken. When it works, the payoff is fewer repeated scripted encounters and more tactical variety across playthroughs.

Which PlayStation games are already using this AI technology?

Sony and Naughty Dog have not officially confirmed any specific shipped title that uses neural network-based NPC behavior in production, but the technology is actively being developed and tested in current development pipelines. Upcoming PlayStation titles are expected to incorporate AI-powered companions and adaptive difficulty systems, though announcements have been vague. Experimental AI systems tied to The Last of Us Part II and the Uncharted series are said to be in development, but how much neural network integration exists in shipped versions, as opposed to future titles, remains undisclosed. The shift is happening gradually: most AI integration today is in development and testing rather than in games you can buy.

Will AI replace human game designers, writers, and level designers?

Not entirely, but certain roles will shift. AI is automating routine tasks like balance testing, animation variation, and procedural level generation—work that’s important but not creatively fulfilling. This reduces demand for some QA and junior design positions, but it creates new roles in AI training, refinement, and oversight. The industry needs fewer people doing rote work and more people directing AI systems. It’s a real transition with real job displacement, but game development as a creative field isn’t being eliminated—it’s being restructured. Studios like Naughty Dog and San Diego Studio are already hiring AI specialists while reducing headcount in manual asset creation roles.

How does AI-powered NPC behavior affect game difficulty and balance?

AI-trained companions and enemies can adapt difficulty dynamically without the artificial rubber-banding of traditional systems. If you’re dominating, the AI doesn’t just raise enemy damage. It makes smarter tactical decisions, positions itself better, and uses resources more effectively. This creates a difficulty curve that feels organic rather than tuned. In a game like The Last of Us Part II, an AI-trained companion could read your ammo state and adjust its suppressive fire accordingly. For multiplayer, AI can simulate millions of matches to identify balance problems before launch, reducing the need for post-launch patches driven by player feedback.

Is there a performance cost to running AI systems in real-time gameplay?

Yes. Neural network inference consumes GPU compute, which means fewer resources for graphics, physics, and other systems. Modern consoles like PS5 can handle it, but older hardware like PS4 will feel the impact. Studios are using hybrid approaches—AI for high-level NPC decisions, traditional scripting for execution—to minimize performance cost. As a player, you might see lower frame rates, reduced NPC counts, or simpler visual effects on older hardware when AI systems are active. This is why next-generation exclusive titles will showcase AI-driven behavior more prominently than cross-generational ports.