Is On-Device AI a Risk? 5 Signs Data Drift Is Hitting Your Game

Disclosure: As an Amazon Associate, Bytee earns from qualifying purchases.

Odds are your development team is running machine learning models on player devices right now, and doing it without your security team knowing. And the models are slowly degrading in ways nobody is measuring.

The culprit is data drift: the gap that opens when real-world data diverges from the data a model was trained on, silently degrading its performance. In gaming, it’s a ticking time bomb that can compromise player safety, enable cheating, and erode competitive integrity, all while staying invisible to security teams.

Why Local AI Inference Is Now Standard (And Why That Matters)

Five years ago, running machine learning on a player’s PC or console was technically difficult and computationally expensive. Today, it’s increasingly common.

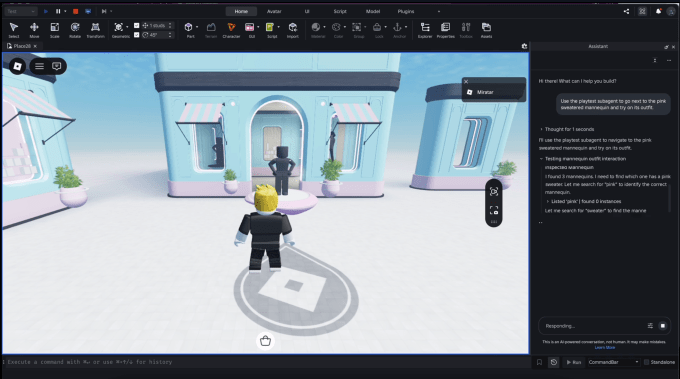

Consider the kinds of systems this makes possible. A real-time strategy game like StarCraft II could run a local neural network that adapts its opponent AI to a player’s strategy without sending every decision to Blizzard’s servers. A competitive shooter like Valorant could run behavioral-analysis models client-side to catch aimbot patterns faster than server-side detection alone. An open-world game like Cyberpunk 2077 could drive NPC behavior, crowd interactions, and environmental storytelling with locally running procedural AI that would be impractical if every decision required a round-trip to cloud servers.

The business case is compelling: local inference reduces latency, cuts server costs, improves offline functionality, and creates better player experiences. Game developers love it because it’s efficient. Security teams should hate it because it’s invisible.

The moment you deploy an AI model to a player’s device, you’ve given up direct control over its behavior. You can’t monitor every inference. You can’t log every decision. You can’t patch the model in real time. And unless you build for it, you can’t measure whether the model is still doing what it was trained to do.

What Data Drift Looks Like in Gaming

Data drift happens when the distribution of input data changes over time, causing a model trained on historical data to make progressively worse predictions on current data.

Imagine League of Legends deployed a machine learning model to detect toxic player behavior—trained on chat logs from 2022. By 2024, players have developed new slang, new memes, new ways to harass teammates that the model never saw during training. The model’s accuracy drops. False negatives increase. Toxic players slip through. The system doesn’t flag the degradation—it just silently performs worse.

Or consider an anti-cheat model trained on legitimate player movement patterns. As the game’s meta evolves, legitimate players move differently. New strategies emerge. Mechanical skill improves. What looked like cheating in training data might just be a pro player executing a new technique. The model starts false-flagging legitimate players, creating frustration and eroding trust in the anti-cheat system.

Nothing in either scenario requires an exotic failure; a live game that keeps evolving is enough. The difference between a healthy AI system and a broken one is often invisible until the damage is catastrophic.
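One way to make that invisibility measurable is the population stability index (PSI), a common drift statistic that compares how a feature was distributed in training data with how it is distributed in live telemetry. Below is a minimal pure-Python sketch, not a production implementation. It assumes you already log a numeric model input; the feature (a hypothetical per-match average flick speed), the sample values, the hand-picked bin edges, and the 0.2 alert threshold are all illustrative.

```python
import math
from bisect import bisect_right

def bin_fractions(values, edges, eps=1e-6):
    """Share of values in each bin defined by sorted interior edges.

    k edges give k + 1 bins; eps stands in for empty bins so log() stays finite.
    """
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [max(c / len(values), eps) for c in counts]

def population_stability_index(expected, actual, edges):
    """PSI = sum over bins of (a - e) * ln(a / e); 0.0 means identical binned shares."""
    e = bin_fractions(expected, edges)
    a = bin_fractions(actual, edges)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Hypothetical model input: average flick speed per match (degrees/second).
training = [310, 320, 330, 340, 350, 360, 370, 380, 390, 400]
live = [380, 390, 400, 410, 420, 430, 440, 450, 460, 470]
edges = [340, 370, 400, 430]  # hand-picked here; often training quantiles in practice

psi = population_stability_index(training, live, edges)
# Often-cited rule of thumb: < 0.1 little shift, > 0.25 major shift.
# 0.2 is an illustrative alert threshold, not a standard.
if psi > 0.2:
    print(f"PSI={psi:.2f}: live inputs no longer match training; review the model")
```

PSI only says the inputs no longer look like training data; it does not say whether accuracy has dropped, which still requires labeled outcomes.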

Five Signs Data Drift Is Already Undermining Your Security Models

Sign 1: Increasing False Positives (Or False Negatives) Over Time

Your anti-cheat system was accurate when you shipped it. Three months later, legitimate players are getting banned at twice the rate. Or toxic players are slipping through your moderation filters with increasing frequency.

This is the clearest symptom of data drift. When model performance degrades asymmetrically (false positives spike while true positives stay stable, or true positives drop while false positives hold steady), you’re watching a model fail in real time.

The challenge: most game studios don’t have automated monitoring for this. They wait for player complaints, support tickets, and community outcry. By then, the damage is done. Innocent players have been banned. Bad actors have exploited the gap.
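Automated monitoring for this sign can start small: measure each week’s false-positive rate and alert when it exceeds a multiple of the rate measured at launch. The sketch below assumes a hypothetical `ReviewedFlag` record in which each player-match has been labeled after the fact, for example through appeals and manual review; the 2x multiplier and the minimum sample size are illustrative, and the same pattern applies to false negatives.

```python
from dataclasses import dataclass

@dataclass
class ReviewedFlag:
    """Hypothetical record: one player-match, labeled after the fact."""
    week: int              # sequential reporting period
    model_flagged: bool    # did the anti-cheat model flag this player?
    confirmed_cheat: bool  # ground truth from appeals or manual review

def false_positive_rate(records):
    """FP / (FP + TN): share of legitimate players the model flagged."""
    legit = [r for r in records if not r.confirmed_cheat]
    if not legit:
        return None
    return sum(r.model_flagged for r in legit) / len(legit)

def weekly_fpr_alerts(records, baseline_fpr, multiplier=2.0, min_legit=50):
    """Return (week, fpr) for weeks whose FPR exceeds multiplier * baseline_fpr."""
    alerts = []
    for week in sorted({r.week for r in records}):
        batch = [r for r in records if r.week == week]
        if sum(not r.confirmed_cheat for r in batch) < min_legit:
            continue  # too few legitimate players reviewed to trust the estimate
        fpr = false_positive_rate(batch)
        if fpr > multiplier * baseline_fpr:
            alerts.append((week, fpr))
    return alerts

# Launch week: 1 of 100 legitimate players flagged. Week 12: 5 of 100.
records = ([ReviewedFlag(1, i < 1, False) for i in range(100)]
           + [ReviewedFlag(12, i < 5, False) for i in range(100)])
print(weekly_fpr_alerts(records, baseline_fpr=0.01))  # [(12, 0.05)]
```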

Sign 2: Predictions Cluster Around Certain Player Segments While Failing on Others

Your toxicity detection model works great for English-speaking players in North America but fails catastrophically for players in other regions, languages, or cultural contexts.

This is demographic drift, a form of data drift in which the players a model serves differ, segment by segment, from the players it was trained on, so it performs unevenly across populations. A model trained primarily on North American player data doesn’t understand cultural communication norms in Asia, Europe, or Latin America. It flags ordinary banter as abuse. It misses actual abuse.

In competitive games like Dota 2, this creates a compounding problem: players in underrepresented regions get worse moderation outcomes, which drives them away from the game, which further reduces training data diversity, which makes the problem worse.
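Catching this means breaking error rates down by segment instead of reporting one global number. A minimal sketch, assuming moderation decisions have been labeled after the fact and tagged with a hypothetical region-language code; the segment names, the use of the training-heavy segment as the reference, and the 1.5x gap are illustrative.

```python
from collections import defaultdict

def error_rates_by_segment(rows):
    """rows: iterable of (segment, predicted_toxic, actually_toxic).

    Returns {segment: {"fpr": ..., "fnr": ...}}; a rate is None when the
    segment has no examples of the class it is computed over.
    """
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for segment, predicted, actual in rows:
        c = counts[segment]
        if actual:
            c["tp" if predicted else "fn"] += 1
        else:
            c["fp" if predicted else "tn"] += 1
    rates = {}
    for segment, c in counts.items():
        negatives, positives = c["fp"] + c["tn"], c["tp"] + c["fn"]
        rates[segment] = {
            "fpr": c["fp"] / negatives if negatives else None,
            "fnr": c["fn"] / positives if positives else None,
        }
    return rates

def lagging_segments(rates, reference, metric="fpr", gap=1.5):
    """Segments whose metric exceeds gap * the reference segment's value."""
    ref = rates[reference][metric]
    if ref is None:
        return []
    return sorted(s for s, r in rates.items()
                  if s != reference and r[metric] is not None and r[metric] > gap * ref)

# Invented data: share of clean chat messages wrongly flagged, per segment.
rows = ([("NA-en", i < 2, False) for i in range(100)]
        + [("BR-pt", i < 9, False) for i in range(100)]
        + [("KR-ko", i < 2, False) for i in range(100)])
rates = error_rates_by_segment(rows)
print(lagging_segments(rates, reference="NA-en"))  # ['BR-pt']
```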

Sign 3: Model Confidence Stays High While Accuracy Tanks

The model says it’s 95% confident in its decision. But it’s wrong more often than it was last quarter.

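This is insidious. A well-calibrated model’s confidence tracks how often it is right, and it should express more uncertainty when it encounters unfamiliar data. But many models trained with standard techniques don’t do this naturally; they tend to be overconfident on inputs unlike their training data. They remain confidently wrong, and the confidence score alone gives your security team no way to know.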

In an anti-cheat context, a high-confidence false positive ban is a player support nightmare. In a moderation context, it means punishing a player for abuse they never committed, with nothing in the system signaling doubt.
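Comparing stated confidence with observed accuracy is a calibration check, and expected calibration error (ECE) is one standard way to summarize it: bin decisions by confidence, then take the sample-weighted average of |accuracy - mean confidence| across bins. The sketch assumes you can label a sample of past decisions as correct or incorrect; the quarter-over-quarter comparison and the numbers are invented for illustration.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """ECE: weighted average over confidence bins of |accuracy - mean confidence|.

    confidences: the model's top-class probability per decision, in [0, 1].
    correct: whether each decision was later confirmed correct.
    """
    if len(confidences) != len(correct) or not confidences:
        raise ValueError("need equal-length, non-empty inputs")
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 lands in the top bin
        bins[idx].append((conf, ok))
    total = len(confidences)
    ece = 0.0
    for b in bins:
        if not b:
            continue
        avg_conf = sum(c for c, _ in b) / len(b)
        accuracy = sum(ok for _, ok in b) / len(b)
        ece += (len(b) / total) * abs(accuracy - avg_conf)
    return ece

# Last quarter: 95%-confident decisions were right 19 times out of 20.
last_q = expected_calibration_error([0.95] * 20, [True] * 19 + [False])
# This quarter: still 95% confident, but right only 14 times out of 20.
this_q = expected_calibration_error([0.95] * 20, [True] * 14 + [False] * 6)
print(f"ECE last quarter {last_q:.2f}, this quarter {this_q:.2f}")
```

A rising ECE while stated confidence stays flat is exactly the "confidently wrong" pattern described above.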

Sign 4: Edge Cases and Rare Events Spike in Frequency

Your model performs great on common scenarios—typical ranked matches, standard chat interactions, normal player behavior. But unusual situations—new game modes, seasonal events, limited-time mechanics—cause the model to fail spectacularly.

When Valorant introduces a new agent, or Overwatch 2 launches a limited-time event, the player behavior distribution shifts. Movement patterns change. Ability usage patterns change. Communication patterns change. Models trained on pre-event data haven’t seen this distribution before.

If you’re not actively monitoring for this, your anti-cheat or moderation systems become unreliable precisely when you need them most—when the game is evolving.
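A cheap early-warning signal is to check, per game mode or event, how much live input falls outside anything the model saw in training. The sketch assumes telemetry rows tagged with a hypothetical `mode` field plus numeric features; the feature names, training ranges, and 10% alert threshold are illustrative.

```python
def training_ranges(rows, features):
    """Min/max of each feature across the training rows."""
    return {f: (min(r[f] for r in rows), max(r[f] for r in rows)) for f in features}

def out_of_range_rate_by_mode(live_rows, ranges, training_modes):
    """Share of live rows per mode with any feature outside its training range.

    Modes never seen in training are reported as fully novel (rate 1.0).
    """
    by_mode = {}
    for row in live_rows:
        by_mode.setdefault(row["mode"], []).append(row)
    report = {}
    for mode, rows in by_mode.items():
        if mode not in training_modes:
            report[mode] = 1.0
            continue
        outside = sum(
            any(not (lo <= row[f] <= hi) for f, (lo, hi) in ranges.items())
            for row in rows
        )
        report[mode] = outside / len(rows)
    return report

train = [{"mode": "ranked", "ability_casts": c, "move_speed": s}
         for c, s in [(3, 5.0), (6, 5.4), (9, 5.8)]]
ranges = training_ranges(train, ["ability_casts", "move_speed"])
live = [
    {"mode": "ranked", "ability_casts": 7, "move_speed": 5.5},
    {"mode": "ranked", "ability_casts": 14, "move_speed": 5.6},  # e.g. a new agent's kit
    {"mode": "event_brawl", "ability_casts": 20, "move_speed": 7.0},
]
report = out_of_range_rate_by_mode(live, ranges, training_modes={"ranked"})
alerts = {m: r for m, r in report.items() if r > 0.10}
print(alerts)
```

Range checks miss shifts that stay inside the training range, so they work best alongside a distribution comparison such as PSI.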

Sign 5: You Have No Automated Monitoring for Model Performance in Production

This is the meta-sign: you don’t know if data drift is happening because you’re not measuring it.

Most game studios deploy AI models and then… stop thinking about them. They don’t track prediction accuracy over time. They don’t monitor input data distributions. They don’t set up alerts for performance degradation. They certainly don’t have processes to retrain models when drift is detected.

If your answer to “How do you know your anti-cheat model is still accurate?” is “Player complaints tell us,” you’re operating blind.

Why Your Security Team Doesn’t Know This Is Happening

Three structural reasons explain why data drift in gaming AI remains a security blind spot:

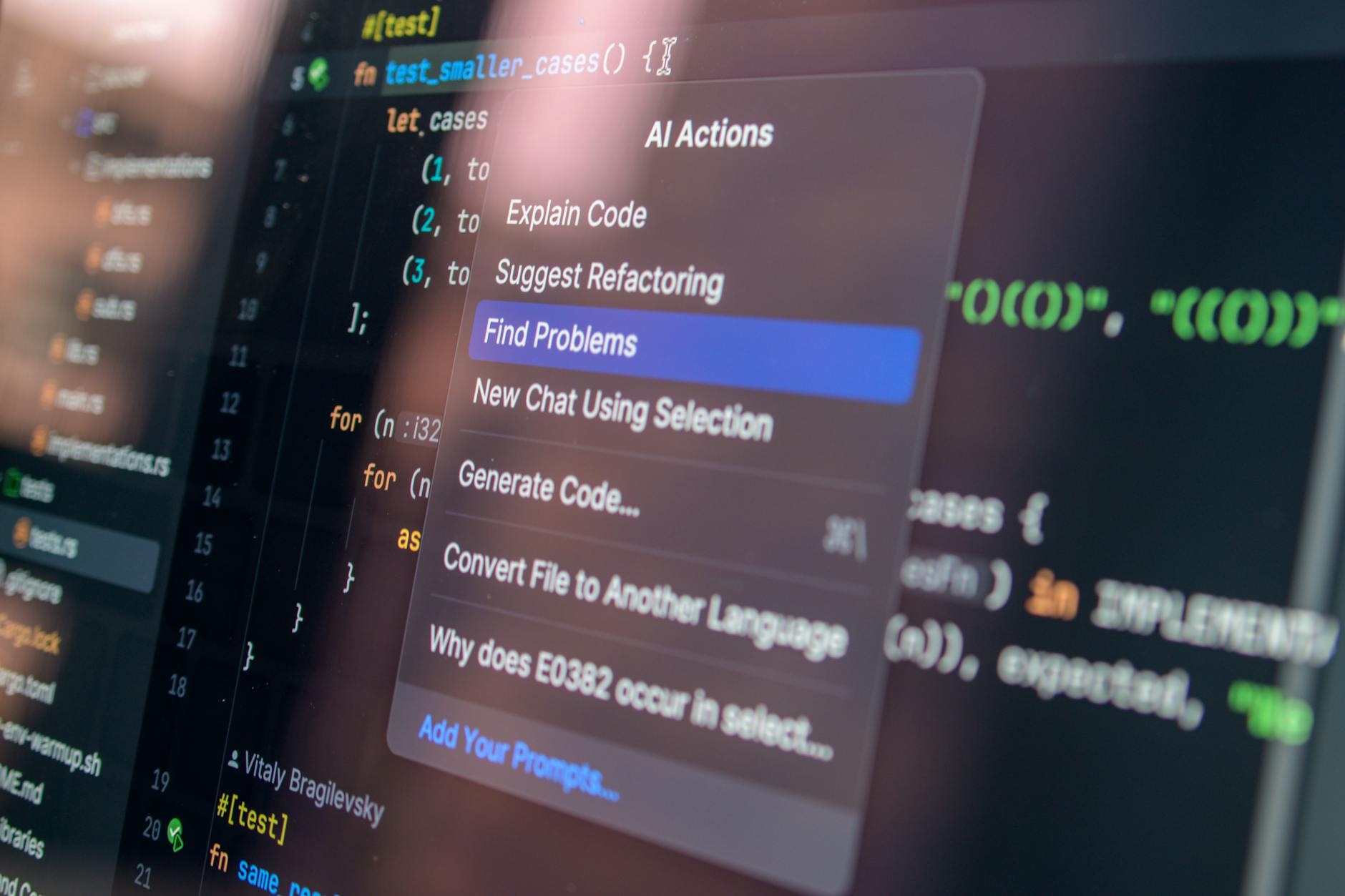

First: Different teams own different pieces. Game developers deploy AI for gameplay features. Security teams worry about network traffic and data protection. DevOps manages infrastructure. Nobody owns “AI model reliability in production,” so nobody monitors it.

Second: Local inference is invisible to traditional security tools. Your SIEM can’t see what’s happening inside a player’s game process. Your threat detection systems monitor network traffic, but they can’t audit the decision-making of a locally running neural network. The model is a black box running on untrusted hardware with untrusted users.

Third: Gaming culture doesn’t prioritize this yet. The gaming industry has spent twenty years building expertise in anti-cheat systems, moderation infrastructure, and player safety. But those frameworks assume human review, server-side logging, and centralized control. Local AI models violate all three assumptions.

The result: data drift in gaming AI goes undetected until it causes real harm—false bans, missed harassment, compromised competitive integrity, or worse.

What Happens When Data Drift Breaks Your Game

The consequences cascade across three dimensions:

Player Trust: If your anti-cheat system starts banning legitimate players, players leave. If your moderation system stops catching abuse, players feel unsafe. Both destroy community health.

Competitive Integrity: In competitive games, a degraded anti-cheat model is a competitive advantage for cheaters. As the model’s accuracy drops, cheating becomes more viable. Pro players, streamers, and competitive communities notice. The esports ecosystem suffers.

Business Risk: False-positive bans create legal exposure. Missed harassment creates liability. Poor moderation creates PR disasters. And unlike traditional security breaches, these problems often emerge gradually and publicly, in front of your entire community.

Building Detection and Response Systems

The fix requires three layers:

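Layer 1: Automated Monitoring. Set up continuous monitoring of model performance metrics in production. Track accuracy, precision, recall, and false positive/negative rates over time. Alert when metrics degrade beyond acceptable thresholds. This requires instrumenting your game code to log predictions and outcomes, then running analysis pipelines on that data. Because ground truth (appeal results, moderator reviews, confirmed reports) often arrives days after a prediction, those pipelines have to join late outcomes back to the original predictions.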
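Instrumenting the client typically means emitting a compact record per inference and joining it with the outcome once one is known. A minimal sketch of that log-then-join step, assuming JSON-lines telemetry; the `PredictionEvent` type and every field name are hypothetical.

```python
import json
from dataclasses import asdict, dataclass

@dataclass
class PredictionEvent:
    """Hypothetical per-inference telemetry record emitted by the game client."""
    event_id: str          # unique ID so the outcome can be joined later
    model_version: str     # which shipped model produced the prediction
    segment: str           # e.g. region-language, for per-segment metrics
    predicted_label: bool
    confidence: float

def to_log_line(event):
    """Serialize one prediction as a JSON line for the telemetry pipeline."""
    return json.dumps(asdict(event), sort_keys=True)

def join_outcomes(log_lines, outcomes):
    """Attach ground truth from outcomes (event_id -> bool) to logged predictions.

    Predictions without a known outcome keep actual_label=None, so the
    pipeline can tell "not labeled yet" apart from "labeled".
    """
    joined = []
    for line in log_lines:
        record = json.loads(line)
        record["actual_label"] = outcomes.get(record["event_id"])
        joined.append(record)
    return joined

lines = [
    to_log_line(PredictionEvent("e1", "ac-2.3", "EU-de", True, 0.97)),
    to_log_line(PredictionEvent("e2", "ac-2.3", "NA-en", False, 0.88)),
]
joined = join_outcomes(lines, {"e1": False})  # e1's ban was overturned on appeal
```

Logging `model_version` and `segment` with every prediction is what later lets you compare metrics across shipped builds and player populations.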

Layer 2: Demographic Parity Testing. Regularly evaluate model performance across player segments—by region, language, rank, playstyle. Identify where the model performs poorly and why. This catches demographic drift before it creates widespread problems.
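Per-segment comparisons need a guard against noise: a small region with a handful of false positives can look alarming by chance. One standard option is a two-proportion z-test comparing each segment’s false-positive rate with all other segments pooled. The sketch below is a minimal version; the counts are invented, and the z > 3.0 cutoff is a deliberately strict, illustrative choice that partly offsets testing many segments at once.

```python
import math

def two_proportion_z(x1, n1, x2, n2):
    """z statistic for H0: p1 == p2, using the pooled proportion."""
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    return 0.0 if se == 0 else (p1 - p2) / se

def segments_with_worse_fpr(counts, z_threshold=3.0):
    """counts: {segment: (false_positives, legitimate_players_reviewed)}.

    Compares each segment with all other segments pooled and returns
    {segment: z} for segments whose FPR is significantly higher.
    """
    total_fp = sum(fp for fp, _ in counts.values())
    total_n = sum(n for _, n in counts.values())
    flagged = {}
    for seg, (fp, n) in counts.items():
        rest_fp, rest_n = total_fp - fp, total_n - n
        if n == 0 or rest_n == 0:
            continue
        z = two_proportion_z(fp, n, rest_fp, rest_n)
        if z > z_threshold:
            flagged[seg] = round(z, 2)
    return flagged

counts = {"NA-en": (40, 4000), "EU-de": (22, 2000),
          "BR-pt": (45, 1500), "JP-ja": (3, 120)}
print(segments_with_worse_fpr(counts))
```

With these numbers, JP-ja has a higher raw rate than NA-en (2.5% vs 1%) but too few reviewed players to clear the bar, while BR-pt is flagged. For very small segments, an exact test such as Fisher’s is more appropriate than this normal approximation.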

Layer 3: Retraining Workflows. Build processes to retrain models when drift is detected. This might mean weekly retraining on recent data, or triggered retraining when performance metrics cross thresholds. The key is automating this—you can’t manually retrain every model in every shipped game.
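The two triggers described above, scheduled retraining and threshold-triggered retraining, can be combined in a single policy check. A minimal sketch; the `RetrainPolicy` type is hypothetical, the 28-day schedule, 0.90 floor, and 3-day cooldown are placeholder values, and the metric is whatever your monitoring layer already computes.

```python
from dataclasses import dataclass
from datetime import date, timedelta

@dataclass
class RetrainPolicy:
    """Illustrative thresholds; tune them per game and per model."""
    metric_floor: float = 0.90                  # e.g. precision on reviewed flags
    max_age: timedelta = timedelta(days=28)     # scheduled-refresh fallback
    cooldown: timedelta = timedelta(days=3)     # don't re-trigger right after a retrain

def should_retrain(today, last_trained, current_metric, policy):
    """Return (decision, reason), checking the cooldown, then the metric, then the schedule."""
    age = today - last_trained
    if age < policy.cooldown:
        return False, "cooldown"
    if current_metric < policy.metric_floor:
        return True, "metric below floor"
    if age >= policy.max_age:
        return True, "scheduled refresh"
    return False, "healthy"

policy = RetrainPolicy()
print(should_retrain(date(2024, 5, 10), date(2024, 5, 1), 0.86, policy))
# (True, 'metric below floor')
```

The cooldown keeps a noisy metric from triggering back-to-back retrains before the previous model has even reached players.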

Teams like those working on Intuit’s tax code implementation have shown that this is possible in regulated industries. Game studios can adapt these workflows to gaming contexts.

The Practical Path Forward

If you’re running AI in shipped games, you need to answer these questions immediately:

1. Which of your AI models are running on player devices?

2. How do you measure their performance in production?

3. What’s your process for detecting and responding to data drift?

4. Who owns AI model reliability in production?

If you can’t answer these questions with confidence, your AI systems are already creating security and operational risk.

The good news: fixing this doesn’t require abandoning on-device inference. It requires treating AI model reliability as a first-class security concern, the way you treat anti-cheat systems, data protection, or network security.

The bad news: you probably have less time than you think. As AI becomes more embedded in games—driving NPC behavior, adapting difficulty, moderating chat, detecting cheating—the blast radius of unmonitored data drift only grows larger.

Frequently Asked Questions

What’s the difference between data drift and model drift?

Data drift occurs when the distribution of input data changes: players behave differently, communicate differently, or play differently than they did during training. Concept drift (sometimes loosely called model drift) occurs when the relationship between inputs and outputs changes: what constitutes cheating evolves, or what counts as toxic behavior shifts. Both degrade model performance, and both require monitoring. Input-distribution checks can catch data drift without labels, but concept drift only shows up when you compare predictions against ground truth.

How often should I retrain my gaming AI models?

It depends on how quickly your game’s data distribution changes. Fast-moving games like League of Legends (with constant balance changes and meta shifts) might need weekly retraining. Slower-moving games might retrain monthly or seasonally. The key is monitoring performance and retraining when metrics degrade, not on a fixed schedule.

Can I deploy a gaming AI model and never update it?

Technically yes. Practically no. Any AI model deployed to a live game will eventually encounter data it wasn’t trained on. Performance will degrade. The question is how long until that degradation becomes noticeable to players and causes problems.

What tools can I use to monitor AI model performance in games?

Standard ML monitoring tools like Evidently, WhyLabs, and Fiddler can work with gaming data. You’ll need to instrument your game to log predictions and outcomes, then send that data to a monitoring system, for example through the analytics or telemetry pipeline you already run for Unreal or Unity builds. For smaller teams, custom dashboards using standard data tools (Python, SQL, Grafana) work fine.

Is on-device AI inference secure?

Local inference doesn’t inherently solve security problems; it creates different ones. You lose visibility into model behavior, but you also reduce latency and server costs. The key is understanding the tradeoffs and monitoring for degradation. If you choose local inference, a secure system pairs it with robust monitoring rather than treating either as sufficient on its own.

How does data drift relate to cheating?

As anti-cheat models drift, they become less effective at detecting cheating. This creates a window where cheaters can operate without detection. Conversely, as legitimate player behavior drifts away from what the model learned, the model starts false-flagging legitimate players. Both outcomes undermine competitive integrity.