Indika Developer AI Game Funding: How $5M Shapes Narrative AI

Disclosure: As an Amazon Associate, Bytee earns from qualifying purchases.

You’re mid-conversation with an NPC in a game, and they suddenly reference a choice you made three hours ago in a completely different quest—not because a programmer hardcoded it, but because the AI actually remembered you. They don’t just acknowledge it; they weave it into their dialogue naturally, with emotional weight that matches the tone of that earlier decision. This isn’t a scripted branching moment. This is a system that’s learned who you are as a player and is responding in real time. That’s the promise sitting behind the Indika developer’s freshly announced $5 million AI game funding round—and it’s reshaping what indie narrative games can actually do.

The $5M Question: Why Indika’s Developer Is Betting Big on AI for the Next Game

Indika launched in 2024 and immediately became the indie darling that proved a small studio could craft a narrative experience with the emotional resonance of a AAA title. The game’s hand-authored story, morally gray characters, and player agency in dialogue choices created a cult following that translated into critical acclaim and real commercial success. But here’s the thing: even Indika’s brilliance came with the constraints that have plagued handcrafted narratives since game writing became a discipline. Every dialogue branch had to be written. Every NPC reaction to player choices had to be scripted. Every moment of continuity, such as remembering that you sided with Character A over Character B, required a developer to anticipate that choice and write for it.

The $5 million funding announcement signals something much bigger than one studio’s next project. It’s a public bet that narrative AI has crossed a threshold where it’s no longer a novelty or a cost-cutting measure, but a genuine creative multiplier for indie developers. The gaming industry has watched Baldur’s Gate 3 prove that players will spend hundreds of hours in games where their choices feel like they matter. But Baldur’s Gate 3 required Larian Studios to employ entire rooms of writers and narrative designers working within Larian’s proprietary dialogue system. What if a five-person indie studio could approach that depth using commercial AI platforms like Inworld AI or Convai? That’s what this funding is really asking.

Player expectations have shifted post-Indika in ways that directly benefit this bet. Audiences now expect indie games to match AAA emotional sophistication. They want NPCs that feel like people, not dialogue trees. They want their choices to ripple through the world in ways that feel authored, not random. And they want that at indie price points and indie development timelines. Narrative AI—specifically systems that can generate contextual dialogue, remember player history, and build NPC personalities that feel coherent—is the only way a small team scales that ambition without burning out their writers in six months. Inworld AI’s memory systems and Convai’s voice-enabled NPC architecture are already proving this works in controlled environments.

What Indika Did Right: The Foundation for AI-Enhanced Storytelling

Before we talk about what AI can add, let’s be clear about what Indika nailed with pure human craft. The game’s strength isn’t technical; it’s emotional. Every major character feels like a person with motivations, contradictions, and a history that exists independent of the player. The dialogue is sharp, specific, and carries the weight of intentionality. When you talk to Indika’s characters, you’re not selecting from a tree of options; you’re making genuine choices about how you want to engage with someone’s problem. That’s the hardest part of game narrative to get right, and Indika’s developer proved they understood it at a granular level.

The player emotional investment mechanics in Indika work because every scene is authored with a clear purpose. A conversation isn’t just exposition or a quest marker; it’s character development, world-building, and moral complexity happening simultaneously. This is the opposite of procedural. It’s intentional. It’s shaped by a specific creative vision. But here’s where the limitations appear: scale. Indika’s story is linear enough that the developer could control every variable. You make choices, but the world doesn’t branch infinitely. Each branch can be authored because there aren’t a thousand of them. This is where the real player complaint emerges—dedicated players finish Indika and want more reactivity, more branching, more reasons to replay. The scripted approach hits a wall.

The $5 million AI funding makes sense as the next step precisely because Indika’s developer has already proven they understand narrative craft at a deep level. They’re not looking to replace their writers; they’re looking to amplify them. Where manual dialogue and scripting hit their walls is in the spaces between authored moments—the ambient NPC dialogue, the reactive comments when you walk past someone, the continuity threads that connect disparate choices across a 30-hour game. An NPC in Indika’s next project might remember your moral choices from Act One and reference them in Act Three, not because a writer anticipated that specific moment, but because an AI system trained on the developer’s dialogue patterns has internalized who you are as a player and can generate contextually appropriate dialogue on the fly. That’s not replacing authorship; that’s extending it.

Narrative AI and Procedural Storytelling: The Tech Reshaping Indie Games

Here’s where most gaming journalism gets fuzzy, so let’s be specific: narrative AI and procedural storytelling are not the same thing, and conflating them will break your understanding of what this funding actually enables. Procedural storytelling generates story beats algorithmically—think of how No Man’s Sky generates planets or how Spelunky generates dungeon layouts. It’s rule-based, mathematically elegant, and totally soulless. Narrative AI, by contrast, uses large language models (LLMs) like GPT-4 or custom-trained models to generate dialogue and story content that mimics human writing patterns. The difference is critical.

Old dialogue trees (the Bethesda Oblivion model that dominated RPGs for two decades) worked like a flowchart. You’re at Node A, the NPC says “Line 1A,” you pick from four dialogue options, and the game branches to Node B, C, D, or E. Every path was hardcoded. Every line was written by a human. Scaling this system meant exponential writing work, because each extra layer of choice multiplies the lines that have to exist. In a game with 100 NPCs, each with 10 major interactions, you’re looking at 1,000 authored moments. Add player choice into that, and the numbers explode. Narrative AI flips this: you write the character’s voice, personality, and history once using a tool like Inworld AI or Character.AI. The AI generates dialogue that fits that voice, responding to player input and game state in real time. A studio like Larian reaches comparable reactivity in Baldur’s Gate 3 through sheer volume of hand-written, hand-tested content. Indie studios lack that infrastructure, which is why commercial platforms matter.
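
To make the flowchart model concrete, here is a minimal sketch of a hardcoded dialogue tree in Python. The node ids, lines, and the `authored_lines` helper are illustrative assumptions, not code from any shipped game; the helper assumes a full tree in which branches never merge, which is the worst case for writing workload.

```python
from dataclasses import dataclass, field


@dataclass
class DialogueNode:
    """One hardcoded NPC line plus the player replies that branch from it."""
    npc_line: str
    # Maps the player's reply text to the id of the next node.
    choices: dict[str, str] = field(default_factory=dict)


# A tiny hand-authored tree: every line and every edge is written by a person.
TREE = {
    "A": DialogueNode("Line 1A: What brings you here?",
                      {"I need work.": "B", "Just passing through.": "C"}),
    "B": DialogueNode("Line 1B: The mill is hiring."),
    "C": DialogueNode("Line 1C: Then keep moving."),
}


def next_node(current_id: str, reply: str) -> str:
    """Follow the edge for the chosen reply; unknown replies raise KeyError."""
    return TREE[current_id].choices[reply]


def authored_lines(options_per_node: int, depth: int) -> int:
    """NPC lines needed for a full tree of the given depth where no branches merge."""
    return sum(options_per_node ** level for level in range(depth + 1))
```

With four options per node and three layers of choices, `authored_lines(4, 3)` is 1 + 4 + 16 + 64 = 85 lines for a single conversation, which is why real games merge branches aggressively.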

Procedural narrative vs. hand-written is actually a spectrum, and the smart move—the one Indika’s developer is likely pursuing—is a hybrid. You write the major story beats, the character arcs, the thematic throughlines. The AI fills in the connective tissue: ambient dialogue, reactive comments, memory callbacks, personality flourishes. This is what players experience as “the NPC remembered me” moments. The developer built the foundation, but the AI generates the texture. Dynamic NPC memory systems like those in Inworld AI let players feel like their presence in the world matters without requiring the developer to write a thousand branching paths. Character.AI and Convai have both proven this works in controlled environments. The question is whether indie studios can integrate it without their games becoming uncanny or losing narrative coherence.

How AI Changes What You See and Feel in a Game Like Indika’s Next Project

Before and After: A Concrete Example

Before (Traditional Scripted System): In Indika, you make a choice in Act One to help Character A’s rival. The developer anticipated this choice and wrote a specific dialogue line for it. Forty hours later, you run into a minor NPC. If the developer didn’t specifically write for the scenario where you sided with the rival, that NPC will either: (a) act like they don’t know about your choice, breaking immersion, or (b) trigger a generic branching response the developer prepared. If you chose a dialogue option the developer didn’t anticipate, the NPC’s reaction feels jarring or doesn’t acknowledge your actual choice. The world feels authored only where the developer predicted your behavior.

After (AI-Enhanced System): In a hypothetical Indika follow-up powered by Inworld AI or a similar platform, that same choice gets logged not just as a flag in the code, but as a memory in the NPC’s personality model. Forty hours later, you run into a minor character who knows about that choice. The system generates a contextually appropriate response: the NPC mentions the rivalry, maybe expresses skepticism about your loyalty, maybe offers you information based on their understanding of your allegiance. The dialogue feels authored because the AI has learned the character’s voice from the training data, and it’s responding to your actual history in the game, not a predetermined branch. The NPC might say something the developer never explicitly wrote, but it fits the character because the system has been conditioned on that character’s values and speech patterns.
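
The difference between a flag and a memory can be sketched in a few lines of Python. Everything here is hypothetical: `Memory`, `recall`, and `build_context` are illustrative names, not the API of Inworld AI or any other platform, and the actual model call is deliberately left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Memory:
    """A remembered player action, stored as text rather than a boolean flag."""
    hour: float        # in-game hours elapsed when it happened
    summary: str       # what happened, in plain language
    tags: frozenset    # who or what the event involved


def recall(memories, topic_tags, limit=3):
    """Return the most recent memories that share a tag with the current topic."""
    relevant = [m for m in memories if m.tags & set(topic_tags)]
    return sorted(relevant, key=lambda m: m.hour, reverse=True)[:limit]


def build_context(character_sheet, memories, topic_tags):
    """Assemble the text a dialogue model would receive (the model call is omitted)."""
    lines = [f"Character: {character_sheet}", "Relevant player history:"]
    lines += [f"- (hour {m.hour:g}) {m.summary}" for m in recall(memories, topic_tags)]
    return "\n".join(lines)


log = [
    Memory(1.5, "Player helped the rival of Character A.",
           frozenset({"character_a", "rival"})),
    Memory(12.0, "Player bought bread at the market.", frozenset({"market"})),
]
context = build_context("Wary innkeeper, loyal to Character A.", log, {"character_a"})
```

The resulting `context` contains the Act One choice but not the bread purchase, so a generator conditioned on it has what it needs to mention the rivalry without a writer having scripted that exact encounter.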

This is where immersion gains become tangible. NPCs remembering your past choices and referencing them naturally creates what game design calls “narrative coherence”—the feeling that the world is consistent and that your actions have weight. In traditional scripted games, this is brittle. Miss one dialogue option, and the NPC’s memory breaks. With AI, the system is flexible. It can generate dialogue that acknowledges your choice even if the developer didn’t specifically write for that moment. Dialogue that reacts to player emotion or playstyle becomes possible because the AI can analyze your recent actions and respond accordingly. If you’ve been playing aggressively, NPCs might treat you with wariness. If you’ve been diplomatic, they might open up. This isn’t new—games like Fallout: New Vegas did this with scripting—but AI makes it scalable for indie teams without requiring hundreds of conditional branches.

The emotional weight difference is significant. When an NPC in a traditional game references your choice, you know a developer wrote that moment specifically. It feels hand-crafted but limited. When an NPC in an AI-enhanced game references your choice in a way you didn’t anticipate, it feels like the world is actually responding to you—like the NPC is a real person adapting to your presence. That sense of being seen by the world is immersion-enhancing. The uncanny-valley risk is real—AI can generate weird, out-of-character responses if the system isn’t tuned properly—but Indika’s developer has proven they understand character voice at a deep level, which means they’re equipped to train and guide an AI system to stay within their intended tone.

The Indie Game AI Funding Boom: What This $5M Means for the Whole Sector

Indika’s developer landing $5 million for AI-enhanced narrative is not an isolated event; it’s part of a broader shift in indie game funding. Over the past 18 months, companies like Latitude (AI Dungeon), Convai, and Inworld AI have raised tens of millions specifically to build tools that make narrative AI accessible to developers who can’t afford to hire a team of writers. Meanwhile, indie studios are quietly integrating these tools. The teams behind games like Terraforma or Wildermyth, already known for emergent narrative, are natural candidates to explore how AI can expand their dialogue systems without losing the intentionality that makes their games special.

The tools are becoming commodified and accessible. Inworld AI offers character creation and dialogue generation that integrates with Unity. Character.AI shows how far persona-driven chat can go, though developers should confirm what programmatic access it currently offers before planning a game around it. Convai focuses on voice-enabled NPC interactions powered by large language models. What these platforms share is a recognition that indie teams need narrative AI that doesn’t require custom training on massive datasets. You should be able to describe a character, set their values and voice, and have the system generate dialogue that fits. The adoption curve is accelerating because the barrier to entry has collapsed. Five years ago, you needed machine learning engineers and months of training data to get an NPC to generate coherent dialogue. Now, you can prototype it in a weekend with a commercial platform.

This is where AAA and indie approaches are diverging. AAA studios like Obsidian or Insomniac have the resources to build custom AI solutions, fine-tuned for their specific games, with human writers looped in at every step. Indie developers are adopting commercial platforms—Inworld, Character.AI, Convai—that offer standardized solutions. The indie approach is faster, cheaper, and more accessible, but it also means indie games might start to sound similar if they’re all using the same underlying AI model. The funding boom is essentially a race to solve that problem: how do you build narrative AI tools that are accessible and affordable but still allow for creative differentiation and authorial voice?

Concrete Examples: Games Already Using Narrative and NPC AI

AI Dungeon launched in 2019 and became the proof-of-concept for narrative AI in games, even if the execution was rough. The game uses GPT-2 and GPT-3 to generate story text based on player prompts. The results are wildly uneven—sometimes brilliant, often nonsensical—but the core mechanic proved that players would engage with AI-generated narrative if the framing was right. AI Dungeon’s strength is openness; you can do anything, and the AI tries to generate a response. Its weakness is coherence; the AI has no long-term memory of the story you’re building, so narratives often collapse into incoherence after a few turns. Still, millions of players have used it, and that adoption curve matters. The player complaint is consistent: AI Dungeon feels creative but unreliable, brilliant one moment and nonsensical the next.

Baldur’s Gate 3, Larian’s massive RPG, is the benchmark for reactive dialogue, and it got there with human writers working inside a tightly controlled framework. Larian has not publicly described generative AI as part of the game’s shipped writing; every line was written, reviewed, and approved by people. The result feels authored because it is authored. The lesson for AI-assisted pipelines is the editorial one: any AI used in development should amplify the writer’s work, not replace it, and what ships should be human-curated content. This is the model indie developers should aspire to.

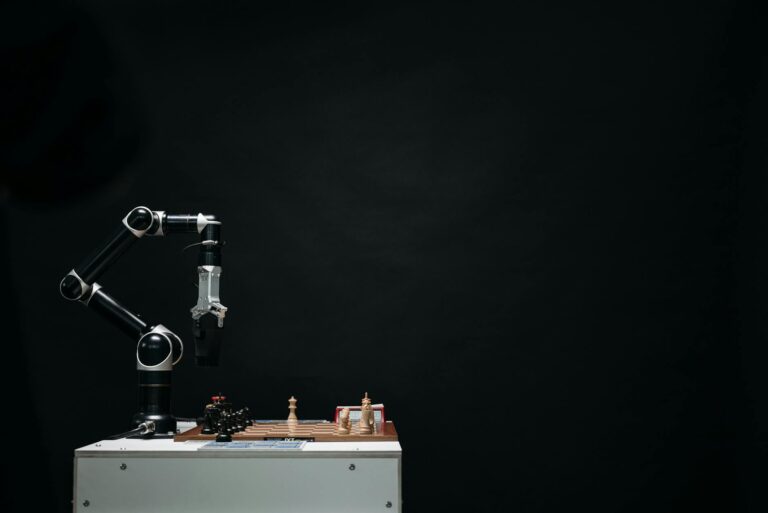

Creatures, a 1996 game that predates modern large language models by decades, pioneered emergent creature behavior by combining neural-network brains with simulated biochemistry and digital genetics. Each creature had a simple brain that learned from experience. The result was NPCs that felt alive because they had internal goals and limitations independent of the player. Creatures is often cited as the spiritual predecessor to modern narrative AI because it proved that players will invest emotionally in characters that feel autonomous, even if that autonomy is generated rather than scripted. The difference between Creatures and modern narrative AI is scale: Creatures could only handle a handful of creatures with limited behavior. Modern AI can handle dozens of NPCs with complex dialogue and memory.

Recent indie demos with AI NPCs are where the real signal is. Studios experimenting with Inworld AI and Character.AI have shown that you can create NPCs that remember player choices, react to player emotion, and generate contextually appropriate dialogue without losing character voice—as long as you invest in training the system properly. These aren’t shipping games yet, mostly because developers are still figuring out how to integrate AI dialogue into their broader narrative structures without it feeling tacked on. But the player experience is noticeably different from traditional scripted games. When an NPC references something you did three hours ago without the developer having written that specific moment, the sense of presence is uncanny—in the good way.

The Real Risks: Where Procedural Storytelling Can Fail Players

Let’s be honest: AI-generated dialogue can be terrible. AI hallucination, the tendency of language models to generate plausible-sounding but false content, is a real problem for any game that ships generated text. Tone failures are a related risk: Microsoft’s Tay chatbot, released in 2016, started making offensive statements within a day because it learned from unfiltered user interactions on Twitter. In a game context, these failures mean an NPC suddenly says something completely out of character, references events that didn’t happen, or makes statements that break immersion. A character might claim they witnessed something the player knows they didn’t. The dialogue might shift tone mid-conversation. These moments are immersion-breaking in ways that a scripted game’s bugs aren’t, because players expect AI to be unpredictable, but not *this* unpredictable. This is the primary risk developers using Inworld AI or Character.AI face: the AI generating something that contradicts established lore or character.
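
One cheap guard against the "NPC claims to have witnessed something" failure is a knowledge check before a generated line is shown. The sketch below is an assumption about how a team might do this, not a feature of any named platform: `glossary` is a hand-maintained set of lore terms, `npc_knows` is the subset this NPC is allowed to reference, and a failed check falls back to an authored line.

```python
def unknown_references(line: str, glossary: set[str], npc_knows: set[str]) -> set[str]:
    """Glossary terms mentioned in the line that this NPC has no way of knowing."""
    lowered = line.lower()
    mentioned = {term for term in glossary if term.lower() in lowered}
    return mentioned - npc_knows


def choose_line(generated: str, fallback: str,
                glossary: set[str], npc_knows: set[str]) -> str:
    """Ship the generated line only if it stays inside what the NPC knows."""
    return fallback if unknown_references(generated, glossary, npc_knows) else generated


GLOSSARY = {"the fire at the mill", "Character A"}
INNKEEPER_KNOWS = {"Character A"}

safe = choose_line("I saw the fire at the mill myself.",
                   "Can't say I know much about that.",
                   GLOSSARY, INNKEEPER_KNOWS)
```

Here `safe` is the authored fallback, because the innkeeper is not supposed to know about the fire. A substring check like this only catches terms already in the glossary; it cannot detect events the model invents from scratch, so it complements human review but does not replace it.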

Loss of authorial voice and intentionality is a subtler risk. A hand-authored game like Indika carries the fingerprints of its creators. Every line of dialogue, every character moment, every thematic beat has been shaped by humans with a specific vision. AI-generated content, even when well-tuned, has a tendency toward blandness. It averages toward the mean. It’s good at generating competent dialogue but struggles with the specific, weird, memorable moments that make a story stick with you. If Indika’s next project leans too heavily on AI generation, it might lose the distinctive voice that made the original special. The risk is that the game becomes technically impressive—NPCs remember you, dialogue is reactive—but emotionally hollow. Players would feel seen by the system but not moved by the story.

Unpredictable NPC behavior breaking immersion is the flip side of autonomy. If an NPC’s dialogue is generated rather than scripted, it can do things the developer didn’t anticipate. It might make logical jumps that don’t make sense within the game’s world. It might reference things that don’t exist in the game. It might generate dialogue that contradicts earlier statements because the AI doesn’t have perfect memory of previous conversations. These aren’t bugs; they’re features of how large language models work. They’re probabilistic systems, not deterministic ones. In a game context, that unpredictability can feel like the game is broken rather than alive. A player might ask an NPC a question and get a response that makes no sense in context, then ask the same NPC the same question five minutes later and get a completely different (also nonsensical) response.

Performance costs on smaller platforms are real and non-trivial. Running inference on a language model—generating dialogue in real time—is computationally expensive. A Nintendo Switch or mobile game can’t do this locally without serious optimization. That means relying on cloud APIs like those offered by Inworld AI or Convai, which introduces latency and requires internet connectivity. A player’s NPC conversation might have a half-second delay while the dialogue is being generated on a server. That’s a UX problem that breaks immersion. For indie developers targeting multiple platforms, this is a major constraint. You can’t ship an AI-driven game on Switch without solving the latency problem, and that’s technically non-trivial. This is why most AI-enhanced games are currently PC and console-only.
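
One common way to keep a slow server from stalling a conversation is a latency budget with an authored fallback. The following is a minimal sketch using Python's standard `concurrent.futures`; `generate` stands in for whatever cloud call a game would make, and the 0.3-second default budget is an arbitrary assumption, not a recommended value.

```python
import concurrent.futures
import time

_POOL = concurrent.futures.ThreadPoolExecutor(max_workers=4)


def line_within_budget(generate, fallback: str, budget_s: float = 0.3) -> str:
    """Return generate()'s result if it arrives within budget_s, else the fallback."""
    future = _POOL.submit(generate)
    try:
        return future.result(timeout=budget_s)
    except concurrent.futures.TimeoutError:
        # The request keeps running in the background; its result is discarded.
        return fallback


def slow_generate() -> str:
    """Stand-in for a cloud request that takes too long."""
    time.sleep(0.5)
    return "A generated line that arrived too late."


reply = line_within_budget(slow_generate, "Hm. Give me a moment.", budget_s=0.1)
```

In this run `reply` is the authored filler line, because the simulated request takes longer than the budget. The player still pays the budget itself as a delay, so this hides worst-case latency instead of removing it, and any exception raised by `generate` would still propagate to the caller.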

The player agency paradox is perhaps the deepest risk: more AI-generated content can paradoxically feel like less player agency. If an NPC’s dialogue is generated based on the player’s choices, but the player doesn’t understand the system’s rules, the dialogue might feel random. “Why did the NPC say that?” If the player can’t predict or understand how the system works, they can’t make informed choices about how to interact with it. This is why the best narrative AI implementations—the ones shipping in AAA games—have human writers looping in to ensure the AI-generated dialogue is coherent and predictable within the game’s logic. Without that editorial layer, AI dialogue systems can feel opaque and frustrating.

What Game Developers Actually Need From AI Tools Right Now

If you ask indie developers what they need from narrative AI tools, the answers are surprisingly consistent. First: dialogue generation that respects character voice. The system needs to understand that Character A is sarcastic and world-weary while Character B is earnest and optimistic, and it needs to generate dialogue that preserves those voices even when responding to novel situations. This is harder than it sounds. Most commercial dialogue generation tools work by training on examples, and the quality of those examples directly determines the quality of the output. A developer needs to be able to feed the system their character’s dialogue and have it learn that voice, not just generate generic NPC chatter. Inworld AI’s character card system attempts this, but the results are only as good as the training data developers provide.

NPC behavior systems that feel authored is the second big need. Developers want NPCs that have goals, schedules, and internal logic that feels intentional. An NPC shouldn’t just generate dialogue randomly; they should have reasons for saying what they say. They should have a daily routine. They should care about things. This requires more than dialogue generation; it requires a system that models NPC state—what they know, what they want, what they’ve learned from the player. Tools like Inworld AI are moving in this direction with their memory and relationship systems, but most indie developers are still figuring out how to integrate these systems into their engines without losing performance or narrative control.
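
As a rough illustration of what "modeling NPC state" means, here is a small Python sketch. The class and all of its fields are hypothetical and far simpler than a production system; the point is only that goals, schedule, knowledge, and attitude live in data that dialogue generation can be conditioned on.

```python
from dataclasses import dataclass, field


@dataclass
class NPCState:
    """What an NPC knows, wants, and feels about the player (all fields hypothetical)."""
    name: str
    goal: str
    schedule: dict[int, str] = field(default_factory=dict)  # hour of day -> activity
    known_facts: set[str] = field(default_factory=set)
    trust: int = 0  # rises and falls with the player's actions

    def activity_at(self, hour: int) -> str:
        """Most recent scheduled activity at or before the given hour."""
        started = [h for h in self.schedule if h <= hour % 24]
        return self.schedule[max(started)] if started else "resting"

    def observe(self, fact: str, trust_delta: int = 0) -> None:
        """Record something the NPC learned from or about the player."""
        self.known_facts.add(fact)
        self.trust += trust_delta

    def reason_to_speak(self) -> str:
        """A one-line motive a dialogue generator could be conditioned on."""
        mood = "openly" if self.trust > 0 else "guardedly"
        return f"{self.name} wants to {self.goal} and speaks {mood}."


mara = NPCState("Mara", "sell her harvest", {6: "farming", 18: "at the tavern"})
mara.observe("The player repaid an old debt.", trust_delta=2)
```

After the `observe` call, `mara.reason_to_speak()` describes her as speaking openly, and `mara.activity_at(20)` places her at the tavern. Feeding a motive like this into generation is one way to give an NPC a reason for what they say.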

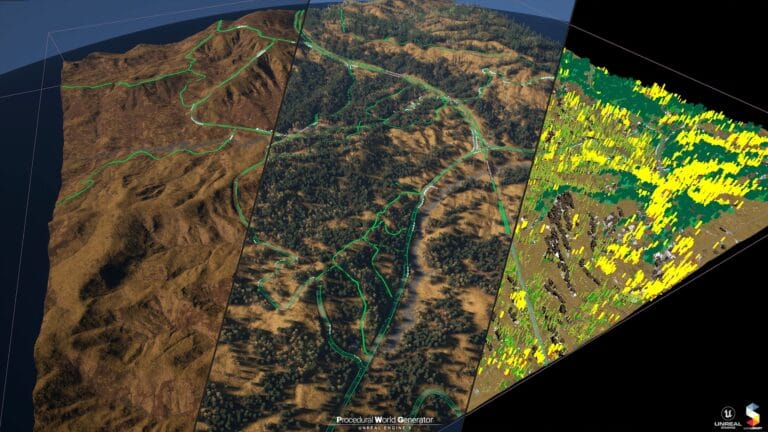

Tools integrated into Unity and Unreal are non-negotiable. Most indie games are built in one of these two engines. A narrative AI tool that requires custom integration or doesn’t have good SDK support is a non-starter for small teams. Convai has done well here with strong Unreal support. Inworld has good Unity integration. But the bar is still higher than it should be; a developer shouldn’t need to be a software engineer to integrate AI dialogue into their game. The integration process is still too manual, requiring developers to handle API calls, dialogue streaming, and state management themselves.

Cost and latency constraints shape every decision. Indie developers don’t have AAA budgets, so the cost per NPC interaction matters. If running dialogue generation costs $0.001 per line, and your game has 100 NPCs with 50 interactions each, that’s 5,000 generated lines, or about $5, for every full playthrough before optimization. Across 1,000 players that becomes $5,000, and the bill keeps growing with every additional player and replay. Latency is equally critical. A half-second delay between a player selecting a dialogue option and the NPC responding feels broken. Cloud-based dialogue generation introduces that latency. Local inference on consumer hardware is possible but requires serious optimization. Developers need tools that are both affordable and fast, which is why local models are becoming more attractive even if they’re less sophisticated than cloud-based GPT-4.
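
The cost arithmetic is worth checking explicitly, since it scales with players and not just with content. This sketch treats the price and counts quoted above as illustrative inputs and assumes every interaction triggers exactly one paid generation; `runtime_cost` is a hypothetical helper, not a platform pricing formula.

```python
def runtime_cost(npcs: int, interactions_per_npc: int,
                 price_per_line: float, playthroughs: int = 1) -> float:
    """Total generation spend if every interaction triggers one paid line."""
    return npcs * interactions_per_npc * price_per_line * playthroughs


per_playthrough = runtime_cost(100, 50, 0.001)
per_thousand = runtime_cost(100, 50, 0.001, playthroughs=1000)
print(f"${per_playthrough:.2f} per playthrough, ${per_thousand:,.2f} per 1,000 playthroughs")
```

At $0.001 per line, 100 NPCs with 50 interactions each come to about $5 per full playthrough, so a $5,000 bill corresponds to roughly 1,000 playthroughs. Caching repeated lines or falling back to authored dialogue reduces the number of paid calls, which is the lever a small team actually controls.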

Training data ownership is the legal and ethical minefield nobody talks about enough. When you feed your game’s dialogue into a commercial AI tool to train a custom model, who owns that data? Can the platform use it to train their base model? Can you retrieve it later? Can you migrate to a different platform? These questions matter for indie studios building long-term IP. If your game’s dialogue becomes part of a platform’s training data, and that platform shuts down or changes terms, you lose access to your own creative work. Developers need clear contracts and data ownership guarantees before they commit to any platform.

The Next Frontier: Where Indika’s Developer and Peers Are Headed

Indika’s developer hasn’t released a detailed roadmap for their next project, but the $5 million funding and the trajectory of narrative AI in indie games suggest a clear direction. The next frontier is AI-assisted world-building and quest generation. If dialogue can be generated, why not quests? Why not the ambient narrative that fills a game world: the books on shelves, the overheard conversations, the notes that tell environmental stories? A developer could define the world’s rules, tone, and themes, and have an AI system generate thousands of small narrative moments that fill the space between major story beats. This is already being explored in tools like Latitude’s AI Dungeon and in experiments built on general-purpose language models from AI labs such as Anthropic.

Hybrid human-AI narrative design is the likely sweet spot. Developers write the major story, define character voices and world rules, then use AI to generate dialogue and ambient narrative. The developer reviews the outputs, edits for quality and coherence, and ships the refined version. This is more labor-intensive than pure procedural generation but less labor-intensive than hand-writing every line. It’s also where the creative control stays with the human developers while AI amplifies their output. Studios like Obsidian and Larian are already experimenting with this approach, and indie developers will follow once the tools mature. This is the model that preserves authorial voice while enabling scale.

Multiplayer and social AI is the longer-term frontier. What if NPCs in a multiplayer game could respond to groups of players differently? What if an NPC could generate dialogue that acknowledges the party’s composition and recent actions? This is technically hard—it requires real-time dialogue generation that considers multiple player states—but the player experience would be radically more immersive. Imagine a party of three players in a fantasy RPG, and every NPC interaction is tailored to your specific group’s history and composition. That’s the kind of scale narrative AI could enable for indie MMOs or co-op games. Convai’s multiplayer support is a step in this direction.

The bet Indika’s developer is making—and the one the broader indie dev community is following—is that narrative AI has matured enough to be a creative tool rather than a gimmick, and that players will feel the difference between a game that uses AI to amplify human authorship and a game that uses AI as a shortcut for actual writing.

Player Concerns and the Case for Transparency

Will AI writing feel soulless, more generic, and less emotionally resonant than hand-authored stories? This is the question every player asks when they hear about narrative AI in games. The honest answer is: it depends on implementation. AI-generated dialogue can feel generic if the system isn’t properly constrained to a character’s voice and the developer doesn’t edit the outputs for quality. But AI-generated dialogue can also feel authentic if it’s trained on strong examples and the developer uses it to expand on already-authored material. The risk is real, but it’s not inevitable. Games like Baldur’s Gate 3 show what the target is: reactive dialogue that feels emotionally true because human writers controlled every line, and AI-assisted dialogue has to clear that bar. The key is that the human developers remain in control of the overall narrative direction and character voice.

How much procedural is too much is a design question, not a technical one. A game that’s 80% AI-generated dialogue and 20% hand-authored story beats will feel different from a game that’s 20% AI-generated and 80% authored. The first might feel incoherent and generic. The second might feel rich and expansive. Developers need to be thoughtful about where they deploy AI and where they invest in hand-authored content. The major story moments, character arcs, and thematic beats should be authored. The ambient dialogue, reactive comments, and connective tissue can be AI-generated. This balance is where the best results will come from.

Player preference for human-authored stories is real. When players know a story was hand-written by humans, they tend to engage with it differently than when they know it was procedurally generated. That is a matter of perception, not a technical limitation. It means developers have a choice: either they can use AI transparently and let players know which parts are AI-generated (which might hurt immersion), or they can hide the AI and hope players don’t notice (which risks backlash if discovered). The smart move is transparency with quality assurance. If the AI-generated dialogue is indistinguishable from authored dialogue, disclosure costs little. If it’s noticeably different and undisclosed, players will resent the deception.

Data privacy in AI-driven games is a real concern that hasn’t been addressed adequately by the industry. If a game is using cloud-based dialogue generation through Inworld AI or Convai, every conversation the player has with NPCs is being sent to a server. That data could be logged, analyzed, used to train future models, or sold to third parties. Players should know this is happening. They should have the option to opt out or play offline. Current privacy practices in gaming are already loose; adding AI dialogue systems makes the problem worse. Developers need to be transparent about data collection and give players control.

Disclosure of AI use in credits is a minimum standard that should become industry practice. If a game uses AI to generate dialogue, that should be disclosed—either in the credits or in a pre-release statement. Players deserve to know whether the dialogue they’re reading was written by humans or generated by machines. This isn’t about shaming AI use; it’s about informed consumption. Just like we disclose motion capture or voice acting, we should disclose AI generation. The game industry is already moving toward this—studios like Latitude have been transparent about AI use in AI Dungeon—but it needs to become universal practice.

Frequently Asked Questions

Does narrative AI make game stories feel more immersive or more generic and random?

It depends entirely on implementation. Baldur’s Gate 3 sets the bar: its dialogue feels seamless and emotionally authentic because Larian’s writers authored and reviewed every line within specific character voices. In AI Dungeon, dialogue is often nonsensical because the system has no character framework and no human editorial oversight. The sweet spot is hybrid: developers write character voice and story structure, AI generates dialogue and fills connective tissue, developers review and refine. When done well, players feel more immersed because NPCs remember them and react naturally. When done poorly, it feels generic and random.

Which indie games are already using AI for dialogue and NPC behavior?

AI Dungeon has been using GPT-based text generation since 2019, though results are uneven. Several indie studios are experimenting with Inworld AI and Convai for NPC dialogue, but most haven’t shipped publicly yet—they’re in prototype or early access phases. Latitude (makers of AI Dungeon) is the most visible example of AI narrative in an indie game, but the space is still early. Most shipped games are still using traditional scripted dialogue, though that’s changing rapidly as tools mature and become more accessible.

Will procedural storytelling replace human game writers and narrative designers?

No—but it will change what writers do. AI is good at generating competent dialogue and filling narrative spaces, but it’s not good at creating the specific, emotionally resonant, thematically coherent moments that make stories memorable. The writers who thrive will be those who understand how to use AI as a tool—defining character voice, story structure, and thematic direction, then using AI to amplify their work. Writers who only know how to write individual dialogue lines will struggle. The job is evolving, not disappearing.

How much does it cost indie developers to integrate narrative AI into their games?

Integration costs vary widely, so treat the numbers here as rough estimates and check each platform’s current pricing. Using a commercial platform like Inworld AI or Convai typically runs $100-$500 per month for development access, plus per-interaction costs when the game is live (usually $0.001-$0.01 per dialogue generation). At those rates, a game with 100 NPCs and 10,000 interactions per player would cost $10-$100 per player, or $10,000-$100,000 in runtime fees per 1,000 players, so the number of generated lines per player has to be budgeted carefully. Custom implementation is much more expensive up front (tens of thousands of dollars) but gives more control. For indie developers, the commercial platforms are the only affordable option right now.

Can AI-generated dialogue actually capture the emotional depth of hand-written scenes?

AI can generate dialogue that feels emotionally appropriate if it’s trained on strong examples and constrained to a clear character voice. But true emotional depth—the kind that makes you remember a scene months later—requires intentionality and specificity that AI struggles with. AI averages toward the mean; humans can create outliers. The best approach is hybrid: humans write the major emotional beats and character arcs, AI generates supporting dialogue and reactions. That way you get the specificity and intentionality of human writing with the scale and flexibility of AI generation.