Glen Schofield: You Have to Be Creative or Get Replaced by AI

Glen Schofield argues that creatives will save AAA gaming. But here’s the technical reality: he’s simultaneously warning artists that understanding AI architecture, latent space manipulation, and neural network constraints isn’t optional anymore. It’s a paradox that defines the current moment in gaming: AI as both a democratizing force and a filter that demands new technical literacy from creators.

The Paradox of “Creative AI”: What Schofield Actually Means

When Schofield talks about creatives saving AAA gaming, he’s not invoking romantic notions of pure artistic talent. The gaming industry is spiraling into a cost-and-timeline crisis with measurable technical consequences. AAA development budgets have ballooned to $150-300 million for flagship titles, with production cycles stretching to 5+ years. GPU and compute infrastructure costs alone now represent 15-20% of pre-production budgets.

Something has to give, and AI is the pressure release valve most studios are reaching for—not because it’s magic, but because the math is brutal.

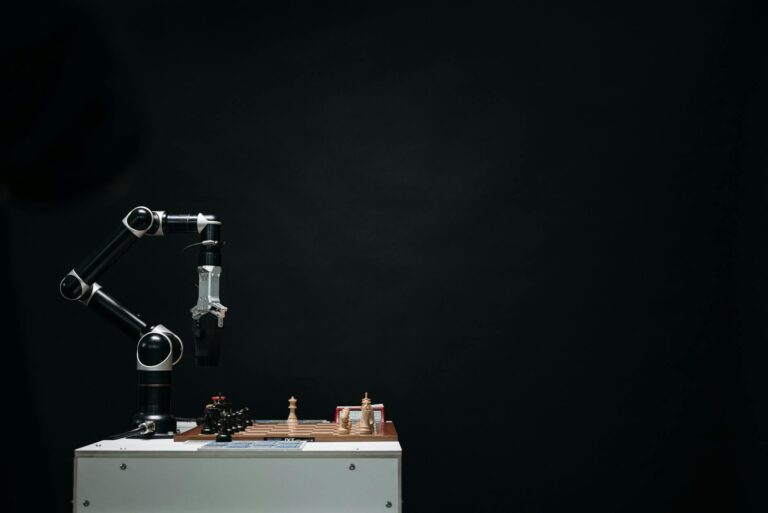

What Schofield’s actually articulating is this: the future belongs to creative directors and artists who can think in terms of neural network outputs, latent space navigation, and procedural constraints while maintaining artistic intent. The distinction is critical. An AI system, whether it’s a diffusion model producing 3D assets, a large language model (LLM) like GPT-4 writing dialogue trees, or a neural procedural generation system building game worlds, operates on mathematical patterns extracted from training data through backpropagation and gradient descent.

It’s computationally powerful. It’s efficient at pattern completion. But it has no intentionality, no narrative drive, no understanding of why a specific visual choice serves the story’s emotional arc.

A true creative, in Schofield’s framework, is someone who understands both the artistic vision and the technical mechanics of AI tooling. They can prompt a latent diffusion model to produce concept art within a specific aesthetic range, recognize which outputs align with the game’s visual language, and refine the direction iteratively by understanding how prompt engineering, negative prompts, and seed manipulation affect output.

They understand that neural procedural generation can create infinite variations of a forest through learned patterns, but a human director must decide which forest—which specific arrangement of trees, lighting, and ambient detail—feels right for Act Two’s emotional climax. That decision isn’t something a neural network can make because it requires understanding narrative context, player psychology, and intentional deviation from statistical norms.

Tech Under the Hood: How AI Actually Works in Game Development

Let’s break down what’s actually happening when studios integrate AI into their pipelines, because the marketing speak obscures the real mechanics and computational realities.

Generative Models and Asset Creation

When studios use AI to generate 3D models, textures, or concept art, they’re typically leveraging diffusion models (like Stable Diffusion) or transformer-based architectures trained on massive datasets of visual content. These models operate through a two-stage process: training and inference. During training, the network learns to map from high-dimensional pixel space to a compressed latent space where semantic features cluster.

At inference, you input a text prompt, which gets tokenized and passed through a text encoder to produce an embedding. The model then starts from random noise in the latent space and iteratively denoises it: at each step it predicts the noise to remove, conditioned on the text embedding. This typically happens over 20-50 steps, with each step reducing noise and refining detail.
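The shape of that inference loop can be sketched in a few lines. This is a toy, not a real diffusion model: `toy_noise_predictor` is a hypothetical stand-in for the trained network (it cheats by knowing the target), and the four-value "image" and the step schedule are assumptions chosen so the example runs with only the standard library. What it does show is the structure: start from seeded random noise, then repeatedly subtract a predicted noise estimate over a fixed number of steps.

```python
import random

def toy_noise_predictor(x, target):
    """Hypothetical stand-in for the trained network: returns the 'noise' in x.

    A real model estimates this from the noisy input plus the text embedding;
    here we cheat and compute it from a known target.
    """
    return [xi - ti for xi, ti in zip(x, target)]

def denoise(target, steps=30, seed=0):
    rng = random.Random(seed)              # same seed -> same starting noise
    x = [rng.gauss(0.0, 1.0) for _ in target]
    for step in range(steps):
        predicted_noise = toy_noise_predictor(x, target)
        # Remove a fraction of the predicted noise; the fraction grows each
        # step so the final step removes whatever is left.
        fraction = 1.0 / (steps - step)
        x = [xi - fraction * ni for xi, ni in zip(x, predicted_noise)]
    return x

target = [0.2, 0.8, 0.5, 0.1]              # pretend "clean image" of 4 values
result = denoise(target, steps=30, seed=42)
error = max(abs(r - t) for r, t in zip(result, target))
print(f"max error after denoising: {error:.2e}")
```

In a real pipeline the predictor is a large neural network and the target is unknown, which is exactly why the seed and the prompt matter: they are the only levers the artist has over where the loop ends up.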

The key technical insight: this is probabilistic pattern completion operating in learned feature space, not creativity. The model has never “understood” a tavern; it’s learned correlations between tokens (text embeddings) and visual features from its training dataset. A skilled concept artist can recognize which outputs capture the intended mood, understand why the AI’s latent space clustered certain visual elements together, and refine the generation by adjusting prompts or using inpainting techniques to modify specific regions.

An unskilled artist might accept the first acceptable output—and the difference in intentionality shows immediately in the final game’s visual cohesion.

The production reality: AI asset generation saves 30-40% of iteration time on formulaic assets (generic rocks, trees, background props) but requires 15-25% more time on hero assets (character models, key environmental pieces) because they demand visual consistency, rigging compatibility, and narrative purpose that the neural network can’t guarantee. Studios that actually achieve net time savings are those using AI for the 60% of assets that are functionally interchangeable.
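To see how those ranges net out, here is a back-of-the-envelope sketch. It assumes every asset costs the same baseline time, which the figures above do not state and which is almost certainly too generous to hero assets; `net_time_change` is a hypothetical helper, not an industry formula.

```python
def net_time_change(formulaic_share, formulaic_saving, hero_overhead):
    """Net change in total asset time as a fraction of the non-AI baseline.

    Negative means time saved. Assumes every asset costs the same baseline
    time, which is a simplification made for illustration.
    """
    hero_share = 1.0 - formulaic_share
    return -formulaic_share * formulaic_saving + hero_share * hero_overhead

# Ranges quoted above: 60% formulaic assets, 30-40% saving on those,
# 15-25% extra time on hero assets.
midpoint = net_time_change(0.60, 0.35, 0.20)
best = net_time_change(0.60, 0.40, 0.15)
worst = net_time_change(0.60, 0.30, 0.25)

# A studio that keeps AI away from hero assets pays no hero overhead.
formulaic_only = net_time_change(0.60, 0.35, 0.0)

print(f"midpoint: {midpoint:+.1%}, best: {best:+.1%}, worst: {worst:+.1%}")
print(f"AI on formulaic assets only: {formulaic_only:+.1%}")
```

Under these assumptions the blended result is a saving of roughly 8-18%, while restricting AI to the interchangeable assets lands closer to 21%. If hero assets take more baseline time per asset than props do, the blended number shrinks further.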

Procedural Generation and World Building

Procedural generation isn’t new—games like Minecraft and No Man’s Sky have been using rule-based algorithms for years—but modern neural procedural systems are architecturally different. They use neural networks trained on level design principles to generate playable spaces that satisfy both aesthetic and mechanical constraints simultaneously.

A neural procedural system trained on Souls-like dungeon layouts might generate a space that’s not only visually coherent but also contains logical enemy placement, loot distribution, encounter pacing, and sightline management that satisfies both level designer intent and player navigation psychology.

The technical limitation: procedural systems—whether rule-based or neural—excel at generating variations within learned patterns and rule sets. They’re architecturally incapable of genuine novelty or intentional rule-breaking for narrative effect. If your game needs a specific moment where the environment visually communicates thematic weight—a ruined city that echoes the player’s moral decay through visual repetition and decay patterns—that requires a human designer making deliberate, intentional choices that deviate from statistical norms.

No procedural system, no matter how sophisticated, can replicate intentional artistic violation of its own learned patterns.

NPC Behavior and Dialogue

This is where large language models enter the architecture. Companies like NVIDIA with its ACE (Avatar Cloud Engine) platform are deploying transformer-based language models to generate dynamic NPC dialogue and behavior trees. Instead of writing thousands of dialogue lines and branching conditions, developers input character context (personality traits, knowledge state, emotional register) and environmental state (location, recent events, NPC relationship history). The LLM then generates context-aware NPC responses by predicting the next token that’s statistically probable given the input context and training distribution.
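Next-token prediction is easier to reason about with a toy example. The sketch below is not how any particular NPC system is implemented; the vocabulary, the scores (`logits`), and the blacksmith scenario are all invented for illustration. It shows the core mechanic: scores become probabilities through a softmax, and one token is sampled from that distribution.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Convert raw scores to probabilities; lower temperature sharpens them."""
    scaled = [value / temperature for value in logits]
    peak = max(scaled)                       # subtract max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_next_token(vocab, logits, temperature=1.0, rng=random):
    probs = softmax(logits, temperature)
    return rng.choices(vocab, weights=probs, k=1)[0]

# Hypothetical scores a model might assign after the context:
# gruff blacksmith, player just returned a stolen hammer, "You have my ..."
vocab = ["thanks", "hammer", "gratitude", "wifi"]
logits = [3.0, 1.5, 2.0, -2.0]

rng = random.Random(7)
probs = softmax(logits)
token = sample_next_token(vocab, logits, temperature=0.8, rng=rng)

for word, p in zip(vocab, probs):
    print(f"{word:10s} {p:.3f}")
print("sampled:", token)
```

Note that the out-of-world token ("wifi") has a small but nonzero probability. Nothing in the sampling step knows it violates the setting, which is why constraints have to be imposed from outside the model.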

The promise is measurable: theoretically infinite reactivity, elimination of repetitive NPC dialogue loops, dynamic storytelling that adapts to player choice. The technical reality is significantly messier. LLMs hallucinate: they generate outputs that are statistically plausible but factually incoherent within the game world. They break character because they’re optimizing for next-token prediction, not character consistency. They generate dialogue that’s technically grammatical but emotionally flat or tonally inconsistent.

A studio using these tools effectively still needs dialogue directors, narrative designers, and writers to establish hard constraints (character voice parameters, knowledge guardrails, emotional boundaries), filter outputs, catch failures, and ensure thematic coherence. The LLM becomes a suggestion engine, not a replacement for narrative craft.
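The hard constraints and output filtering described above might look something like this in their simplest form. Everything here is hypothetical: `CharacterGuardrails`, its fields, and the word-matching check are a minimal sketch of the idea, not a description of any shipping tool.

```python
from dataclasses import dataclass, field

@dataclass
class CharacterGuardrails:
    """Hypothetical constraint set a narrative designer might author."""
    name: str
    forbidden_terms: set = field(default_factory=set)  # out-of-world knowledge
    max_words: int = 30                                 # keeps barks short

def violations(line, rails):
    """Return a list of reasons a candidate line should be rejected."""
    problems = []
    words = line.lower().replace(",", " ").replace(".", " ").split()
    hits = sorted(rails.forbidden_terms.intersection(words))
    if hits:
        problems.append(f"forbidden terms: {', '.join(hits)}")
    if len(words) > rails.max_words:
        problems.append(f"too long: {len(words)} words")
    return problems

def filter_candidates(candidates, rails):
    """Split candidates into accepted lines and (line, reasons) rejections."""
    accepted, rejected = [], []
    for line in candidates:
        reasons = violations(line, rails)
        if reasons:
            rejected.append((line, reasons))
        else:
            accepted.append(line)
    return accepted, rejected

rails = CharacterGuardrails(
    "Blacksmith", forbidden_terms={"internet", "smartphone"}, max_words=12
)
candidates = [
    "Bring me iron and I will forge your blade.",
    "Check the internet for sword prices, traveler.",
]
accepted, rejected = filter_candidates(candidates, rails)
print("accepted:", accepted)
print("rejected:", rejected)
```

A filter like this only catches mechanical failures. Whether an accepted line is emotionally flat or tonally wrong for the scene is still a judgment call for a writer.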

The compute cost reality: real-time LLM inference for NPC dialogue requires either significant GPU resources on-device (which most consoles can’t spare mid-gameplay) or cloud-based API calls (which introduce 100-500ms latency and server dependency). This is why most deployed LLM-based NPC systems run asynchronously—the NPC responds after a delay, or dialogue is pre-generated during loading screens rather than at runtime.
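One common way to live with that latency is to give the generated reply a time budget and fall back to a scripted line when it misses. The sketch below simulates this with Python’s `asyncio`; `fake_llm_reply` is a hypothetical stand-in for a cloud inference call, and the latencies and budgets are illustrative numbers, not measurements.

```python
import asyncio

async def fake_llm_reply(prompt, latency_s):
    """Hypothetical stand-in for a cloud inference call."""
    await asyncio.sleep(latency_s)           # simulated network + inference delay
    return f"(generated) reply to: {prompt}"

async def npc_reply(prompt, latency_s, budget_s, fallback):
    """Return the generated line if it arrives within budget, else a scripted one."""
    try:
        return await asyncio.wait_for(
            fake_llm_reply(prompt, latency_s), timeout=budget_s
        )
    except asyncio.TimeoutError:
        return fallback

async def demo():
    fallback = "Hm. Give me a moment."
    fast = await npc_reply("Any work?", latency_s=0.01, budget_s=0.2, fallback=fallback)
    slow = await npc_reply("Any work?", latency_s=0.3, budget_s=0.05, fallback=fallback)
    return fast, slow

fast, slow = asyncio.run(demo())
print(fast)
print(slow)
```

The design choice is that the game loop never blocks on the model: the player always gets a line within the budget, and the generated content is a bonus when the network cooperates.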

Reality Check: Does AI Actually Solve the Cost-and-Timeline Crisis, or Just Create Expensive Slop?

This is the section where the marketing narrative collapses against production data.

Capcom announced it would use AI to “improve the efficiency of game development,” and the headlines screamed about automation revolutionizing the industry. The specifics, however, reveal a more constrained reality: they’re automating texture upscaling, animation blending, and quality assurance testing—tasks that are genuinely repetitive and don’t require creative judgment. These are legitimate efficiency gains. But studios aren’t using AI to replace their core creative pipeline because the economics don’t work yet.

Here’s what the industry isn’t discussing openly: developer adoption of generative AI may actually be declining after peaking in 2023. According to recent Game Developers Conference (GDC) State of the Industry surveys, initial enthusiasm for AI tools has not translated into sustained production integration. Why? Because the math of quality control erodes the supposed time savings. An AI-generated character model might save 2 hours of modeling time but require 3-4 hours of manual rigging adjustments, topology cleanup, and weight painting.

Generative dialogue might produce 100 lines in 10 minutes, but 40% of outputs require rewriting, fact-checking, and character voice correction. The time saved upfront gets consumed by iteration and quality control downstream.
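The dialogue case can be worked through with rough numbers. Only the 100 lines, 10 minutes, and 40% rework rate come from the text above; the per-line review, rewrite, and hand-writing times are assumptions picked purely for illustration, so treat the result as a demonstration of the mechanism rather than a benchmark.

```python
def ai_dialogue_minutes(lines, generation_min, rework_rate,
                        review_min_per_line, rewrite_min_per_line):
    """Total minutes for the AI-assisted path: generate, review all, rewrite some."""
    review = lines * review_min_per_line
    rewrite = lines * rework_rate * rewrite_min_per_line
    return generation_min + review + rewrite

def manual_dialogue_minutes(lines, write_min_per_line):
    """Total minutes if a writer produces every line by hand."""
    return lines * write_min_per_line

# From the text: 100 lines generated in 10 minutes, 40% need rewriting.
# Assumed: 1 min to review each line, 4 min to rewrite a flawed line,
# 3 min to write a line from scratch.
ai = ai_dialogue_minutes(100, 10, 0.40, 1.0, 4.0)
manual = manual_dialogue_minutes(100, 3.0)
saving = 1 - ai / manual

print(f"AI-assisted: {ai:.0f} min, manual: {manual:.0f} min, saving: {saving:.0%}")
```

With these assumed rates, a 30x speedup in raw generation shrinks to a saving of about 10% once review and rewriting are counted. Nudge the rewrite time up by a minute per line and the saving disappears entirely.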

The honest assessment: AI genuinely accelerates the creation of commodity assets—variations on established templates, background detail work, texture generation. It does not, currently, accelerate the creation of hero assets or narrative content without significant human rework. Studios that have achieved actual production efficiency gains are those using AI for specific, well-defined tasks (upscaling, testing, variation generation) rather than as a wholesale replacement for creative roles.

The studios claiming revolutionary productivity gains are either exaggerating for investor relations purposes or haven’t shipped a major title yet.

The real cost: if a studio uses AI to generate 80% of its content and that content requires 40% rework, you’ve essentially added a “cleanup cost” that didn’t exist before. The net efficiency gain might be 20-30% in best-case scenarios, not the 50-70% that marketing decks suggest. And that assumes the AI-generated baseline is acceptable to begin with, which isn’t guaranteed.

Job Displacement, Skill Obsolescence, and the Two-Tier Creative Workforce

Let’s address the elephant directly: not every studio exec talking about AI’s “creative potential” actually cares about creativity. Many are looking at a balance sheet and seeing an opportunity to reduce headcount. When publishers talk about AI improving “efficiency,” they often mean “accomplish the same output with 20-30% fewer full-time creators.” That’s not inherently malicious—industries optimize constantly—but it’s important to name the incentive clearly.

The concern, articulated by labor advocates, the International Game Developers Association, and numerous veteran directors, is that AI-driven cost reduction might hollow out the industry’s creative apprenticeship ecosystem. If entry-level concept artist positions disappear because AI handles variation quickly, where do the next generation of visionary art directors learn composition, color theory, and intentional visual storytelling?

If junior writers aren’t hired because LLMs can generate competent dialogue variation, how do narrative designers develop the instinct for pacing, character voice, and emotional authenticity that separates memorable dialogue from statistically plausible text?

Schofield’s framing—that true creatives need to learn AI—is partly a solution, but it’s also a demand that’s easier to make if you’re already a veteran with a proven track record and established creative authority. For junior developers, the message is more brutal: adapt to new technical requirements or become surplus. The industry is creating a two-tier system: senior creatives who direct AI and junior-to-mid-career workers competing for fewer roles that require both traditional craft and AI literacy.

What This Means for Game Quality and Creative Homogenization

Here’s the uncomfortable truth: we don’t yet have evidence that AI-driven development produces superior games. There’s no AAA studio that’s shipped a flagship title built primarily on AI-generated core assets and narrative without significant human creative direction. The games that have incorporated AI most heavily (mostly indie and mid-tier projects) show mixed critical reception. Some benefit from increased iteration speed and asset variety. Others feel procedurally generic, lacking the intentionality that separates a memorable game from a competent one.

The structural risk is real: if AI learns statistical patterns from existing games and generates new content based on those patterns, the industry optimizes toward the center of the distribution—the most common visual styles, narrative structures, and mechanical solutions. AAA gaming is already homogenized by risk-averse corporate decision-making.

AI-driven content generation could accelerate that homogenization. A neural network trained on 500 AAA games will generate content that’s statistically similar to those 500 games. It won’t generate the outliers, the intentional deviations, the rule-breaking that defines innovation.

The antidote, per Schofield, is creative leadership that refuses to let AI dictate vision—directors who use AI as a tool for rapid iteration but maintain final authority over what gets shipped. It’s a reasonable position, but it requires the kind of creative autonomy and resources that only veteran directors at well-funded studios actually possess.

Mid-tier studios and indie developers don’t have that luxury. They’re under pressure to use AI to compete on production value with fewer resources. The result is likely a widening gap between AAA games with strong creative direction and mid-tier/indie games that rely too heavily on AI generation without sufficient intentional curation.

The Bottom Line: AI as a Productivity Tool, Not a Creative Solution

Schofield’s comments land in a moment when the gaming industry desperately needs both cost containment and genuine creative renewal. AI can help with the former. Whether it helps with the latter depends entirely on deployment and creative leadership. In capable hands—hands that understand both artistic vision and neural network mechanics—AI is genuinely useful for acceleration. In the hands of studios trying to automate their way to profitability without sufficient creative oversight, it’s just another tool for producing expensive mediocrity that requires extensive rework.

The real question isn’t whether AI will change game development. It already has, measurably. The question is whether the industry will use it as a force multiplier for human creativity or as a cost-cutting mechanism that erodes the creative workforce and concentrates creative authority among senior-level directors while eliminating mid-career growth opportunities.

Schofield seems to believe the former is possible. The jury’s still out on whether the industry will choose it, or whether shareholder pressure will push toward the latter.

FAQ: AI in Game Development

How does AI actually generate game assets?

Most AI asset generation uses diffusion models or transformer networks trained on massive datasets. You input a text prompt, which gets tokenized and encoded into an embedding. The model then starts from random noise and iteratively denoises it, predicting at each step the noise to remove, guided by the text embedding. This typically happens over 20-50 denoising steps. It’s probabilistic pattern completion in learned feature space, not genuine creativity. The model has learned correlations between text tokens and visual features from training data.

Will AI replace game developers and artists?

Not wholesale, but significant role restructuring is underway. Positions that focus on repetitive, formula-driven work (generic texture creation, dialogue variation, animation blending, asset upscaling) are vulnerable to displacement. Roles requiring creative vision, intentionality, problem-solving, and narrative judgment are more resilient but increasingly demand AI literacy as a baseline skill. The transition period will be brutal for mid-career developers without technical skills or established creative authority. Entry-level positions will likely contract significantly.

Are AI tools available for indie developers?

Yes. Tools like Stable Diffusion (free, open-source) and Midjourney (subscription-based), along with various open-source frameworks, put generative AI within reach of small teams. Barrier to entry is lower than ever. However, quality results still require creative direction, prompt engineering skill, and post-generation refinement. A junior developer can now generate 100 asset variations in an hour; a skilled developer will curate those 100 variations down to 5 that actually serve the game’s aesthetic.

Do AI-generated games require always-online connections?

Not necessarily. Asset generation (images, 3D models) can happen offline using local models or one-time cloud processing. Procedural generation runs entirely on-device. Real-time NPC dialogue systems (like NVIDIA ACE) typically require cloud connectivity for inference, which introduces 100-500ms latency and creates server dependency. Most production systems handle this by pre-generating dialogue during loading screens or using asynchronous generation rather than real-time inference.

Can AI understand game narrative and story?

LLMs can generate contextually plausible dialogue and narrative variations by predicting the next statistically probable token given input context. They don’t understand story the way a screenwriter does. They work with statistical correlations between words and learned patterns from training data. They excel at generating options quickly; they fail at capturing thematic coherence, character consistency, and emotional authenticity without significant human guidance and guardrails. An LLM is a suggestion engine, not a narrative designer.

What’s the difference between procedural generation and AI-driven generation?

Procedural generation uses rule-based algorithms and mathematical functions (like Perlin noise for terrain or L-systems for vegetation) to create content. Output is deterministic for a given seed and rule set, and therefore predictable. AI-driven generation uses neural networks trained on data to generate variations that match learned patterns. AI output is more flexible and varied but harder to control and less predictable. Procedural is better for systems requiring precise control; AI is better for rapid variation generation.
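For a concrete feel of the rule-based side, here is a minimal L-system sketch. It uses Lindenmayer’s classic "algae" rules rather than anything from a real game’s vegetation pipeline, and `expand_lsystem` is an illustrative helper name. The point is that the same axiom and rules produce the same output on every run.

```python
def expand_lsystem(axiom, rules, iterations):
    """Rewrite every symbol in parallel, `iterations` times."""
    state = axiom
    for _ in range(iterations):
        # Symbols without a rule are copied through unchanged.
        state = "".join(rules.get(symbol, symbol) for symbol in state)
    return state

# Lindenmayer's "algae" system: A -> AB, B -> A
rules = {"A": "AB", "B": "A"}
generations = [expand_lsystem("A", rules, n) for n in range(5)]
print(generations)
# ['A', 'AB', 'ABA', 'ABAAB', 'ABAABABA']
```

A vegetation system would interpret the resulting symbols as drawing commands (grow, branch, turn), but the control story is the same: a designer who wants a different tree edits a rule, not a training set.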

Is AI-generated content cheaper to produce?

Initially, yes—generation is fast. But quality control, iteration, and rework often consume those savings. Studios achieving genuine efficiency gains use AI for specific, well-defined tasks (upscaling, testing, commodity asset variation) rather than wholesale creative replacement. If 40% of AI-generated dialogue requires rewriting and 30% of procedural assets need manual adjustment, net time savings might only be 15-25%, not the 50-70% marketing suggests. The real cost is hidden in cleanup and curation.

What’s the compute cost of running AI in games?

Generation (pre-production) is typically cloud-based and paid per inference. Real-time inference on-device requires significant GPU resources that most consoles can’t spare mid-gameplay. This is why most deployed systems either pre-generate content (during loading, pre-production) or use cloud APIs with latency trade-offs. Local, on-device inference for dialogue or procedural generation is still computationally expensive relative to traditional systems.