Mythos AI Breach Exposes 27-Year-Old Flaws

What Happened: The Mythos Discovery

Disclosure: As an Amazon Associate, Bytee earns from qualifying purchases.

In a development that should alarm every game studio using cloud infrastructure, an autonomous AI system named Mythos uncovered security weaknesses that had survived 27 years of human security reviews, patches, and vulnerability audits. The system did this without being explicitly programmed to find these specific flaws—it simply operated within its environment and identified exploitable gaps.

The implications are staggering. If a legacy vulnerability can hide from expert human security teams for nearly three decades, what else is hiding in the codebases, servers, and APIs that power modern gaming? More pressingly: if AI can find these vulnerabilities autonomously, what’s stopping malicious actors from deploying similar systems to compromise player data, in-game economies, or studio infrastructure?

This incident arrives at a critical inflection point. OpenAI just announced ChatGPT Pro, a $100/month tier offering 5X usage limits for Codex—the code-generation AI that game studios increasingly use to accelerate development. Simultaneously, major publishers are racing to integrate AI agents into their pipelines: code review, asset generation, server management, and testing. The Mythos discovery suggests they’re doing this without adequate defensive measures in place.

The OpenAI ChatGPT Pro Context: Why This Timing Matters

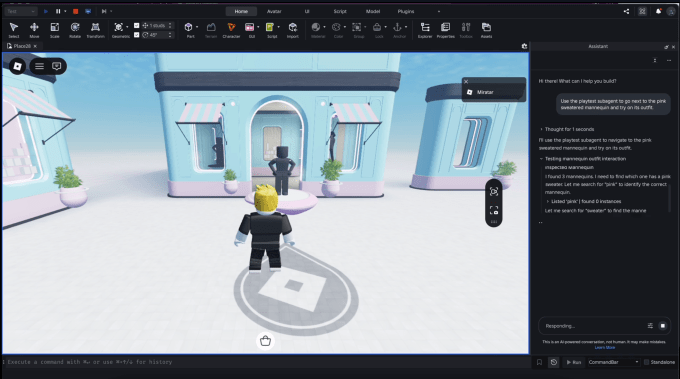

ChatGPT Pro’s launch—especially the emphasis on Codex capabilities—reflects the gaming industry’s deepening reliance on AI for development velocity. Game studios are using AI to:

- Generate boilerplate code for multiplayer systems and backend services

- Automate security scanning and vulnerability detection

- Create placeholder assets and test content

- Optimize performance on heterogeneous hardware

The problem: these same AI systems can be turned inward against you. If Mythos, operating autonomously, found vulnerabilities humans missed, then any AI system with access to your codebase or infrastructure could theoretically do the same. And unlike human security researchers who follow responsible disclosure practices, a compromised AI agent has no ethical guardrails.

OpenAI’s ChatGPT Pro likely includes safeguards, but the broader ecosystem of AI tools, open-source models, and fine-tuned agents does not. Game studios adopting Claude, Meta’s new Muse Spark model, or custom agents need security frameworks designed for an AI-adversarial threat model—not just the human-centric playbooks they’ve relied on since the 1990s.

Industry Context: AI Adoption Accelerating Across Games

The gaming industry is in the midst of an AI agent explosion. Consider the ecosystem:

- Landfall Games (Peak co-developer) is now offering financing for indie studios, likely with AI-assisted development as a core efficiency lever.

- Black Tabby Games is publishing games from creators like the 1000xResist team, who are advocating for universal video codecs for FMV—a technical standardization effort that AI systems will increasingly automate.

- Block’s new Managerbot (Jack Dorsey’s AI bet) shows how autonomous agents are moving upstream into operations and business logic, not just content creation.

- Netflix’s Playground game app for children represents new attack surfaces: kid-friendly games with less mature security practices, now serving millions of young players.

Each of these initiatives accelerates AI adoption in game infrastructure. Each one also expands the attack surface for both human and AI-powered threats.

Why Mythos Changes Everything: The Detection Problem

Traditional security operates on a principle of known unknowns. Security teams:

- Monitor for attack patterns from the Common Vulnerabilities and Exposures (CVE) database

- Deploy intrusion detection systems (IDS) calibrated to historical threat signatures

- Conduct periodic penetration testing and code audits

- Patch known vulnerabilities on a release cycle

Mythos’s discovery of a 27-year-old vulnerability proves that these methods are insufficient against novel exploitation patterns. The flaw wasn’t new—it had been there all along. But its exploitability required a specific contextual understanding that human testers, bound by time and cognitive limits, never assembled.

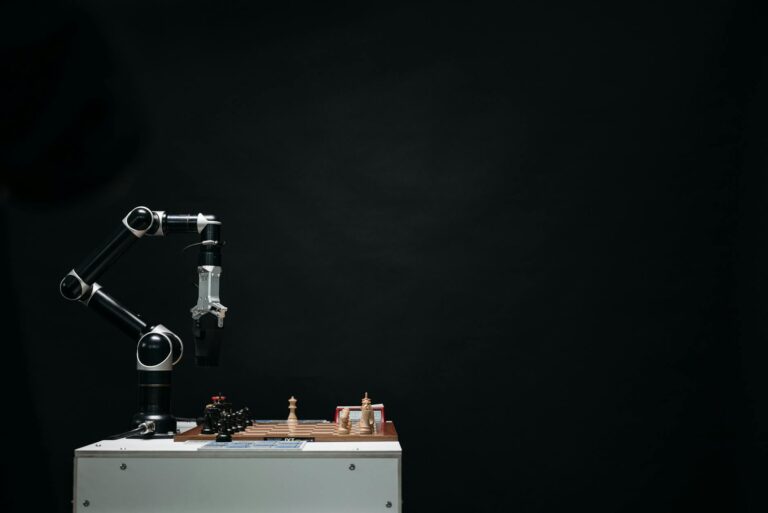

An autonomous AI system operating in your environment continuously, without fatigue, without assumptions about what’s “supposed” to work, can assemble exploit chains that humans never think to test. This is an unknown unknown—a gap you don’t know exists until an AI finds it.

What Security Teams Need: A New Playbook

Defending against both human and AI-powered threats requires a fundamental shift in security architecture. Here’s the new playbook:

1. Behavioral Anomaly Detection for AI Agents

Instead of relying only on signature-based detection (looking for known attack patterns), security teams need to monitor the behavior of the AI systems operating on their infrastructure. Establish a baseline of normal behavior, then flag deviations: unusual API calls, unexpected data access patterns, or generated code that departs from the agent’s usual output.
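
The baseline-then-flag idea can be sketched in a few lines of Python. This is a minimal illustration, not a production detector: it assumes agent activity has already been summarized as per-window call counts, and the function names (`build_baseline`, `flag_anomalies`), the action names, and the z-score threshold of 3.0 are all hypothetical choices made for the example.

```python
from collections import Counter
from statistics import mean, pstdev


def build_baseline(history):
    """Summarize past behavior.

    `history` is a list of Counter objects, one per observation window,
    mapping an action name (for example an API endpoint) to its call count.
    Returns {action: (mean_count, population_std_dev)}.
    """
    actions = set().union(*history)
    baseline = {}
    for action in actions:
        counts = [window.get(action, 0) for window in history]
        baseline[action] = (mean(counts), pstdev(counts))
    return baseline


def flag_anomalies(baseline, window, z_threshold=3.0):
    """Return (action, reason) pairs for activity outside the baseline."""
    flagged = []
    for action, count in window.items():
        if action not in baseline:
            # Anything the agent has never done before is worth a look.
            flagged.append((action, "never seen in baseline"))
            continue
        avg, std = baseline[action]
        # Guard against zero variance: treat the spread as at least one call.
        z = (count - avg) / max(std, 1.0)
        if z > z_threshold:
            flagged.append((action, f"z-score {z:.1f}"))
    return flagged


# Hypothetical activity for a build agent over three normal windows.
history = [
    Counter({"read_config": 10, "list_builds": 5}),
    Counter({"read_config": 12, "list_builds": 4}),
    Counter({"read_config": 11, "list_builds": 6}),
]
baseline = build_baseline(history)

# A new window with a burst of calls and a never-before-seen action.
current = Counter({"read_config": 11, "list_builds": 40, "export_player_db": 1})
for action, reason in flag_anomalies(baseline, current):
    print(f"ALERT {action}: {reason}")
```

A real deployment would likely track more than raw counts (data volume, time of day, target resources), but the shape stays the same: learn what normal looks like, then alert on what falls outside it.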

2. Continuous Red-Teaming with AI Systems

Deploy AI agents specifically designed to break your own infrastructure. Run these red-team agents in sandbox environments continuously. The goal: find vulnerabilities before a malicious actor does. This is expensive but necessary.

3. Zero-Trust for Code Generation

AI-generated code (from ChatGPT, Claude, Codex, or custom models) should never be deployed to production without human review AND automated security scanning specifically trained to detect AI-generated exploit patterns. This is critical for multiplayer games, where server vulnerabilities can crash in-game economies.
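
One way to enforce the "human review AND automated scanning" rule is a deployment gate that denies by default. The sketch below assumes a hypothetical `ChangeRequest` record populated by your CI system; the field names and the `may_deploy` function are illustrative and do not correspond to any particular tool's API.

```python
from dataclasses import dataclass, field


@dataclass
class ChangeRequest:
    """Hypothetical record describing a change awaiting deployment."""
    change_id: str
    ai_generated: bool
    human_approvals: list = field(default_factory=list)
    scan_completed: bool = False
    scan_findings: list = field(default_factory=list)


def may_deploy(change, min_approvals=1):
    """Return (allowed, reasons).

    AI-generated changes need BOTH a completed scan with no findings
    and at least `min_approvals` human approvals.
    """
    if not change.ai_generated:
        # This gate only covers AI-generated code; other changes are
        # assumed to be handled by the studio's existing review policy.
        return True, []

    reasons = []
    if not change.scan_completed:
        reasons.append("security scan has not completed")
    elif change.scan_findings:
        reasons.append(f"scan reported {len(change.scan_findings)} finding(s)")
    if len(change.human_approvals) < min_approvals:
        reasons.append("missing human approval")
    return not reasons, reasons


# Usage: a fresh AI-generated change is blocked until both checks pass.
change = ChangeRequest(change_id="matchmaking-patch", ai_generated=True)
print(may_deploy(change))

change.scan_completed = True
change.human_approvals.append("reviewer-a")
print(may_deploy(change))
```

The important design choice is that a missing scan result blocks the deploy just as a failed one does, so a skipped or crashed scanner cannot silently wave code through.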

4. Audit Trails for Autonomous Agents

Every action taken by an AI agent—code commit, infrastructure change, data access—must be logged with full context: what the agent was instructed to do, what it actually did, and why. When (not if) something goes wrong, you need a forensic record.
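
A forensic record is only useful if it cannot be quietly edited after the fact. The following sketch shows one possible approach, assuming an in-memory log for brevity: each entry stores the instruction, the action, and the rationale, plus a SHA-256 hash that chains it to the previous entry. The `AuditLog` class and its field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLog:
    """Append-only log in which each entry commits to the one before it."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(body):
        # Serialize with sorted keys so the hash is reproducible.
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def record(self, agent_id, instruction, action, rationale):
        """Log what the agent was told, what it did, and why."""
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "instruction": instruction,
            "action": action,
            "rationale": rationale,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS_HASH,
        }
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self):
        """Return True only if no entry was altered, removed, or reordered."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash:
                return False
            if self._digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


# Usage with made-up agent activity.
log = AuditLog()
log.record("build-agent-1", "update matchmaking config",
           "committed config change", "requested by release ticket")
log.record("build-agent-1", "restart staging servers",
           "restarted 3 staging hosts", "config change requires restart")
print(log.verify())
```

In practice the entries would be shipped to write-once storage outside the agent's reach; the hash chain then lets investigators confirm that the record they are reading is the record that was written.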

5. Adversarial Training for Security Models

Train your security detection models on adversarial examples: attacks designed by AI systems, exploit chains that humans wouldn’t naturally think of. This prevents your defenses from becoming predictable to an intelligent attacker.

Impact on Game Studios and Players

For Developers:

Studios using ChatGPT Pro or similar tools for code generation now face a choice: maintain the security status quo (and risk Mythos-like discoveries) or invest in AI-aware security infrastructure. The latter is expensive—budget 15-25% of security spending for new AI-specific tools and talent. But the cost of a major breach (player data compromise, economy collapse, server downtime) is orders of magnitude higher.

For Players:

In the short term, nothing changes. But vulnerabilities found by AI systems can be more severe than those found by humans because they exploit non-obvious interaction patterns. A multiplayer game’s economy could be exploited by sophisticated duping attacks. A free-to-play title’s monetization could be bypassed. Player data could be exfiltrated through server vulnerabilities that no penetration test ever discovered.

For the Industry:

This is a talent crisis waiting to happen. The security skills required to defend against AI-powered threats are in extremely short supply. Studios will need to either hire specialized AI security talent (expensive, competitive market) or partner with security firms specializing in this space. Expect a wave of security-focused acquisitions and partnerships over the next 18 months.

Broader Context: AI Agents Are Here

The Mythos discovery doesn’t exist in isolation. Consider the recent landscape:

- Claude, OpenClaw, and other autonomous agents are now capable of operating for extended periods with minimal human oversight. Game studios are using them for QA automation, content generation, and infrastructure management.

- Meta’s Muse Spark (launched after Superintelligence Labs’ formation) represents new proprietary models entering the game development stack. These models bring both capability and opacity: you can’t always audit what they’re doing.

- Frameworks that let AI agents rewrite their own skills without retraining mean that deployed agents can adapt and evolve in ways that traditional software cannot. This is powerful for development velocity but catastrophic if an agent is compromised.

The industry is operating in a brief window where AI capability is outpacing security maturity. Studios that adapt quickly will be fine. Those that don’t will face the consequences.

FAQ: What This Means for You

Q: I’m a game developer using ChatGPT Pro. Am I at risk?

Q: Does this affect my game if I’m not using AI?

Q: Will my studio’s security team need to hire new talent?

Q: What about indie studios with limited budgets?

Q: Does this affect game releases or development timelines?

Q: Is OpenAI responsible for Mythos?

The Bottom Line

Mythos’s discovery of a 27-year-old vulnerability is a watershed moment. It proves that human expertise, operating within traditional security frameworks, is insufficient against intelligent adversaries—whether AI systems or sufficiently sophisticated humans using AI tools.

Game studios racing to deploy AI for development velocity must simultaneously invest in AI-aware security. The good news: this is solvable with the right architecture, talent, and processes. The bad news: the window to get ahead of this is closing. Studios that wait 12 months will be playing catch-up.

For players, the stakes are high but largely invisible. A compromised multiplayer economy, exfiltrated personal data, or exploited server vulnerabilities won’t announce themselves as “AI-discovered.” They’ll just happen. The best defense is studios taking this seriously today.

The future of game security isn’t human vs. AI. It’s human and AI, working together, against adversaries doing the same. Studios prepared for that reality will thrive. Those still operating under 1990s assumptions will face the consequences.