Houdini UE5 Procedural Generation: How AI Builds Game Worlds

Disclosure: As an Amazon Associate, Bytee earns from qualifying purchases.

Imagine walking through a game world where every flower, rock formation, and overgrown ruin looks hand-placed—but was actually generated by an algorithm in minutes instead of weeks of grinding asset work. That’s not science fiction anymore. Houdini and Unreal Engine 5 are making it real right now, and the shift is fundamentally changing how studios build dense, intricate environments at scale. We’re talking about procedural generation that doesn’t feel like a Minecraft-style randomizer—this is controlled, authored chaos that respects artistic intent while crushing production timelines.

What Is Houdini UE5 Procedural Generation and Why Gamers Should Care

Houdini is a procedural 3D package from SideFX that has been a staple of VFX studios for decades: think the destruction in Marvel movies or the landscapes in sci-fi blockbusters. SideFX’s Houdini Engine plugin brings that toolset into Unreal Engine 5, which means game developers can harness the same algorithmic power to build worlds instead of hand-sculpting every asset one by one. The core idea is deceptively simple: instead of an artist spending three weeks modeling, texturing, and placing 500 unique flower variations across a lotus garden, they write a procedural ruleset that generates those flowers in minutes, with effectively unlimited variation baked in.

This solves a massive pain point in modern game development: repetition at scale. Open-world games need density: forests with thousands of trees, ruins with countless crumbling stones, gardens with plants that look alive and varied. Doing that by hand is economically insane. Houdini UE5 integration lets artists define the rules once (flower petal count, stem curvature, color variation, placement logic) and then generate thousands of instances with controlled randomness. The result feels hand-crafted because it was, just at the algorithmic level rather than the polygon level. Every asset respects the artist’s intent while avoiding the uncanny repetition that breaks immersion in procedurally generated worlds. Remedy Entertainment took a similar rules-first approach to the brutalist architecture in Control, generating hallway variations that all feel cohesive despite their variety.

For players, this means environments that are denser, more detailed, and more varied without the performance hit of storing millions of unique hand-modeled assets. It’s the difference between a forest that feels populated versus a forest that feels like the same tree copy-pasted 200 times. Star Wars Outlaws demonstrates this in its open-world cities, where procedurally generated layouts and architectural details create sprawling urban environments that would’ve required months of manual placement work. The shift from purely manual asset creation to algorithm-assisted design is reshaping what “detailed environment” actually means in AAA and indie games.

The Lotus Scene: Breaking Down How Procedural AI Built It

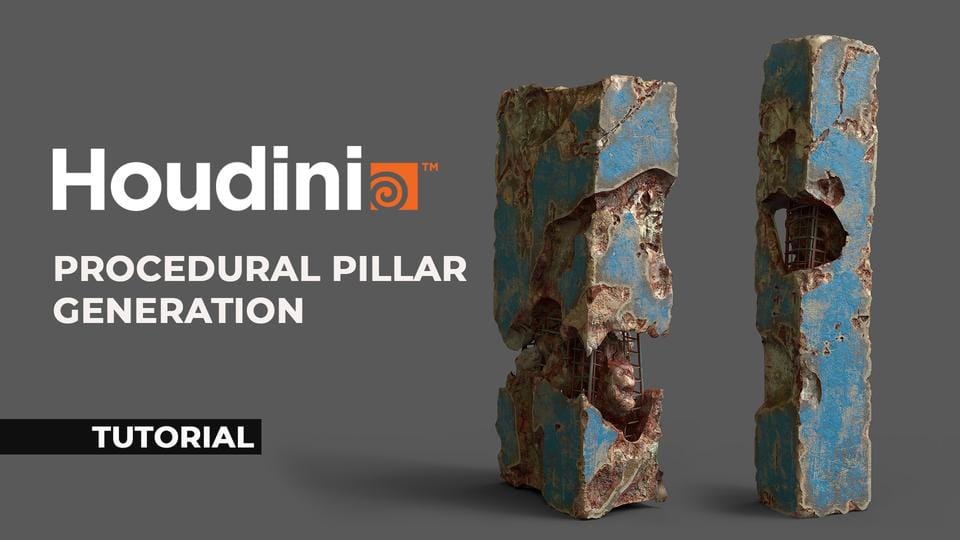

Let’s walk through a concrete example: a lotus garden scene. In the old pipeline, an environment artist would model a lotus flower (maybe 5,000 polygons), create three color variations, place them manually across a terrain, adjust individual petals where they clip through rocks, and spend days tweaking the composition. Total time: two to three weeks for one scene. With Houdini UE5, the process looks completely different. The artist builds a single procedural graph—think of it like a visual programming flowchart where each node is an instruction. One node says “create a lotus petal with these constraints.” Another says “randomize petal curvature between X and Y degrees.” Another says “generate 50-200 flowers per square meter, avoiding overlaps.” Another says “color each flower using this palette, with slight hue variation.”
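The “generate flowers while avoiding overlaps” instruction can be sketched outside Houdini as a simple dart-throwing scatter. This is a minimal Python illustration, not how Houdini’s own scatter node is implemented; the function name, the rectangle size, and the spacing value are all assumptions made up for the example.

```python
import math
import random


def scatter_points(width, height, count, min_dist, seed=0, max_attempts=10_000):
    """Place up to `count` points in a width x height rectangle.

    A candidate is rejected if it lands closer than `min_dist` to any point
    already accepted, so no two flowers overlap.
    """
    rng = random.Random(seed)  # seeded, so the same inputs give the same layout
    points = []
    attempts = 0
    while len(points) < count and attempts < max_attempts:
        attempts += 1
        candidate = (rng.uniform(0, width), rng.uniform(0, height))
        if all(math.dist(candidate, p) >= min_dist for p in points):
            points.append(candidate)
    return points


# Hypothetical pond patch: 10 m x 10 m, flowers at least 0.5 m apart.
flowers = scatter_points(width=10.0, height=10.0, count=60, min_dist=0.5, seed=42)
print(len(flowers), "flowers placed")
```

Raising `count` or lowering `min_dist` plays the role of the density slider; the seed keeps a layout reproducible once the artist likes it.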

The artist then plugs in parameters—sliders and input fields—that control the entire generation. Want denser flowers? Slide the density parameter up. Want more color variation? Adjust the hue range. Want petals more delicate? Tweak the thickness multiplier. UE5 then renders the output in real-time using Nanite (Epic’s virtualized geometry system) to handle the polygon density without crushing frame rates. What used to take 21 days now takes 2-3 hours, including iteration. The artist can see changes instantly, experiment with dozens of variations, and ship a scene that feels hand-crafted but was algorithmically generated. That’s the fundamental value proposition: authorship speed without sacrificing detail or control.
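To make the slider idea concrete, here is a small Python sketch of a parameter set driving generation. The `LotusParams` fields, their default values, and the output dictionary are hypothetical stand-ins for whatever a real Houdini asset would expose; the point is only that one set of parameters plus a seed yields many varied but bounded results.

```python
import colorsys
import random
from dataclasses import dataclass


@dataclass
class LotusParams:
    # All names and defaults here are illustrative assumptions.
    petal_count_range: tuple = (12, 20)
    curvature_range_deg: tuple = (10.0, 35.0)
    base_hue: float = 0.92            # pinkish, on a 0..1 hue wheel
    hue_variation: float = 0.03       # the "color variation" slider
    thickness_multiplier: float = 1.0  # the "petal delicacy" slider


def generate_lotus(params, rng):
    """Build one flower description from the shared parameters."""
    offset = rng.uniform(-params.hue_variation, params.hue_variation)
    hue = (params.base_hue + offset) % 1.0
    return {
        "petals": rng.randint(*params.petal_count_range),
        "curvature_deg": rng.uniform(*params.curvature_range_deg),
        "rgb": colorsys.hsv_to_rgb(hue, 0.45, 0.95),
        "petal_thickness": 0.002 * params.thickness_multiplier,
    }


def generate_garden(params, count, seed):
    """Generate `count` flowers; the same seed reproduces the same garden."""
    rng = random.Random(seed)
    return [generate_lotus(params, rng) for _ in range(count)]


garden = generate_garden(LotusParams(), count=500, seed=7)
delicate = generate_garden(LotusParams(thickness_multiplier=0.5), count=500, seed=7)
```

Changing one field and regenerating is the code equivalent of dragging a slider: every flower updates, but each stays inside the ranges the artist set.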

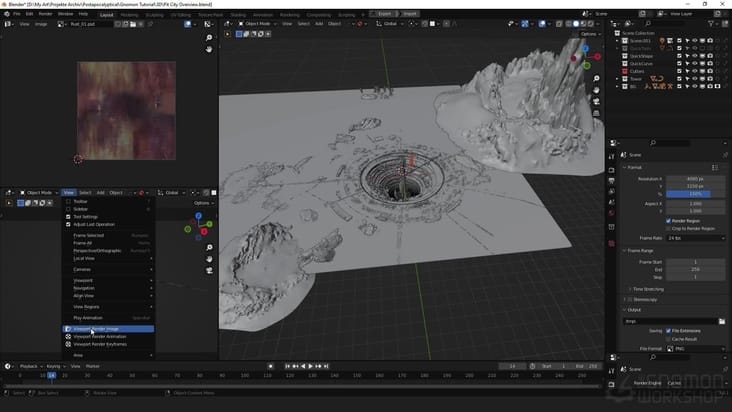

The node graph itself looks intimidating at first—Houdini’s interface is dense with hundreds of possible operations. But the core concept is straightforward: data flows left to right through nodes, getting transformed at each step. A mesh input gets subdivided, twisted, colored, scattered across a landscape, and output as a renderable asset. Artists new to Houdini compare it to shader graphs they already know from game engines—similar logic, way more power. And because Houdini Engine runs inside UE5, the feedback loop is instant. No exporting, no waiting for reimports. Tweak a parameter, see the result in the viewport immediately.
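The left-to-right data flow can be mimicked with plain functions, where each “node” takes geometry in and hands transformed geometry to the next. This is a toy Python sketch with invented node names, not Houdini’s API; it assumes geometry is just a non-empty list of 3D points.

```python
from functools import reduce


def subdivide(points):
    """Insert a midpoint between each consecutive pair of points."""
    out = []
    for a, b in zip(points, points[1:]):
        out.append(a)
        out.append(tuple((x + y) / 2 for x, y in zip(a, b)))
    out.append(points[-1])
    return out


def scale(factor):
    """Return a node that scales every point by `factor`."""
    def node(points):
        return [tuple(c * factor for c in p) for p in points]
    return node


def translate(offset):
    """Return a node that moves every point by `offset`."""
    def node(points):
        return [tuple(c + o for c, o in zip(p, offset)) for p in points]
    return node


def run_graph(nodes, data):
    """Feed `data` through each node in order, like wires in a node graph."""
    return reduce(lambda current, node: node(current), nodes, data)


stem = [(0.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
graph = [subdivide, scale(2.0), translate((1.0, 0.0, 0.0))]
result = run_graph(graph, stem)
print(result)  # [(1.0, 0.0, 0.0), (1.0, 1.0, 0.0), (1.0, 2.0, 0.0)]
```

Reordering or swapping entries in `graph` changes the result without touching the nodes themselves, which is the same property that makes node graphs quick to iterate on.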

How It Works: The Tech Behind Procedural World Generation

Procedural generation and traditional modeling are opposites in philosophy. Traditional modeling: you decide what you want, you build it, it’s done. Procedural generation: you define the rules that build it, and the algorithm explores possibilities within those rules. In Houdini, this happens through a node-based workflow that’s conceptually similar to how game logic works in Blueprints or C++. Each node is a function. Nodes chain together. Data flows through the chain. The output is your final asset. The difference from unconstrained random generation (the criticism leveled at No Man’s Sky at launch, which offered near-endless variation but little visible art direction) is that every parameter is controlled. The artist isn’t gambling on randomness. They’re directing it.

Here’s the technical foundation: you feed Houdini input data (a base mesh, texture maps, rules, constraints) and the procedural graph transforms it. A rule might be “if this surface has a slope greater than 45 degrees, scatter rocks here instead of plants.” Another rule: “create moss only on surfaces facing north and with sufficient moisture.” These aren’t magic—they’re just conditional logic, the same way game AI decides whether an NPC should attack or flee. Houdini has hundreds of built-in nodes for common operations (scatter, subdivide, deform, color, instance, simulate), and artists chain them together to create complex behavior from simple rules. It’s programming, but visual and iterative. When you see the environmental storytelling in Helldivers 2’s procedurally generated missions—where different biome types create distinct visual and tactical layouts—you’re seeing this logic in action. Each biome has its own rule set that determines how terrain, obstacles, and spawning points are distributed.
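The two rules quoted above translate almost directly into conditional code. The sketch below is a Python illustration under stated assumptions: surface normals are unit vectors, +Z is up, +Y is north, moisture is a 0 to 1 value, and the 0.6 moisture threshold and 45-degree north tolerance are invented for the example.

```python
import math


def slope_degrees(normal):
    """Angle between a unit surface normal and straight up (+Z), in degrees."""
    nz = max(-1.0, min(1.0, normal[2]))
    return math.degrees(math.acos(nz))


def faces_north(normal, tolerance_deg=45.0):
    """True if the horizontal part of the normal points roughly toward +Y."""
    nx, ny = normal[0], normal[1]
    length = math.hypot(nx, ny)
    if length < 1e-6:
        return False  # a flat surface faces no compass direction
    angle = math.degrees(math.acos(max(-1.0, min(1.0, ny / length))))
    return angle <= tolerance_deg


def classify(normal, moisture):
    """Apply the two authored rules to one surface sample."""
    labels = ["rock" if slope_degrees(normal) > 45.0 else "plant"]
    if faces_north(normal) and moisture >= 0.6:
        labels.append("moss")
    return labels


print(classify((0.0, 0.0, 1.0), moisture=0.9))   # ['plant']
print(classify((0.0, 0.8, 0.6), moisture=0.9))   # ['rock', 'moss']
print(classify((0.0, -0.8, 0.6), moisture=0.9))  # ['rock']
```

Run over every sample point on a terrain, a handful of rules like these is enough to produce placement that looks deliberate rather than random.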

When UE5 receives the procedural output, Nanite and Lumen do the heavy lifting. Nanite is a virtualized geometry system that lets you render massive polygon counts without traditional memory constraints. Lumen is real-time global illumination that calculates how light bounces through complex geometry instantly. Together, they make procedurally generated environments—which naturally have huge polygon counts—performant on current-gen hardware. Without Nanite, a procedurally generated forest with millions of leaves would crater frame rates. With it, you get visual density that rivals hand-crafted scenes. This is the critical insight: procedural generation only became viable for games when rendering technology caught up. Houdini’s been around for 30 years, but Houdini UE5 is new because UE5’s rendering tech finally made it practical.

What Changes for Players: Real Gameplay Impact

Before the procedural shift: You’re exploring an open-world game and you notice the pattern. That cluster of three rocks appears again 500 meters north. The same three trees in the same configuration show up on the eastern ridge. Enemies spawn in the same locations with the same patrol routes. The world feels efficient but hollow—designed, not lived-in. Hand-crafted environments are gorgeous but sparse because artists can only make so much content. Repetition is the tax you pay for scope. Performance is stable because assets are duplicated, not multiplied. In games like Skyrim or earlier open-world titles, you could literally walk for 10 minutes and see the same rock formation repeated dozens of times, breaking immersion.

After procedural AI: You’re in the same open world and every rock formation is unique. Trees cluster differently based on terrain and biome rules. Ruins decay in varied ways—some collapsed on one side, others on multiple sides, each with unique rubble patterns. The environment feels authentically dense because it is—thousands of variations generated from rules, not copied from a handful of hand-made assets. The world has scope and detail without feeling manufactured. Performance stays solid because Nanite virtualizes the polygon load. The development cycle is faster: instead of hiring 15 environment artists to manually place 10,000 assets, you hire 5 procedural designers to write the rules that generate them. In Star Wars Outlaws, players experience sprawling cantina districts and spaceport layouts that feel unique and lived-in because procedural generation creates architectural variation while maintaining visual coherence—something that would’ve required massive manual asset creation in earlier games.

Real gameplay examples: Star Wars Outlaws uses procedural generation for its open-world cities, creating varied layouts and architectural details that would’ve taken months to hand-build. Helldivers 2’s procedurally generated missions use algorithmic level generation to create different combat arenas each run, so players get variety without the repetition that plagued earlier roguelike-style shooters. Control’s brutalist interiors benefit from procedural generation of room and corridor variations that respect the game’s architectural language while avoiding the visual repetition that would’ve felt cheap in a hand-crafted environment. The immersion argument is that players perceive procedurally generated environments as more “alive” when the variation is authored (procedural with rules) rather than random (no constraints). The difference is whether the game feels designed or chaos-generated.

What Game Studios Are Building With Houdini and UE5

AAA studios have already built procedural generation into their pipelines, though not always on UE5. Remedy Entertainment used Houdini for Control’s intricate brutalist architecture, generating variations of hallways, rooms, and structural elements that respect the game’s architectural language while avoiding repetition; the game itself runs on Remedy’s in-house Northlight engine. What might’ve taken months of manual modeling took weeks of procedural design, a significant time savings on a mid-sized team. Epic Games itself has showcased Houdini UE5 workflows in its demo projects, and several unannounced AAA titles are reportedly in development on this pipeline. Adoption is accelerating because studios see the ROI: faster iteration, denser content, lower production risk.

Indie developers have access too. Houdini Indie is a reduced-price commercial license for developers earning under $100K a year, and the free Houdini Apprentice edition covers non-commercial learning, which opens procedural generation to solo developers and small teams. This is a seismic shift in game development democratization. Five years ago, procedural generation at this quality was exclusive to studios with $50M+ budgets and specialized VFX talent. Now any indie dev can download Houdini, learn the node graph, and generate assets that rival AAA quality. You’re seeing this play out in early access games and game jams, where developers are experimenting with procedural environments because the barrier to entry collapsed. Studios like Supermassive Games are exploring real-time procedural generation during gameplay, pushing the boundaries of what’s possible in interactive environments.

Key tools and middleware supporting this ecosystem:

- Houdini Engine for Unreal – Direct integration, real-time procedural generation in-editor

- World Creator – Standalone terrain and landscape generator with an export bridge to Unreal Engine

- SpeedTree – Procedural vegetation system that integrates with Houdini workflows

These tools are actively developed and updated. Houdini Engine for UE5 is stable and production-ready. World Creator is in active development with monthly updates. The ecosystem is maturing, which means studios betting on procedural generation today aren’t betting on beta technology—it’s established, proven, and competitive.

The Catch: Limitations and Player Concerns

Procedural generation isn’t magic, and the limitations are real. First: performance on older hardware. A procedurally generated forest with thousands of unique tree instances, each with full geometric variation, can still tank frame rates on last-gen consoles or budget gaming PCs. Nanite helps, but it’s not a get-out-of-jail-free card. Developers still need to optimize, build level-of-detail (LOD) versions of their assets, and make hard choices about density versus performance. A game that ships at 60fps on a high-end PC might drop to 30fps on older systems because the procedural generation was tuned for cutting-edge hardware. This is a real trade-off: visual ambition versus hardware accessibility.
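For readers unfamiliar with LOD, the density-versus-performance trade-off can be sketched as a distance lookup: the farther an object is from the camera, the cheaper the version that gets drawn. This is a simplified Python illustration of traditional discrete LOD, not how Nanite or UE5 selects detail; the distance thresholds and triangle counts are made-up numbers.

```python
import bisect

# Hypothetical values: switch distances in meters and triangles per LOD level.
LOD_DISTANCES = [15.0, 40.0, 100.0]
LOD_TRIANGLES = [5000, 1200, 300, 60]  # LOD0 (closest) through LOD3 (farthest)


def select_lod(distance):
    """Return the LOD index for an object at `distance` from the camera."""
    return bisect.bisect_right(LOD_DISTANCES, distance)


def triangle_budget(distances):
    """Total triangles drawn for objects at the given camera distances."""
    return sum(LOD_TRIANGLES[select_lod(d)] for d in distances)


trees = [10.0, 50.0, 500.0]
print([select_lod(d) for d in trees])  # [0, 2, 3]
print(triangle_budget(trees))          # 5360
print(len(trees) * LOD_TRIANGLES[0])   # 15000 if everything used LOD0
```

Tuning those thresholds per platform is one of the “hard choices” in practice: tighter distances save frame time on older hardware at the cost of visible detail.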

Second: the repetition paradox. Despite procedural variation, players can still detect patterns if the rules aren’t sophisticated enough. If your rock formations use the same underlying shape with slight rotation and scale variation, players will notice the repetition after an hour of gameplay. Procedural generation can feel soulless if it’s lazy—using simple randomization instead of authored rules. This is the critical distinction: bad procedural generation feels worse than hand-crafted because it’s obviously algorithmic. Good procedural generation feels hand-crafted because the rules are sophisticated and authored by artists who understand composition and design. No Man’s Sky’s launch in 2016 proved this—planets were algorithmically unique but aesthetically repetitive because the procedural rules lacked human art direction. The procedural generation was too random, with insufficient authored rules to create truly distinct biomes. Players felt the difference immediately: “This planet is unique but it feels like all the other planets.” Subsequent updates added more sophisticated rules and hand-authored biome templates, fixing the issue.

Third: the learning curve. Houdini is not beginner-friendly. It’s a professional tool with a steep learning curve. Developers transitioning from traditional game art pipelines need months to become proficient. This is why studios are hesitant to fully commit to procedural-first development: they need to retrain staff or hire specialists. For indie developers, the low-cost Indie license and the free Apprentice edition are valuable, but the time investment is significant. An artist might spend 3-6 months becoming competent with Houdini before shipping a procedurally generated game.

Fourth: loss of human artistic intent. Some game environments benefit from hand-crafted specificity. A dramatic boss arena, a narrative-critical location, a visually iconic space—these benefit from human art direction that no procedural system can replicate. Procedural generation is best for bulk content (forests, terrain, repetitive structures), not for story-critical moments. Studios that go all-in on procedural generation risk creating worlds that feel technically impressive but emotionally hollow. The most successful implementations—like Control and Star Wars Outlaws—use procedural generation strategically, reserving hand-crafted design for narrative moments and visual set pieces.

What Comes Next: The Future of Procedural AI in Games

The near-term evolution is clear: tighter integration between procedural generation and neural networks. Imagine an artist setting up a procedural generation graph, and an AI system suggesting parameter ranges based on what “looks good” in similar games. This isn’t replacing the artist—it’s augmenting their decision-making. The artist still authors the rules, but AI handles the tedious parameter tuning. Epic Games is actively researching this, and you’ll see shipping examples within 18 months. The workflow becomes: artist defines rules → AI suggests parameters → artist refines → output. It’s faster than today’s manual iteration.

Real-time procedural generation during gameplay is also coming. Currently, procedural generation happens in the editor—you generate assets, bake them into the level, and ship them static. The next frontier is generating assets on-demand during gameplay. Imagine a roguelike where every floor is procedurally generated not in the editor, but as you descend. Or an open-world game where you can procedurally generate dungeons on the fly when you discover them, with no loading screens. This requires massive optimization work, but studios like Remedy and Supermassive Games are experimenting with it. UE5’s Nanite and Lumen make this feasible in ways that weren’t possible five years ago.
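The roguelike case hinges on determinism: a floor generated on demand has to come out identical every time the player revisits it. One common way to get that is to derive each floor’s seed from a world seed plus the floor index. The Python sketch below shows the idea with an invented tile layout and function names; it is not taken from any shipped game.

```python
import hashlib
import random


def floor_seed(world_seed, floor_index):
    """Derive a stable per-floor seed from the world seed and floor number."""
    digest = hashlib.sha256(f"{world_seed}:{floor_index}".encode()).digest()
    return int.from_bytes(digest[:8], "big")


def generate_floor(world_seed, floor_index, width=8, height=6, wall_chance=0.25):
    """Generate one floor as rows of '#' (wall) and '.' (open) tiles."""
    rng = random.Random(floor_seed(world_seed, floor_index))
    return [
        "".join("#" if rng.random() < wall_chance else "." for _ in range(width))
        for _ in range(height)
    ]


# Floors are built only when the player reaches them, never stored up front.
for row in generate_floor(world_seed=1234, floor_index=3):
    print(row)
```

Because nothing is saved except the world seed, floor 3 can be discarded when the player leaves and rebuilt identically on return. A real game would add connectivity checks so every floor is actually traversable.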

Integration with dynamic AI systems is the longer-term play. Procedurally generated environments paired with procedurally generated NPC behavior creates worlds that feel alive. Imagine a procedurally generated city where NPCs have procedurally generated routines, relationships, and backstories. The world isn’t just visually varied—it’s behaviorally varied. This is where procedural generation stops being a graphics tool and becomes a game design tool. Studios are exploring this, but it’s still early. The technical and design challenges are massive.

The industry adoption timeline: 2024-2025 is when mid-tier studios widely adopt Houdini UE5 pipelines. 2025-2026 is when AI-assisted procedural generation becomes standard. 2027+ is when real-time procedural generation during gameplay becomes viable for AAA titles. The trajectory is clear: procedural generation is moving from “nice-to-have optimization” to “core game development practice.”

The verdict: Procedural generation powered by Houdini and UE5 is reshaping game development economics—trading artist time for algorithmic sophistication, and the industry is betting heavily that the trade-off is worth it.

Frequently Asked Questions

Does procedural generation make game worlds feel more realistic or just repetitive?

It depends entirely on execution. Procedural generation with sophisticated authored rules—like Remedy’s Control or Star Wars Outlaws—creates environments that feel more realistic because they have natural variation and density. Procedural generation with lazy randomization—like No Man’s Sky at launch—feels obviously algorithmic and repetitive. The difference is whether the artist invested in the ruleset or just threw random parameters at the generator.

Which games are actually using Houdini and UE5 procedural generation right now?

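Remedy Entertainment integrated Houdini into Control’s development pipeline for architectural generation. Star Wars Outlaws uses procedural generation for open-world city layouts and architectural details. Helldivers 2 uses procedural level generation for mission arenas. None of these three ships on UE5, though: Control runs on Northlight, Star Wars Outlaws on Snowdrop, and Helldivers 2 on Stingray, so they demonstrate authored procedural generation rather than the Houdini-to-UE5 pipeline specifically. Several unannounced AAA titles are reportedly in development with Houdini-first pipelines. The ecosystem is still early, but adoption is accelerating in 2024-2025.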

Can I use Houdini and UE5 to create my own procedural game environments?

Yes. Houdini Apprentice is free for non-commercial learning, Houdini Indie is a reduced-price license for developers earning under $100K a year, and the Houdini Engine for Unreal plugin is a free download that works alongside a Houdini license. The cost barrier is low but the time investment is high: Houdini has a steep learning curve. Expect 3-6 months of serious study before you’re proficient. But if you’re willing to invest that time, indie developers can absolutely create high-quality procedurally generated environments. The tools are accessible; the skill barrier is real.

How does procedural generation affect game performance and file size?

Procedural generation does not make rendering cheaper by itself; the performance headroom comes from Nanite, which virtualizes geometry for hand-modeled and generated assets alike. File size can be smaller when the game stores rules and parameters and generates geometry at load time, rather than shipping millions of unique baked polygons. However, if you generate more assets than you would’ve hand-crafted, performance can degrade. The key is balance: use procedural generation to create density you couldn’t achieve manually, but still optimize aggressively. Helldivers 2 demonstrates this: its procedurally generated levels maintain consistent performance despite massive environmental variation.
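A rough back-of-envelope calculation shows why storing rules can be smaller than storing geometry. The Python sketch below assumes an uncompressed vertex layout (position, normal, and UV as 32-bit floats), 32-bit indices, a 5,000-triangle flower like the lotus example earlier, and a recipe of one seed plus six parameters. Real engines compress meshes and share data between instances, so treat these figures as illustrative only.

```python
FLOAT_BYTES = 4
INDEX_BYTES = 4


def baked_mesh_bytes(vertices, triangles):
    """Approximate size of one unique, uncompressed mesh."""
    per_vertex = (3 + 3 + 2) * FLOAT_BYTES  # position + normal + UV
    return vertices * per_vertex + triangles * 3 * INDEX_BYTES


def recipe_bytes(parameter_count):
    """Approximate size of one procedural recipe: a 64-bit seed plus floats."""
    return 8 + parameter_count * FLOAT_BYTES


flower_count = 10_000
baked_total = flower_count * baked_mesh_bytes(vertices=2_500, triangles=5_000)
recipe_total = flower_count * recipe_bytes(parameter_count=6)

print(f"baked:  {baked_total / 1e6:.1f} MB")   # baked:  1400.0 MB
print(f"recipe: {recipe_total / 1e6:.2f} MB")  # recipe: 0.32 MB
```

The saving only materializes if the geometry is rebuilt from those recipes at load or run time. When generated assets are baked in the editor and shipped as static meshes, the build still carries the baked data.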

Will procedural AI replace human environment artists?

No, but it will change their role. Instead of spending 80% of time on manual modeling and placement, environment artists will spend 80% of time designing procedural systems and refining rules. The demand for artists is shifting from polygon-pushers to systems designers. Studios still need human creativity and art direction—procedural generation is a tool that amplifies it, not replaces it. Artists who learn Houdini become more valuable, not obsolete.